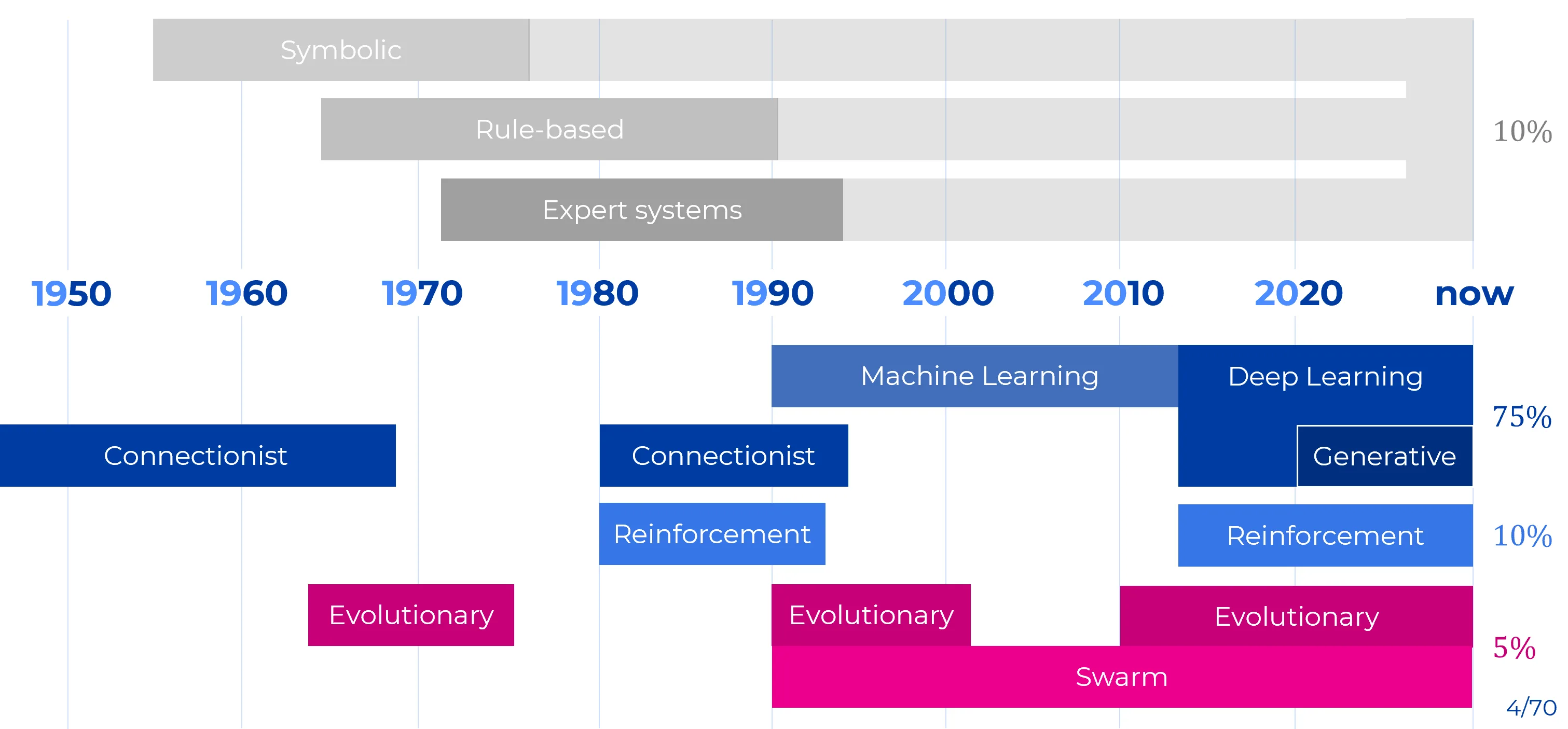

Timeline Overview: The Major Paradigms of Artificial Intelligence

Artificial Intelligence has never been a single unified methodology. Its development is better understood as the interaction of several major paradigms, each built around a different answer to a foundational question: how knowledge should be represented, how learning should occur, how decisions should be made, and how complex solutions should be discovered.

Scope of the Overview

This note offers a historical and conceptual map of the major paradigms of Artificial Intelligence from the mid-twentieth century to the present.

It emphasizes:

- their core methodological commitments

- their historical periods of greatest influence

- their distinct scientific strengths and limitations

- and the increasing tendency of modern AI to emerge through hybridization rather than replacement

Methodological Clarification

The paradigms listed below are not all of the same type.

- Symbolic AI, Statistical Learning, and Connectionism are broad traditions of representation and learning.

- Reinforcement Learning is primarily a framework for learning through interaction and reward.

- Evolutionary and Swarm methods are families of optimization and search procedures.

Their historical interaction is therefore best understood as overlap and recombination, not as a simple tournament with a single winner.

A Conceptual Map of the Field

| Paradigm | Foundational Question | Core Commitment | Historical Peak | Contemporary Status |
|---|---|---|---|---|
| Symbolic AI | How can intelligence be expressed through explicit knowledge and formal reasoning? | Symbols, logic, rules, structured inference | 1950s-1980s | Specialized but indispensable |
| Statistical Machine Learning | How can predictive structure be inferred from data under uncertainty? | Probability, geometry, estimation, regularization | 1990s-2010s | Foundational and still highly active |
| Connectionism / Deep Learning | How can internal representations be learned through adaptive networks? | Distributed representations, end-to-end learning, scalable optimization | 2012-present | Dominant paradigm |
| Reinforcement Learning | How should an agent act to maximize long-term reward? | Interaction, delayed feedback, sequential decision-making | 1990s-present, with major surges after 2013 | High-value specialized paradigm |
| Evolutionary / Swarm Intelligence | How can strong solutions emerge without gradients or explicit symbolic models? | Population-based search, selection, decentralized optimization | 1960s-present | Durable niche with strategic importance |

A Non-Linear History

The history of AI is not a sequence in which one paradigm fully replaces another. More often, older paradigms lose cultural centrality while retaining technical importance, later reappearing as components inside broader hybrid systems.

1950s-1980s | The Symbolic Paradigm

The symbolic tradition, often called Classical AI or GOFAI (Good Old-Fashioned AI), treated intelligence as the manipulation of explicit symbols according to formal rules. In this view, cognition is fundamentally structured: if the correct symbolic representation and inference procedure are available, intelligent behavior can in principle be derived through reasoning.

| Sub-current | Core Methodology | Historical Role |
|---|---|---|
| Symbolic AI / GOFAI | Logic, symbolic representation, heuristic search | Early dominant paradigm of AI |
| Rule-based Systems | Explicit IF-THEN rules, deterministic inference | Practical industrial automation |
| Expert Systems | Knowledge bases + inference engines | Research prototypes in the 1970s, commercial success in the 1980s |
| Automated Reasoning | Deduction, theorem proving, satisfiability | Formal reasoning and verification |
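Rule-based and expert systems use explicit IF-THEN inference. A forward-chaining loop illustrates this kind of inference. The following is a minimal Python sketch. The rule format, the function name `forward_chain`, and the animal-classification rules are hypothetical. They are not taken from any particular expert-system shell.

```python
from typing import FrozenSet, List, Set, Tuple

# A rule pairs a set of premise facts with one conclusion: IF all premises THEN conclusion.
Rule = Tuple[FrozenSet[str], str]


def forward_chain(facts: Set[str], rules: List[Rule]) -> Set[str]:
    """Fire rules repeatedly until no new fact can be derived (a fixed point)."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            # A rule fires only when every premise is known and it adds a new fact.
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


# Hypothetical toy knowledge base in the style of classic textbook examples.
RULES: List[Rule] = [
    (frozenset({"has_feathers"}), "bird"),
    (frozenset({"bird", "cannot_fly", "swims"}), "penguin"),
    (frozenset({"has_fur"}), "mammal"),
]

if __name__ == "__main__":
    print(sorted(forward_chain({"has_feathers", "cannot_fly", "swims"}, RULES)))
```

Every derived fact can be traced back to the rule that produced it. This is the kind of auditability described below.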

The strength of symbolic AI lies in explicit structure. Knowledge is interpretable, rules can often be audited, and formal guarantees are sometimes possible. This makes symbolic methods especially valuable where correctness and compositional control are essential.

Its limitations are equally clear. Symbolic systems tend to be brittle in noisy environments, expensive to author by hand, and poorly suited to perception-heavy tasks such as vision and speech, where the relevant structure is not naturally given in symbolic form.

Enduring Contribution

Symbolic AI established one of the deepest questions in the field: whether intelligence is fundamentally a matter of structured reasoning over explicit representations.

Even where symbolic systems are no longer dominant, this question continues to shape research on reasoning, planning, verification, and neuro-symbolic integration.

1990s-2010s | Statistical Machine Learning and Probabilistic AI

The rise of statistical machine learning shifted the emphasis from hand-authored rules to inference from data. Rather than asking how to encode intelligence symbolically, this paradigm asked how predictive structure could be estimated from examples using probability theory, geometry, and optimization.

| Sub-current | Representative Methods | Scientific Emphasis |
|---|---|---|
| Probabilistic Modeling | Bayesian networks, Hidden Markov Models, graphical models | Uncertainty, latent structure, inference |
| Kernel Methods | Support Vector Machines, kernel ridge regression | Geometric decision boundaries in high-dimensional spaces |
| Classical Supervised Learning | Logistic regression, decision trees, random forests, boosting | Generalization from engineered features |
| Unsupervised Statistical Methods | PCA, mixture models, clustering | Structure discovery without labels |
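Hidden Markov Models are one example of the probabilistic modeling listed above. In an HMM, "inference over latent structure" means summing over hidden state paths. The forward algorithm performs this sum efficiently. Below is a minimal Python sketch. The two-state weather model and all of its probabilities are toy values chosen for illustration. A brute-force enumeration over every hidden path is included as a cross-check.

```python
from itertools import product
from typing import Dict, List

# Toy two-state HMM; every probability here is an illustrative assumption.
STATES = ["Rainy", "Sunny"]
START = {"Rainy": 0.6, "Sunny": 0.4}
TRANS = {
    "Rainy": {"Rainy": 0.7, "Sunny": 0.3},
    "Sunny": {"Rainy": 0.4, "Sunny": 0.6},
}
EMIT = {
    "Rainy": {"walk": 0.1, "shop": 0.4, "clean": 0.5},
    "Sunny": {"walk": 0.6, "shop": 0.3, "clean": 0.1},
}


def forward(observations: List[str]) -> float:
    """P(observations), summing over hidden paths in O(T * N^2) time."""
    # alpha[s] = P(o_1..o_t, hidden state at time t is s)
    alpha: Dict[str, float] = {s: START[s] * EMIT[s][observations[0]] for s in STATES}
    for obs in observations[1:]:
        alpha = {
            s: EMIT[s][obs] * sum(alpha[prev] * TRANS[prev][s] for prev in STATES)
            for s in STATES
        }
    return sum(alpha.values())


def brute_force(observations: List[str]) -> float:
    """The same quantity by enumerating all N^T hidden paths explicitly."""
    total = 0.0
    for path in product(STATES, repeat=len(observations)):
        p = START[path[0]] * EMIT[path[0]][observations[0]]
        for t in range(1, len(observations)):
            p *= TRANS[path[t - 1]][path[t]] * EMIT[path[t]][observations[t]]
        total += p
    return total


if __name__ == "__main__":
    seq = ["walk", "shop", "clean"]
    print(forward(seq), brute_force(seq))  # both approximately 0.033612
```

Both functions return the same number. Only the forward recursion stays tractable as sequences grow.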

This paradigm made several lasting contributions to AI:

- explicit treatment of uncertainty

- rigorous thinking about generalization

- principled regularization

- strong performance in small-data, tabular, and structured settings

- mature frameworks for latent-variable modeling
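Two of these contributions are probabilistic outputs and principled regularization. Both appear in regularized logistic regression. Below is a minimal pure-Python sketch trained by batch gradient descent. The toy data, learning rate, step count, and the names `fit_logistic` and `sigmoid` are assumptions for illustration. An L2 penalty of `l2/2 * w**2` is added to the mean log-loss, and the bias is left unpenalized.

```python
import math
from typing import List, Tuple


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic(
    xs: List[float], ys: List[int], l2: float, lr: float = 0.1, steps: int = 2000
) -> Tuple[float, float]:
    """Fit p(y=1|x) = sigmoid(w*x + b) by gradient descent on the L2-penalised mean log-loss."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        grad_w, grad_b = 0.0, 0.0
        for x, y in zip(xs, ys):
            err = sigmoid(w * x + b) - y  # derivative of the log-loss w.r.t. the logit
            grad_w += err * x / n
            grad_b += err / n
        grad_w += l2 * w  # gradient of the penalty (l2/2)*w^2; bias is not penalised
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Hypothetical 1-D data with overlapping classes, so the unpenalised fit stays finite.
XS = [-2.0, -1.5, -1.0, -0.5, 0.5, 1.0, 1.5, 2.0]
YS = [0, 0, 0, 1, 0, 1, 1, 1]

if __name__ == "__main__":
    for l2 in (0.0, 1.0):
        w, b = fit_logistic(XS, YS, l2)
        print(f"l2={l2}: w={w:.3f}, p(y=1|x=1)={sigmoid(w + b):.3f}")
```

The model outputs calibrated-looking probabilities rather than hard labels. Increasing `l2` shrinks the weight toward zero, which trades training fit for smoother, less confident predictions.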

Its limitations became more visible in domains where hand-engineered features were inadequate and where raw unstructured data, such as images, audio, and text, demanded richer internal representation learning.

Historical Significance

Deep learning did not replace an empty field. It emerged after decades of progress in statistical learning, and it inherited many of its central concerns:

- optimization

- regularization

- generalization

- probabilistic modeling

- latent representation

1940s-Present | Connectionism and the Neural Tradition

Connectionism approaches intelligence as an emergent property of large networks of simple adaptive units. The central claim is that rich behavior need not be explicitly programmed if the system can learn sufficiently expressive internal representations from data.

| Phase | Historical Meaning |
|---|---|
| 1940s-1960s | Early neural abstraction: McCulloch-Pitts neurons, Hebbian learning, the perceptron |
| 1980s-1990s | Revival through backpropagation and multi-layer networks |
| 2006-2011 | Transition to deep learning: layer-wise unsupervised pretraining, improved initialization, early GPU experimentation |
| 2012-present | Deep learning era: CNNs, RNNs, Transformers, large-scale representation learning |
| 2022-present | Foundation model era: LLMs, diffusion models, multimodal and agentic systems |
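The perceptron from the earliest phase learns a linear threshold unit by error correction. Below is a minimal Python sketch of that learning rule. The function names, learning rate, and epoch cap are assumptions. It shows the rule learning AND, which is linearly separable. It also shows the rule failing on XOR, because no single linear boundary separates XOR. This limitation was part of what multi-layer networks later addressed.

```python
from typing import List, Tuple

Sample = Tuple[Tuple[float, float], int]


def predict(w: List[float], b: float, x1: float, x2: float) -> int:
    """Threshold unit: output 1 when the weighted sum is positive."""
    return 1 if w[0] * x1 + w[1] * x2 + b > 0 else 0


def train_perceptron(data: List[Sample], epochs: int = 20, lr: float = 1.0) -> Tuple[List[float], float]:
    """Perceptron rule: adjust weights only when a sample is misclassified."""
    w, b = [0.0, 0.0], 0.0
    for _ in range(epochs):
        mistakes = 0
        for (x1, x2), target in data:
            update = lr * (target - predict(w, b, x1, x2))  # 0 if correct, +/-lr if wrong
            if update != 0:
                w[0] += update * x1
                w[1] += update * x2
                b += update
                mistakes += 1
        if mistakes == 0:  # every sample classified correctly: stop early
            break
    return w, b


AND: List[Sample] = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR: List[Sample] = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

if __name__ == "__main__":
    for name, data in (("AND", AND), ("XOR", XOR)):
        w, b = train_perceptron(data)
        correct = sum(predict(w, b, *x) == t for x, t in data)
        print(f"{name}: {correct}/4 correct")
```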

The defining strength of connectionism is representation learning. Neural systems do not merely fit outputs to inputs; they learn internal spaces in which the problem itself becomes easier to solve. This is why deep learning succeeded so decisively in perception-heavy and generative domains.

| Core Technical Property | Why It Matters |
|---|---|
| Distributed representations | Concepts are encoded across many parameters and activations rather than in isolated symbolic slots |
| Feature learning | High-level abstractions can emerge directly from raw data |
| End-to-end differentiability | Entire pipelines can be optimized jointly |
| Scalability | Performance often improves as data, model size, and compute increase |
| Architectural flexibility | The same paradigm supports CNNs, RNNs, Transformers, GNNs, autoencoders, and generative models |
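End-to-end differentiability means backpropagation can compute the loss gradient for every parameter in one backward pass through the chain rule. Below is a minimal pure-Python sketch. It uses a hypothetical 2-3-1 network with a tanh hidden layer and a squared-error loss. The backprop gradients are checked against central finite differences. The architecture, seed, and names such as `loss_and_grads` are illustrative assumptions, not a reference implementation.

```python
import math
import random
from typing import Dict, List, Tuple

Params = Dict[str, List[List[float]]]


def init_params(seed: int = 0) -> Params:
    rng = random.Random(seed)
    return {
        "W1": [[rng.uniform(-1, 1) for _ in range(2)] for _ in range(3)],  # 3 hidden x 2 inputs
        "b1": [[rng.uniform(-1, 1)] for _ in range(3)],
        "W2": [[rng.uniform(-1, 1) for _ in range(3)]],  # 1 output x 3 hidden
        "b2": [[rng.uniform(-1, 1)]],
    }


def loss_and_grads(p: Params, x: List[float], y: float) -> Tuple[float, Params]:
    # Forward pass: tanh hidden layer followed by a linear output unit.
    z1 = [sum(p["W1"][i][j] * x[j] for j in range(2)) + p["b1"][i][0] for i in range(3)]
    h = [math.tanh(z) for z in z1]
    y_hat = sum(p["W2"][0][i] * h[i] for i in range(3)) + p["b2"][0][0]
    loss = 0.5 * (y_hat - y) ** 2

    # Backward pass: propagate dLoss/dy_hat back through each layer.
    d_yhat = y_hat - y
    d_z1 = [d_yhat * p["W2"][0][i] * (1.0 - h[i] ** 2) for i in range(3)]  # tanh' = 1 - tanh^2
    grads: Params = {
        "W2": [[d_yhat * h[i] for i in range(3)]],
        "b2": [[d_yhat]],
        "W1": [[d_z1[i] * x[j] for j in range(2)] for i in range(3)],
        "b1": [[d_z1[i]] for i in range(3)],
    }
    return loss, grads


def max_gradient_error(p: Params, x: List[float], y: float, eps: float = 1e-6) -> float:
    """Largest gap between backprop gradients and central finite differences."""
    _, grads = loss_and_grads(p, x, y)
    worst = 0.0
    for name, matrix in p.items():
        for i, row in enumerate(matrix):
            for j in range(len(row)):
                original = row[j]
                row[j] = original + eps
                loss_plus, _ = loss_and_grads(p, x, y)
                row[j] = original - eps
                loss_minus, _ = loss_and_grads(p, x, y)
                row[j] = original  # restore the parameter
                numeric = (loss_plus - loss_minus) / (2 * eps)
                worst = max(worst, abs(numeric - grads[name][i][j]))
    return worst


if __name__ == "__main__":
    print(max_gradient_error(init_params(0), [0.5, -1.0], 0.3))  # expected to be tiny
```

Because every stage is differentiable, one backward pass updates all layers jointly. The same principle scales to much deeper pipelines.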

Why Connectionism Became Dominant

Connectionism became the central paradigm of modern AI because it combined three unusually powerful properties:

- it learns from data rather than relying on handcrafted features,

- it scales with modern hardware,

- and it can be specialized into many architectures without abandoning its core principles.

A Deeper Reading

The rise of deep learning was not merely an empirical success. It marked a methodological shift from explicitly specified intelligence to learned internal organization.

1980s-Present | Reinforcement Learning

Reinforcement Learning (RL) studies how an agent should choose actions so as to maximize cumulative reward over time. It differs from standard supervised learning because the correct action is not usually given in advance; it must be discovered through interaction, exploration, and delayed feedback.
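"Cumulative reward over time" is commonly formalized as the discounted return. The formulation below is the standard textbook one. The discount factor $\gamma$ is a modelling choice and is not fixed by the text above.

$$
G_t = \sum_{k=0}^{\infty} \gamma^{k}\, r_{t+k+1}, \qquad 0 \le \gamma < 1
$$

The agent seeks a policy $\pi$ that maximizes $\mathbb{E}_\pi[G_t]$. The condition $\gamma < 1$ keeps the sum finite for bounded rewards. As a worked example, take $\gamma = 0.9$ and rewards $r_1 = 0$, $r_2 = 0$, $r_3 = 1$, with nothing afterwards. Then $G_0 = 0 + 0.9 \cdot 0 + 0.9^2 \cdot 1 = 0.81$. The delayed reward still counts, but it counts for less than the same reward received immediately.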

| Phase | Key Developments |
|---|---|
| 1980s-1990s | Temporal-Difference learning, Q-learning, policy-gradient foundations |
| 2013-2016 | DQN, AlphaGo, deep RL as a major public breakthrough |
| 2018-present | MuZero, robotics, large-scale simulation, RLHF for language-model alignment |

RL is not a rival representational theory in the same sense as symbolic AI or connectionism. It is better understood as a learning framework for agency. It can be combined with symbolic world models, neural policies, search procedures, or preference-based optimization.

Its enduring importance lies in formalizing one of the hardest problems in AI: how to act under uncertainty when feedback is delayed and consequences unfold over time.
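Tabular Q-learning, listed among the 1980s-1990s developments, shows delayed credit assignment concretely. Only the final step earns reward, yet temporal-difference updates propagate that value back to earlier states. Below is a minimal Python sketch. The five-state chain environment, the `step` function, and all hyperparameters are hypothetical choices for illustration.

```python
import random
from typing import List, Tuple

N_STATES = 5  # states 0..4 on a line; state 4 is the terminal goal
GOAL = N_STATES - 1
LEFT, RIGHT = 0, 1


def step(state: int, action: int) -> Tuple[int, float, bool]:
    """Hypothetical deterministic chain: reward 1.0 only when the goal is reached."""
    nxt = max(0, state - 1) if action == LEFT else state + 1
    return nxt, (1.0 if nxt == GOAL else 0.0), nxt == GOAL


def q_learning(episodes: int = 500, alpha: float = 0.5, gamma: float = 0.9,
               epsilon: float = 0.2, seed: int = 0, max_steps: int = 100) -> List[List[float]]:
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        for _ in range(max_steps):
            # Epsilon-greedy behaviour policy; ties are broken at random.
            if rng.random() < epsilon or q[state][LEFT] == q[state][RIGHT]:
                action = rng.choice([LEFT, RIGHT])
            else:
                action = LEFT if q[state][LEFT] > q[state][RIGHT] else RIGHT
            nxt, reward, done = step(state, action)
            # TD target bootstraps from the best next action; terminal states are worth 0.
            target = reward if done else reward + gamma * max(q[nxt])
            q[state][action] += alpha * (target - q[state][action])
            state = nxt
            if done:
                break
    return q


def greedy_policy(q: List[List[float]]) -> List[int]:
    return [RIGHT if q[s][RIGHT] > q[s][LEFT] else LEFT for s in range(GOAL)]


if __name__ == "__main__":
    q = q_learning()
    print(greedy_policy(q), [round(q[s][RIGHT], 3) for s in range(GOAL)])
```

With these settings, the learned value of moving right should approach $\gamma^{3-s}$ in state $s$. The single reward at the end is discounted back along the path, which is the credit assignment described above.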

Distinctive Contribution

Reinforcement Learning introduced the idea that intelligence is not only about representation or prediction, but also about policy formation, credit assignment over time, and goal-directed adaptation.

1960s-Present | Evolutionary Computation and Swarm Intelligence

Evolutionary and swarm-based methods solve problems through population-level search rather than gradient descent or symbolic derivation. Their central idea is that useful structure can emerge through variation, selection, recombination, or decentralized collective behavior.

| Family | Primary Utility | Representative Methods |
|---|---|---|
| Genetic Algorithms | Combinatorial optimization and design search | Mutation, crossover, selection |
| Evolution Strategies | Gradient-free optimization and policy search | CMA-ES, OpenAI-ES |
| Swarm Intelligence | Decentralized search and coordination | Particle Swarm Optimization, Ant Colony Optimization |
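The genetic-algorithm operators named in the table are mutation, crossover, and selection. They can be combined into a small working loop. Below is a minimal Python sketch on the OneMax toy problem, which maximizes the number of 1-bits. The objective, population size, tournament size, mutation rate, and elitism are all illustrative assumptions, not prescribed settings.

```python
import random
from typing import List

Genome = List[int]


def fitness(g: Genome) -> int:
    """OneMax: number of 1-bits (a stand-in objective for illustration)."""
    return sum(g)


def tournament(pop: List[Genome], rng: random.Random, k: int = 3) -> Genome:
    """Selection: the fittest of k randomly sampled individuals."""
    return max(rng.sample(pop, k), key=fitness)


def crossover(a: Genome, b: Genome, rng: random.Random) -> Genome:
    """One-point crossover: prefix of one parent, suffix of the other."""
    cut = rng.randrange(1, len(a))
    return a[:cut] + b[cut:]


def mutate(g: Genome, rng: random.Random, rate: float) -> Genome:
    """Bit-flip mutation: each gene flips independently with probability `rate`."""
    return [1 - bit if rng.random() < rate else bit for bit in g]


def run_ga(n_bits: int = 20, pop_size: int = 30, generations: int = 200, seed: int = 0) -> List[int]:
    """Return the best fitness seen at the start of each generation."""
    rng = random.Random(seed)
    pop = [[rng.randint(0, 1) for _ in range(n_bits)] for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        elite = max(pop, key=fitness)  # elitism: the best individual survives unchanged
        history.append(fitness(elite))
        children = [elite]
        while len(children) < pop_size:
            child = crossover(tournament(pop, rng), tournament(pop, rng), rng)
            children.append(mutate(child, rng, rate=1.0 / n_bits))
        pop = children
    return history


if __name__ == "__main__":
    history = run_ga()
    print(history[0], history[-1])
```

No gradient of `fitness` is ever computed. The search relies only on evaluating candidates and preferentially recombining the better ones.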

These approaches are especially valuable when:

- the objective is discontinuous or poorly behaved

- gradients are unavailable or misleading

- the search space includes structure not easily captured by gradient-based learning

- multiple candidate solutions must be explored in parallel
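Particle Swarm Optimization, named in the table above, meets several of these conditions at once. It treats the objective as a black box and explores many candidate solutions in parallel. Below is a minimal Python sketch. The sphere objective, the search bounds, and the coefficients (inertia `w`, attraction weights `c1`, `c2`) are common illustrative choices, not tuned recommendations.

```python
import random
from typing import Callable, List, Tuple

Vector = List[float]


def sphere(x: Vector) -> float:
    """Toy objective with minimum 0 at the origin; PSO only evaluates it."""
    return sum(v * v for v in x)


def pso(f: Callable[[Vector], float], dim: int = 2, n_particles: int = 30, iters: int = 200,
        w: float = 0.7, c1: float = 1.5, c2: float = 1.5, bound: float = 5.0,
        seed: int = 0) -> Tuple[Vector, float]:
    rng = random.Random(seed)
    pos = [[rng.uniform(-bound, bound) for _ in range(dim)] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    best_pos = [p[:] for p in pos]  # each particle's personal best position
    best_val = [f(p) for p in pos]
    g = min(range(n_particles), key=lambda i: best_val[i])
    g_pos, g_val = best_pos[g][:], best_val[g]  # best position found by the whole swarm
    for _ in range(iters):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                # Inertia, plus a pull toward the personal best and toward the swarm best.
                vel[i][d] = (w * vel[i][d]
                             + c1 * r1 * (best_pos[i][d] - pos[i][d])
                             + c2 * r2 * (g_pos[d] - pos[i][d]))
                pos[i][d] += vel[i][d]
            val = f(pos[i])
            if val < best_val[i]:
                best_pos[i], best_val[i] = pos[i][:], val
                if val < g_val:
                    g_pos, g_val = pos[i][:], val
    return g_pos, g_val


if __name__ == "__main__":
    print(pso(sphere))
```

Coordination is decentralized. Each particle uses only its own memory and the shared swarm best, with no model of the objective's shape.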

Enduring Role

Evolutionary computation remains strategically important in:

- hyperparameter optimization

- neural architecture search

- engineering design

- robotics and morphology optimization

- robust search in difficult non-differentiable spaces

The Present Landscape: Convergence Rather Than Replacement

The contemporary AI landscape is increasingly shaped by hybrid systems. Many of the most capable current systems no longer fit neatly within a single historical paradigm.

| Hybrid Configuration | Meaning |
|---|---|
| Deep RL | Neural representation learning combined with reward-driven control |
| Neuro-symbolic AI | Learned representations combined with logical or symbolic structure |
| Retrieval-augmented systems | Neural models coupled to external memory or knowledge stores |
| Model-based agents | Predictive world models combined with planning |
| Evolutionary AutoML / NAS | Evolutionary search used to optimize neural systems |

This is the crucial point. The frontier of AI is no longer defined simply by choosing between symbols, statistics, networks, or search. It is increasingly defined by the selective integration of their strengths.
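Retrieval augmentation, one of the hybrid configurations in the table, has a simple core. Text is mapped to vectors, the closest stored passages are found, and they are placed in front of the query. Below is a minimal Python sketch of that pattern. It makes one loud assumption: a bag-of-words counter stands in for the learned neural encoder a real system would use. The names `embed`, `MemoryStore`, and `build_prompt` are hypothetical, and no language model is called.

```python
import math
import re
from collections import Counter
from typing import List, Tuple


def embed(text: str) -> Counter:
    """Stand-in encoder (assumption): bag-of-words counts instead of a neural embedding."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class MemoryStore:
    """External memory: passages stored with their vectors, queried by similarity."""

    def __init__(self, docs: List[str]):
        self.entries = [(doc, embed(doc)) for doc in docs]

    def retrieve(self, query: str, k: int = 1) -> List[Tuple[str, float]]:
        q = embed(query)
        scored = [(doc, cosine(q, vec)) for doc, vec in self.entries]
        return sorted(scored, key=lambda s: s[1], reverse=True)[:k]


def build_prompt(query: str, store: MemoryStore, k: int = 1) -> str:
    """Prepend retrieved passages to the query for a hypothetical downstream model."""
    context = "\n".join(doc for doc, _ in store.retrieve(query, k))
    return f"Context:\n{context}\n\nQuestion: {query}"


DOCS = [
    "Q-learning is an off-policy temporal-difference method.",
    "Particle swarm optimization is a population-based search method.",
    "Expert systems combine a knowledge base with an inference engine.",
]

if __name__ == "__main__":
    print(build_prompt("What does an expert system combine?", MemoryStore(DOCS)))
```

The store can be updated without retraining anything. This separation between learned representation and explicit memory is what makes the configuration a hybrid.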

The Strong Form of the Historical Lesson

AI did not evolve by discovering one final paradigm and discarding all previous ones. It evolved by repeatedly discovering that each paradigm solves some problems elegantly and fails on others.

Why This Matters Conceptually

Symbolic AI contributes explicit structure. Statistical learning contributes uncertainty and regularization. Connectionism contributes scalable representation learning. Reinforcement learning contributes agency and sequential adaptation. Evolutionary methods contribute gradient-free exploration and search.

Interpretive Conclusion

A serious understanding of AI requires resisting two simplifications.

The first is the symbolic-era simplification: that intelligence can be reduced to explicit rules. The second is the deep-learning-era simplification: that current neural dominance exhausts the conceptual space of intelligence.

Neither is adequate.

Key Takeaway

The history of AI is best understood as a compounding history of paradigms, not a sequence of absolute replacements.

- Symbolic AI made structure and formal reasoning central.

- Statistical learning made uncertainty, estimation, and generalization central.

- Connectionism made representation learning and scalable optimization central.

- Reinforcement learning made agency and long-term decision-making central.

- Evolutionary methods made gradient-free exploration central.

Contemporary AI is increasingly built at the intersection of these traditions. Deep learning is the dominant substrate of the present, but the strongest future systems are likely to be those that combine neural representation learning with structure, memory, planning, and adaptive interaction.