Choosing an optimizer

Important

There is no universally best optimizer. What exists in practice is a combination of:

- strong defaults,

- recurring empirical tendencies,

- and task-dependent exceptions.

For this reason, optimizer choice should be treated as a disciplined empirical decision, not as a matter of doctrine.

Empirical benchmark

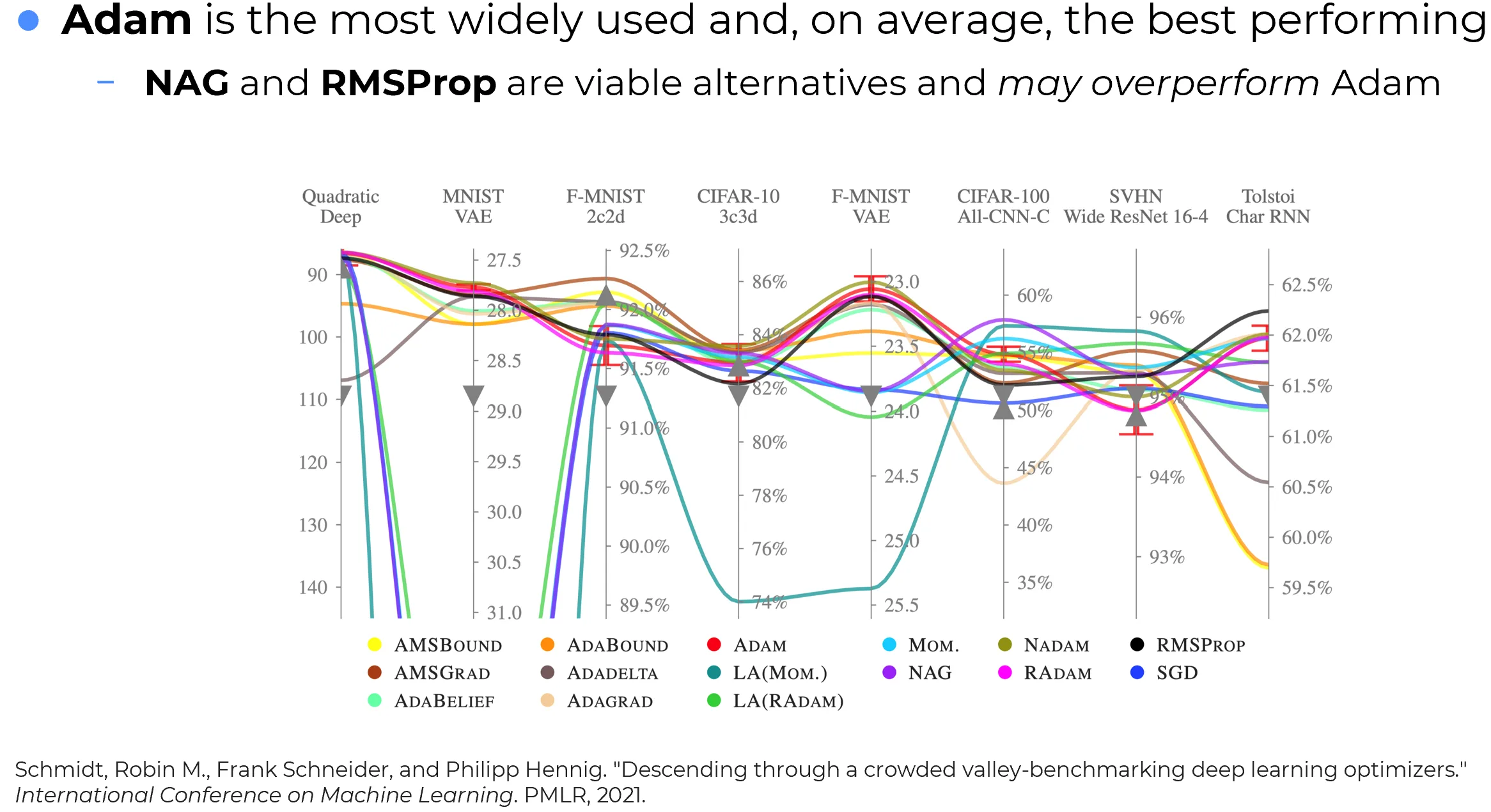

The figure above summarizes an empirical comparison across multiple tasks and neural-network architectures.

A careful reading of this benchmark supports a balanced conclusion:

- Adam achieves the strongest average performance across the benchmark considered;

- NAG and RMSProp remain strong competitors and can outperform Adam on specific tasks;

- no optimizer dominates uniformly on every problem.

How this figure should be interpreted

The statement “Adam performs best on average” is an aggregate empirical statement, not a theorem. It means that, after averaging over the tasks in the benchmark, Adam gave the best overall result. It does not mean that Adam is always the best choice for every dataset, architecture, or training regime.

First practical conclusion

When no strong task-specific prior is available, Adam is one of the safest starting points. But it should be treated as a strong default, not as a reason to stop comparing optimizers altogether.

A practical shortlist

In serious experiments, it is usually better to compare a small set of optimizers with genuinely different behaviors than to rely blindly on a single default.

A strong shortlist to try first

At the start of a new project, it is usually sensible to compare at least:

- Adam or AdamW

- SGD with Momentum or NAG

- RMSProp

These three choices cover distinct optimization philosophies:

- Adam / AdamW: adaptive, fast, and usually very effective from random initialization;

- SGD + Momentum / NAG: less adaptive, often slower at the beginning, but frequently competitive or superior in late-stage training and final generalization;

- RMSProp: adaptive and often robust when gradient magnitudes vary strongly across parameters or across time.
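To make the RMSProp entry above concrete, here is a minimal sketch of one update step in plain Python. The function name `rmsprop_step` and the hyperparameter values are illustrative assumptions, not settings taken from the benchmark. The example uses two coordinates whose gradients differ by four orders of magnitude, which is the situation the bullet describes.

```python
import math

def rmsprop_step(params, grads, sq_avg, lr=0.01, rho=0.9, eps=1e-8):
    """One RMSProp update on plain Python lists (illustrative helper).

    Returns the updated parameters and the updated running average
    of squared gradients.
    """
    new_params, new_sq_avg = [], []
    for p, g, s in zip(params, grads, sq_avg):
        s = rho * s + (1.0 - rho) * g * g      # EMA of squared gradients
        p = p - lr * g / (math.sqrt(s) + eps)  # step rescaled per coordinate
        new_params.append(p)
        new_sq_avg.append(s)
    return new_params, new_sq_avg

# Two coordinates with very different gradient magnitudes (made-up values).
params, sq_avg = [1.0, 1.0], [0.0, 0.0]
params, sq_avg = rmsprop_step(params, [100.0, 0.01], sq_avg)
# Both coordinates move by nearly the same amount, because each gradient
# is divided by the square root of its own running squared magnitude.
```

Plain SGD with the same learning rate would move the first coordinate 10,000 times further than the second on this step.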

Adam vs AdamW

If weight decay is required, the modern practical default is usually AdamW, not plain Adam with L2 mixed into the gradient. AdamW decouples weight decay from Adam’s adaptive rescaling and is therefore the cleaner choice in most modern training pipelines.
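The difference can be sketched for a single scalar parameter. The function `adam_like_step` and its `decoupled` flag are hypothetical names introduced only for this illustration, and the hyperparameter values are common defaults rather than recommendations from this text.

```python
import math

def adam_like_step(p, g, m, v, t, lr=1e-3, beta1=0.9, beta2=0.999,
                   eps=1e-8, weight_decay=0.0, decoupled=True):
    """One Adam-style step on a scalar parameter (illustrative sketch).

    decoupled=False: L2 penalty is added to the gradient (Adam + L2).
    decoupled=True:  decay is applied directly to the parameter (AdamW style).
    """
    if not decoupled:
        g = g + weight_decay * p              # penalty passes through the rescaling
    m = beta1 * m + (1 - beta1) * g           # first-moment EMA
    v = beta2 * v + (1 - beta2) * g * g       # second-moment EMA
    m_hat = m / (1 - beta1 ** t)              # bias correction
    v_hat = v / (1 - beta2 ** t)
    p_new = p - lr * m_hat / (math.sqrt(v_hat) + eps)
    if decoupled:
        p_new -= lr * weight_decay * p        # decay bypasses the rescaling
    return p_new, m, v

# Zero loss gradient, so only the weight-decay term acts on the parameter.
p_l2, _, _ = adam_like_step(2.0, 0.0, 0.0, 0.0, t=1, weight_decay=0.01, decoupled=False)
p_w, _, _ = adam_like_step(2.0, 0.0, 0.0, 0.0, t=1, weight_decay=0.01, decoupled=True)
shrink_l2 = 2.0 - p_l2   # roughly lr: the adaptive rescaling normalizes the penalty away
shrink_w = 2.0 - p_w     # roughly lr * weight_decay * p, as the decay coefficient intends
```

In the L2 variant the penalty is divided by the same second-moment estimate as the loss gradient, so the effective shrinkage no longer tracks the `weight_decay` value. The decoupled variant keeps the two mechanisms separate.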

Adam as a strong default

Adam is particularly effective when training begins from random initialization.

In that regime:

- gradient scales can differ substantially across parameters;

- the optimization trajectory is still far from a well-formed basin;

- rapid coordinatewise adaptation is often highly beneficial.

Because Adam combines:

- a first-moment EMA in the numerator,

- a second-moment EMA in the denominator,

- and bias correction during the startup phase,

it often makes rapid progress from the very first iterations.
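For reference, the three ingredients listed above can be written in the usual notation. The symbols are assumptions introduced here and are not defined elsewhere in this text: $g_t$ is the gradient at step $t$, $\alpha$ the learning rate, $\beta_1, \beta_2$ the EMA decay rates, $\epsilon$ a small constant, and $m_0 = v_0 = 0$.

$$
\begin{aligned}
m_t &= \beta_1 m_{t-1} + (1-\beta_1)\, g_t \\
v_t &= \beta_2 v_{t-1} + (1-\beta_2)\, g_t^2 \\
\hat m_t &= \frac{m_t}{1-\beta_1^t}, \qquad \hat v_t = \frac{v_t}{1-\beta_2^t} \\
\theta_t &= \theta_{t-1} - \alpha\, \frac{\hat m_t}{\sqrt{\hat v_t} + \epsilon}
\end{aligned}
$$

At $t = 1$ the corrections give $\hat m_1 = g_1$ and $\hat v_1 = g_1^2$, so each coordinate moves by approximately $\alpha \cdot \operatorname{sign}(g_1)$ regardless of the gradient's scale. Without bias correction, the first step would instead be scaled by $(1-\beta_1)/\sqrt{1-\beta_2}$, which is about $3.16$ for the common choice $\beta_1 = 0.9$, $\beta_2 = 0.999$.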

Info

This is one of the main reasons Adam became such a dominant default in deep learning practice: it tends to work well immediately, often with less learning-rate tuning than SGD-based methods.

Late-stage training and fine-tuning

The situation changes once the network is no longer at random initialization.

Suppose that:

- the model has already been trained,

- the parameters already lie in a reasonably good region of the loss landscape,

- and the goal is no longer broad exploration, but rather controlled exploitation.

In that regime, Adam may still work well, but its advantages are no longer automatic.

The correct concern is not the vague claim that Adam “emphasizes some neurons”. The more precise point is this:

- Adam rescales updates coordinatewise using recent gradient statistics;

- this strong adaptivity can accelerate fitting to the new objective or new dataset;

- but that same aggressiveness can sometimes move the model toward a solution that fits the new data quickly while preserving less of the previously learned structure, or that generalizes less well.

By contrast, SGD with Momentum or NAG often induces a more conservative trajectory in late-stage training. In some settings, this leads to better final generalization or more stable fine-tuning behavior.
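For comparison with the coordinatewise rescaling described above, here is a minimal sketch of the SGD + Momentum and NAG updates for a scalar parameter. The helper name `momentum_step` and the toy objective are assumptions made for illustration. Note that one global learning rate is used, with no rescaling by gradient statistics.

```python
def momentum_step(p, grad_fn, velocity, lr=0.1, mu=0.9, nesterov=False):
    """One SGD + Momentum step, or one NAG step if nesterov=True (sketch)."""
    # NAG evaluates the gradient at the look-ahead point p + mu * velocity;
    # classical momentum evaluates it at the current point p.
    g = grad_fn(p + mu * velocity) if nesterov else grad_fn(p)
    velocity = mu * velocity - lr * g
    return p + velocity, velocity

# Toy objective f(p) = 0.5 * p**2, whose gradient is simply p.
grad_fn = lambda p: p

p_hb, v_hb = 1.0, 0.0      # classical (heavy-ball) momentum
p_nag, v_nag = 1.0, 0.0    # Nesterov accelerated gradient
for _ in range(2):
    p_hb, v_hb = momentum_step(p_hb, grad_fn, v_hb)
    p_nag, v_nag = momentum_step(p_nag, grad_fn, v_nag, nesterov=True)
# The first step is identical for both methods because the velocity starts
# at zero. On the second step NAG sees the gradient at the look-ahead point
# and moves slightly less than classical momentum on this objective.
```

This only illustrates the mechanics of the two updates. It does not by itself demonstrate the generalization behavior discussed above, which remains an empirical observation.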

Fine-tuning takeaway

In fine-tuning or late-stage exploitation, Adam should not be assumed to be automatically optimal. Its adaptive speed can be an advantage, but in some cases SGD + Momentum/NAG yields a better final trade-off between adaptation and preservation.

Important nuance

This is an empirical tendency, not a hard law. The outcome depends on:

- the amount of new data,

- the similarity between pretraining and target data,

- the learning-rate schedule,

- the regularization strategy,

- and the extent of adaptation desired.

Recommended decision rule

A pragmatic decision rule is the following:

- Start with Adam or AdamW when training from scratch and a strong default is needed.

- Include SGD + Momentum/NAG in the comparison whenever final generalization matters or fine-tuning is involved.

- Include RMSProp when adaptive methods are desired and prior experience suggests it behaves well on the task family.

- Treat optimizer choice as a hyperparameter-selection problem, not as a fixed ideological commitment.
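The last rule can be sketched as a small search over (optimizer, learning rate) pairs. Everything below is a toy under stated assumptions: the quadratic objective stands in for a real validation metric, the learning-rate grid and step count are arbitrary, and the names `run_sgd_momentum`, `run_rmsprop`, `run_adam`, and `compare` are hypothetical.

```python
import math

def loss(w):
    # Toy ill-conditioned quadratic; a stand-in for a validation metric.
    return 0.5 * (w[0] * w[0] + 100.0 * w[1] * w[1])

def grad(w):
    return [w[0], 100.0 * w[1]]

def run_sgd_momentum(lr, steps, mu=0.9):
    w, vel = [1.0, 1.0], [0.0, 0.0]
    for _ in range(steps):
        g = grad(w)
        vel = [mu * v - lr * gi for v, gi in zip(vel, g)]
        w = [wi + v for wi, v in zip(w, vel)]
    return loss(w)

def run_rmsprop(lr, steps, rho=0.9, eps=1e-8):
    w, s = [1.0, 1.0], [0.0, 0.0]
    for _ in range(steps):
        g = grad(w)
        s = [rho * si + (1 - rho) * gi * gi for si, gi in zip(s, g)]
        w = [wi - lr * gi / (math.sqrt(si) + eps) for wi, gi, si in zip(w, g, s)]
    return loss(w)

def run_adam(lr, steps, beta1=0.9, beta2=0.999, eps=1e-8):
    w, m, v = [1.0, 1.0], [0.0, 0.0], [0.0, 0.0]
    for t in range(1, steps + 1):
        g = grad(w)
        m = [beta1 * mi + (1 - beta1) * gi for mi, gi in zip(m, g)]
        v = [beta2 * vi + (1 - beta2) * gi * gi for vi, gi in zip(v, g)]
        m_hat = [mi / (1 - beta1 ** t) for mi in m]
        v_hat = [vi / (1 - beta2 ** t) for vi in v]
        w = [wi - lr * mh / (math.sqrt(vh) + eps)
             for wi, mh, vh in zip(w, m_hat, v_hat)]
    return loss(w)

def compare(lrs=(1e-3, 1e-2, 1e-1), steps=100):
    """Return {optimizer_name: (best_final_loss, best_lr)} over the grid."""
    runners = {"sgd_momentum": run_sgd_momentum,
               "rmsprop": run_rmsprop,
               "adam": run_adam}
    results = {}
    for name, runner in runners.items():
        trials = []
        for lr in lrs:
            final = runner(lr, steps)
            if math.isfinite(final):      # discard diverged runs
                trials.append((final, lr))
        results[name] = min(trials)       # lowest final loss wins
    return results

results = compare()
for name, (best_loss, best_lr) in results.items():
    print(f"{name}: best lr = {best_lr}, final loss = {best_loss:.3e}")
```

Each optimizer gets its own learning-rate sweep, so the comparison is between tuned candidates rather than between arbitrary settings. A real comparison would also repeat runs over several seeds and select on held-out performance rather than on training loss.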

Summary

The most defensible practical view is this:

- Adam is one of the strongest and most reliable default optimizers in deep learning.

- NAG and RMSProp remain serious alternatives and can outperform Adam in specific regimes.

- No optimizer is uniformly best across all tasks.

The right habit is therefore not blind loyalty to one optimizer, but a short and well-designed comparison among a few strong candidates.