Dropout

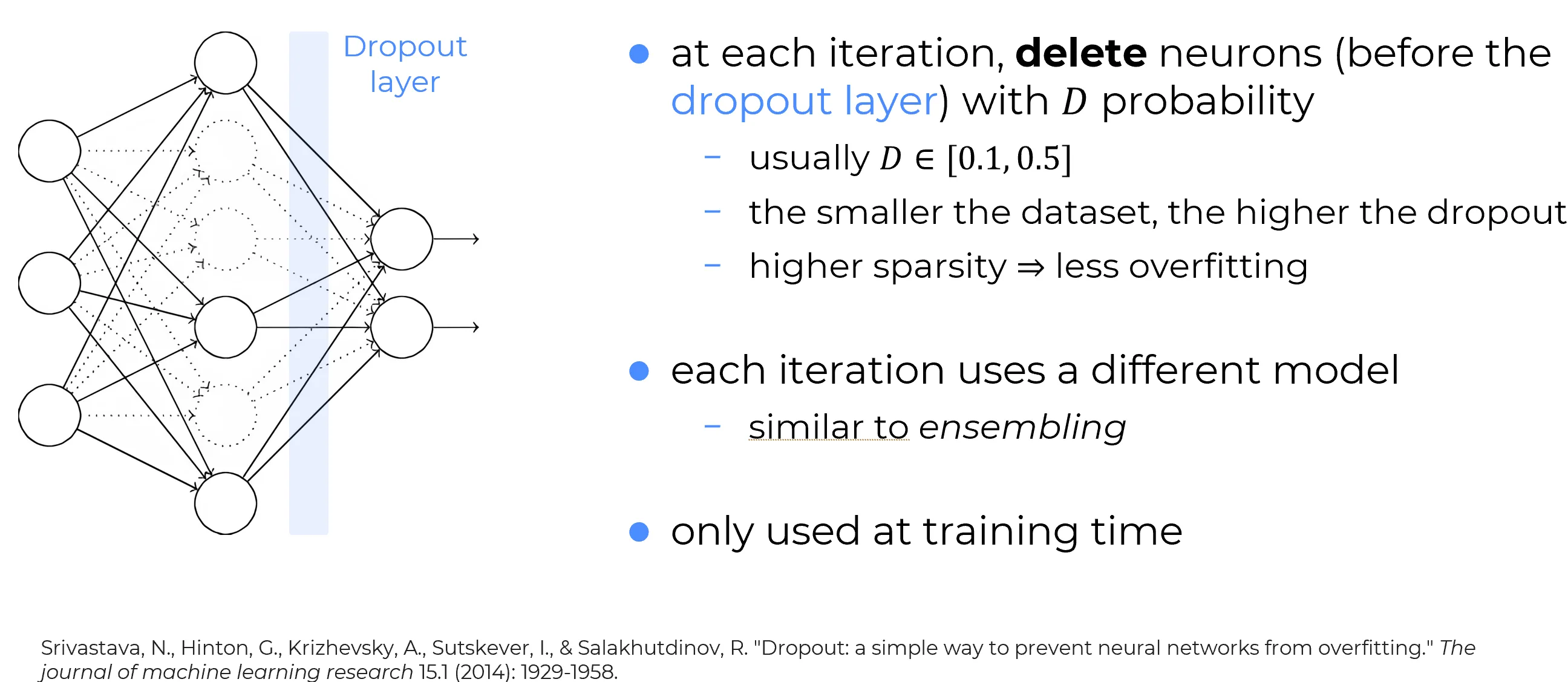

Dropout is a stochastic regularization technique conceptually very different from L2 regularization. Instead of modifying the loss by adding an explicit penalty term, it regularizes learning by randomly removing part of the network during training.

1. Training a neural network with dropout

Consider a supervised training pair $(x, y)$, where $x$ denotes the input and $y$ denotes the target.

Target notation

To remain consistent with the notation used in loss-functions:

- in binary settings, the target is often written as a scalar $y \in \{0, 1\}$;

- in multiclass softmax / NLL settings with $K$ classes, the target is typically represented as a one-hot vector $y \in \{0, 1\}^K$.

So the notation $y$ is being used here in a generic sense.

| Before Dropout | After Dropout |
|---|---|
| In the standard setting, one performs a forward pass of the input through the full network, followed by backpropagation to compute the gradient contribution of that example or mini-batch. | With dropout, one first samples a random binary mask and temporarily deactivates part of the hidden units. The input and output layers are typically left unchanged, while the hidden computation graph is thinned for that training step. |

Reading the diagram

In the diagram, the dropped neurons are still drawn, but ghosted (semi-transparent). This highlights an important point: the architecture itself has not been destroyed; only the active subnetwork used in the current optimization step has changed.

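In its simplest form, dropout acts on a hidden activation vector

$$h^{(l)} \in \mathbb{R}^{n_l}$$

by sampling independent Bernoulli variables

$$m_j^{(l)} \sim \mathrm{Bernoulli}(p), \qquad j = 1, \dots, n_l,$$

and collecting them into a mask vector

$$m^{(l)} = \big(m_1^{(l)}, \dots, m_{n_l}^{(l)}\big)^\top \in \{0, 1\}^{n_l}.$$

The masked activations are then

$$\tilde h^{(l)} = m^{(l)} \odot h^{(l)},$$

where $\odot$ denotes elementwise multiplication. Equivalently, componentwise,

$$\tilde h_j^{(l)} = m_j^{(l)} \, h_j^{(l)}.$$

Here $p \in (0, 1]$ is the keep probability.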

What is the dropout mask?

The vector $m^{(l)}$ is called the dropout mask. It is a binary selector that decides, for the current training step, which activations survive and which are set to zero.

- if $m_j^{(l)} = 1$, the unit is kept;

- if $m_j^{(l)} = 0$, the unit is dropped for that step.

So the mask does not change the architecture permanently. It only specifies which part of the layer is active in the current sampled subnetwork.
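The masking operation can be sketched in a few lines of NumPy. This is a minimal illustration, not a library implementation: the helper names `sample_mask` and `apply_mask` and the activation values are hypothetical, and the mask is drawn with independent Bernoulli entries as described above.

```python
import numpy as np

def sample_mask(shape, keep_prob, rng):
    """Draw a binary mask whose entries are independent Bernoulli(keep_prob)."""
    return (rng.random(shape) < keep_prob).astype(np.float64)

def apply_mask(h, mask):
    """Elementwise masking: kept units pass through, dropped units become zero."""
    return mask * h

rng = np.random.default_rng(0)
h = np.array([0.5, -1.2, 3.0, 0.7, 2.1])   # hypothetical hidden activations
mask = sample_mask(h.shape, keep_prob=0.5, rng=rng)
h_tilde = apply_mask(h, mask)

print("mask:   ", mask)
print("h_tilde:", h_tilde)
```

Calling `sample_mask` again yields a different pattern, while `h` and the layer shape stay the same: only the active subset changes.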

Keep probability vs. dropout rate

Many libraries expose the dropout rate

$$p_{\text{drop}} = 1 - p$$

instead of the keep probability $p$. For example, `dropout=0.5` usually means that each selected activation is zeroed with probability $p_{\text{drop}} = 0.5$, hence kept with probability $p = 0.5$.

If $p = 0.5$, then on average half of the selected hidden units remain active at each step.

This is a common choice, but it is not the definition of dropout. Different layers may use different keep probabilities, and in classical implementations the input layer, when regularized, is often assigned a milder dropout rate than hidden layers.

2. Training procedure with dropout

Once the mask has been sampled, the forward and backward passes are executed on the resulting thinned network.

- Sample a fresh binary mask for the chosen layer activations.

- Zero the units whose mask entry is $0$.

- Run forward propagation and backpropagation through this thinned network.

- Update the shared parameters using the resulting gradient estimate.

Then the process is repeated with a new random mask at the next optimization step.
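The four steps above can be sketched for a one-hidden-layer regression network with manual backpropagation. Everything here is an illustrative assumption: the function name `train_step`, the ReLU hidden layer, the squared-error loss, the toy data, and the hyperparameters. The sketch uses plain masking with no rescaling and draws an independent mask for every example in the mini-batch.

```python
import numpy as np

def train_step(params, x, y, keep_prob, lr, rng):
    """One SGD step on a thinned network (hypothetical one-hidden-layer model)."""
    W1, b1, W2, b2 = params

    # 1. Sample a fresh binary mask for the hidden activations.
    a = x @ W1 + b1                       # hidden pre-activations (batch, hidden)
    h = np.maximum(a, 0.0)                # ReLU activations
    m = (rng.random(h.shape) < keep_prob).astype(h.dtype)

    # 2. Zero the dropped units.
    h_tilde = m * h

    # 3. Forward and backward pass through the thinned network.
    y_hat = h_tilde @ W2 + b2             # predictions (batch, 1)
    loss = 0.5 * np.mean((y_hat - y) ** 2)
    d_yhat = (y_hat - y) / x.shape[0]     # gradient of the loss w.r.t. y_hat
    dW2 = h_tilde.T @ d_yhat
    db2 = d_yhat.sum(axis=0)
    d_h = (d_yhat @ W2.T) * m             # the mask also gates the gradient
    d_a = d_h * (a > 0)                   # ReLU derivative
    dW1 = x.T @ d_a
    db1 = d_a.sum(axis=0)

    # 4. Update the shared parameters.
    new_params = (W1 - lr * dW1, b1 - lr * db1, W2 - lr * dW2, b2 - lr * db2)
    return new_params, loss, m

rng = np.random.default_rng(0)
X = rng.normal(size=(64, 3))
Y = X.sum(axis=1, keepdims=True)          # hypothetical toy regression target
params = (0.1 * rng.normal(size=(3, 8)), np.zeros(8),
          0.1 * rng.normal(size=(8, 1)), np.zeros(1))

for step in range(200):                   # a new mask is drawn at every step
    params, loss, _ = train_step(params, X, Y, keep_prob=0.8, lr=0.05, rng=rng)
print("final training loss on the last thinned network:", loss)
```

Note that a unit dropped for a given example receives no gradient from that example, so its incoming and outgoing weights are updated only by the examples in which it was active.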

Conceptually, the network is therefore trained under a continuously changing computational graph.

The learned parameters must work well not for one single fixed hidden configuration, but for many randomly perturbed configurations.

Shared parameters

A subtle but crucial point is that dropout does not train a completely different set of parameters for every mask. All these thinned networks share weights and biases. That weight sharing is what makes dropout computationally feasible.

Mental model

A useful mental model is: training with dropout means repeatedly training one shared network under many random partial failures. The network is therefore pushed to distribute information more robustly across its hidden representation.

3. Test-time inference

Test-time rule

At inference time, one does not sample dropout masks. The model is evaluated as a deterministic network.

This creates an immediate question: during training, each unit is active only with probability $p$, while at test time all units are present.

Without correction, the activations would be systematically larger at inference than during training.

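For the classical formulation

$$\tilde h^{(l)} = m^{(l)} \odot h^{(l)},$$

one compensates by scaling activations by $p$ at test time, or equivalently by scaling the outgoing weights by $p$.

Why does this make sense? Because, for fixed activations $h^{(l)}$,

$$\mathbb{E}\big[\tilde h^{(l)}\big] = \mathbb{E}\big[m^{(l)}\big] \odot h^{(l)} = p \, h^{(l)}.$$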

So the deterministic test-time scaling aligns the expected activation level seen during training with the one used during inference.
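For a small layer, the expectation argument can be checked exactly by enumerating every mask. The sketch below assumes independent mask entries with a common keep probability; the function name `expected_masked_activation` and the numerical values are hypothetical.

```python
import itertools
import numpy as np

def expected_masked_activation(h, keep_prob):
    """Exact E[m * h] over all 2^n binary masks with i.i.d. Bernoulli(keep_prob) entries."""
    n = h.shape[0]
    total = np.zeros(n, dtype=np.float64)
    for bits in itertools.product([0, 1], repeat=n):
        m = np.array(bits, dtype=np.float64)
        kept = int(m.sum())
        # Probability of this particular mask under independent sampling.
        prob = keep_prob ** kept * (1.0 - keep_prob) ** (n - kept)
        total += prob * (m * h)
    return total

h = np.array([0.5, -1.2, 3.0, 0.7])       # hypothetical activations
p = 0.8                                    # keep probability

print("E[m * h]:", expected_masked_activation(h, p))
print("p * h:   ", p * h)
```

The two printed vectors agree up to floating-point error, which is the reason scaling by the keep probability at test time restores the training-time activation level.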

Inverted dropout

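Modern libraries usually implement inverted dropout instead:

$$\tilde h^{(l)} = \frac{1}{p} \, m^{(l)} \odot h^{(l)}.$$

In that convention,

$$\mathbb{E}\big[\tilde h^{(l)}\big] = h^{(l)},$$

so no additional scaling is needed at test time.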

This is the most common implementation in practice.
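A minimal sketch of inverted dropout with a training/inference switch is given below. The function name `inverted_dropout` and its `training` flag are illustrative assumptions rather than any particular library's API.

```python
import numpy as np

def inverted_dropout(h, keep_prob, training, rng=None):
    """Inverted dropout: rescale by 1/keep_prob during training, identity at inference."""
    if not training:
        return h                          # deterministic network, no scaling needed
    if rng is None:
        rng = np.random.default_rng()
    mask = (rng.random(h.shape) < keep_prob).astype(h.dtype)
    return mask * h / keep_prob           # kept units are scaled up

rng = np.random.default_rng(0)
h = np.ones(100_000)                      # hypothetical activations, all equal to 1

train_out = inverted_dropout(h, keep_prob=0.8, training=True, rng=rng)
eval_out = inverted_dropout(h, keep_prob=0.8, training=False)

print("mean activation during training:", train_out.mean())
print("mean activation at inference:   ", eval_out.mean())
```

The training-time mean is close to the inference-time mean, even though roughly one fifth of the entries are zero during training.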

Why expectation matching is not the whole story

The expectation argument is exact only up to the next affine transformation.

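Suppose the masked activations are fed into the next layer through

$$z^{(l+1)} = W^{(l+1)} \tilde h^{(l)} + b^{(l+1)}.$$

Then expectation passes through this affine map:

$$\mathbb{E}\big[z^{(l+1)}\big] = W^{(l+1)} \, \mathbb{E}\big[\tilde h^{(l)}\big] + b^{(l+1)}.$$

So if $\mathbb{E}[m^{(l)}] = p \, \mathbf{1}$, replacing the random mask by its mean really does give the correct expected pre-activation at the next layer.

The difficulty starts after the nonlinearity:

$$h^{(l+1)} = \phi\big(z^{(l+1)}\big).$$

For a generic nonlinear activation $\phi$, one has in general

$$\mathbb{E}\big[\phi(z^{(l+1)})\big] \neq \phi\big(\mathbb{E}[z^{(l+1)}]\big).$$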

So the deterministic scaled network need not equal the exact average prediction of all dropout subnetworks once further nonlinear layers are involved.

This is the key point: dropout scaling matches the average behavior exactly at the affine level, but in a deep nonlinear network the full test-time model is usually only an approximation to exact ensemble averaging.
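The gap between the two sides can be made concrete with a two-unit example, computed exactly by enumerating all four masks. The weights, activations, and the choice of ReLU are hypothetical and chosen only to make the arithmetic easy to follow.

```python
import itertools
import numpy as np

def relu(z):
    return np.maximum(z, 0.0)

def mask_expectations(h, W, b, keep_prob):
    """Return (E[z], E[relu(z)]) over all masks, where z = W (m * h) + b."""
    n = h.shape[0]
    mean_z = np.zeros(W.shape[0])
    mean_relu = np.zeros(W.shape[0])
    for bits in itertools.product([0, 1], repeat=n):
        m = np.array(bits, dtype=np.float64)
        kept = int(m.sum())
        prob = keep_prob ** kept * (1.0 - keep_prob) ** (n - kept)
        z = W @ (m * h) + b
        mean_z += prob * z
        mean_relu += prob * relu(z)
    return mean_z, mean_relu

h = np.array([1.0, 1.0])                  # two hidden activations
W = np.array([[1.0, -1.0]])               # one next-layer unit
b = np.array([0.0])

mean_z, mean_relu = mask_expectations(h, W, b, keep_prob=0.5)
print("relu(E[z]):", relu(mean_z))        # what the deterministic network computes
print("E[relu(z)]:", mean_relu)           # the true average over subnetworks
```

Here the four equally likely masks give pre-activations 0, 1, -1, and 0, so the expected pre-activation is 0 and the deterministic network outputs 0, whereas the true average of the ReLU outputs is 0.25.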

4. The magic behind dropout

Why should dropout help?

Why should randomly deleting part of the network during training improve generalization instead of simply making optimization harder?

The answer is best understood from two complementary viewpoints:

- dropout behaves like a computationally cheap form of ensemble averaging over a huge family of subnetworks;

- dropout discourages fragile co-adaptations between units and forces the network to learn features that remain useful under random perturbations.

Both viewpoints matter, but the ensemble perspective is the deeper one.

4.1. Dropout as approximate ensemble averaging

Each sampled mask defines a thinned network, denoted by

$$f(x; \theta, m),$$

where $\theta$ is the collection of shared parameters.

In this high-level discussion, the layer superscript is suppressed and $m$ denotes the sampled dropout pattern that defines the current thinned subnetwork.

If a network has $n$ droppable units, then, in principle, there are $2^n$ possible masks and therefore exponentially many possible thinned subnetworks.

This is the source of the famous statement that dropout is related to an ensemble of exponentially many networks.
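To get a feel for the growth, the masks of a very small layer can be listed explicitly, while for a realistic layer only the count is tractable. The helper names `all_masks` and `num_masks` are hypothetical.

```python
import itertools

def all_masks(n):
    """Enumerate every binary mask over n droppable units (feasible only for tiny n)."""
    return list(itertools.product([0, 1], repeat=n))

def num_masks(n):
    """Number of distinct masks, without enumerating them."""
    return 2 ** n

for mask in all_masks(3):
    print(mask)

print("masks for 3 units:   ", num_masks(3))
print("masks for 1000 units has", len(str(num_masks(1000))), "decimal digits")
```

Already at a thousand droppable units the count has hundreds of digits, so any procedure that visits each subnetwork individually is out of the question.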

However, this statement needs to be interpreted carefully.

An explicit ensemble would require:

- training each network separately,

- storing a separate parameter set for each network,

- averaging their predictions at inference.

Dropout does not do that.

Instead, dropout trains a single parameterized system whose many subnetworks all share weights.

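At every optimization step, SGD samples one mask and therefore one thinned network, producing a stochastic estimate of the objective

$$J(\theta) = \mathbb{E}_{(x, y)} \, \mathbb{E}_{m} \Big[ \mathcal{L}\big(f(x; \theta, m), \, y\big) \Big],$$

where $\mathcal{L}$ is the per-example loss and $f(x; \theta, m)$ is the thinned network selected by the mask $m$.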

In plain words, the same shared parameter set is trained to perform well on average over the mask distribution, that is, across many randomly sampled subnetworks rather than for one fixed network realization.

What is ensemble-like, exactly?

The ensemble flavor comes from the fact that many different subnetworks contribute to learning and that the final predictor aims to reflect their collective behavior.

The mechanism is not classical bagging:

- the subnetworks are not independent;

- they are trained on the same data distribution;

- and, most importantly, they share parameters.

Why this matters

This is the real reason dropout is powerful, and weight sharing is the decisive idea: it lets dropout capture part of the variance-reduction benefit of model averaging without paying the prohibitive cost of training and storing a large collection of separate models.

4.2. What happens at inference: exact statement vs. approximation

Each dropout mask specifies which units are kept and which are dropped.

Once the mask $m$ is fixed, the original network turns into one specific thinned subnetwork, which produces its own predictive distribution

$$P(y \mid x; \theta, m).$$

What is a predictive distribution?

A predictive distribution is the vector of probabilities that the model assigns to the possible output classes for the input $x$.

For example, in a 3-class problem, a prediction such as

$$(0.7, \; 0.2, \; 0.1)$$

means:

- probability $0.7$ for class 1,

- probability $0.2$ for class 2,

- probability $0.1$ for class 3.

These numbers are nonnegative and sum to $1$, so they form a probability distribution over the classes.

What does "the average prediction of all dropout subnetworks" mean?

This expression refers to the following ideal thought experiment: for the same input $x$, evaluate the prediction of every subnetwork generated by every possible mask $m$, and then combine all those predictions according to the probability of sampling each mask.

If the ensemble interpretation were taken literally, the procedure would be:

- enumerate all possible masks $m$;

- run the corresponding subnetwork for each mask;

- combine all the resulting predictive distributions.

That would be a genuine ensemble over all subnetworks induced by dropout.

Here the word average is being used informally to mean the collective prediction obtained from all subnetworks. It does not automatically mean a simple arithmetic average of class probabilities. Indeed, in the exact special case discussed below, the correct combination turns out to be a normalized geometric mean.

Why the literal ensemble is not used at test time

The literal ensemble view is computationally hopeless in realistic networks. If there are many droppable units, the number of possible masks grows exponentially. So test-time dropout does not explicitly evaluate and combine all subnetworks.

Instead, it uses a single deterministic mean network:

- in the classical presentation, one keeps all units and scales activations or outgoing weights by the keep probability;

- in inverted dropout, the scaling is already done during training, so the test-time network is simply the full deterministic network.

What is the test-time network actually computing?

This is the central mathematical question behind the ensemble interpretation of dropout.

- Exact special case. If, after dropout, the masked representation is sent directly to one final linear classifier followed by a softmax, then the deterministic mean network is not merely heuristic: it computes exactly the normalized geometric mean of the predictive distributions of all thinned subnetworks.

- General deep nonlinear case. If additional nonlinear transformations appear after the dropped units, this exact identity no longer holds. In that setting, the deterministic network should be understood as a tractable approximation to full model averaging, not as an exact equality.

What is meant by "linear classifier followed by a softmax"?

This expression refers to the following situation: after the dropped hidden representation, the network does not apply another hidden nonlinear block. Instead, it performs only:

- a linear or affine transformation $z = W \tilde h + b$ that produces the logits,

- followed by a softmax that converts the logits into class probabilities.

So this is the cleanest possible output stage: a final score computation, and then softmax.

That special simplicity is exactly why the geometric-mean result can be proved there in closed form.

Why does a normalized geometric mean appear here?

For positive numbers $a_1, \dots, a_N$, the arithmetic mean is

$$\frac{1}{N} \sum_{i=1}^{N} a_i,$$

whereas the geometric mean is

$$\Big( \prod_{i=1}^{N} a_i \Big)^{1/N}.$$

In the dropout setting, each thinned subnetwork assigns a probability $P(y = k \mid x; m)$ to class $k$. In the exact special case above, these probabilities are combined multiplicatively, not additively. That is why the relevant object is a geometric mean rather than an arithmetic mean.

This is why the paper speaks of a normalized geometric mean: one first combines the subnetwork probabilities multiplicatively, then renormalizes across classes so that the final outputs still form a proper probability distribution.

Intuitively, this favors classes that are supported consistently across many subnetworks, not classes that receive high probability from only a small minority of them.
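The difference between the two ways of combining predictions can be illustrated on three hand-picked predictive distributions. The numbers are hypothetical and do not come from a trained network; they are chosen so that class 1 is backed strongly by a single subnetwork only. The subnetworks are weighted equally here for simplicity.

```python
import numpy as np

def arithmetic_mean(dists):
    """Average the class probabilities directly."""
    return np.mean(dists, axis=0)

def normalized_geometric_mean(dists):
    """Average in log-space, exponentiate, then renormalize over the classes."""
    log_gm = np.mean(np.log(dists), axis=0)
    unnormalized = np.exp(log_gm - log_gm.max())   # shift for numerical stability
    return unnormalized / unnormalized.sum()

# Rows: subnetworks. Columns: classes 1, 2, 3 (hypothetical values).
dists = np.array([
    [0.94, 0.03, 0.03],   # one subnetwork is very confident in class 1
    [0.05, 0.50, 0.45],   # the other two give class 1 little support
    [0.05, 0.45, 0.50],
])

am = arithmetic_mean(dists)
gm = normalized_geometric_mean(dists)
print("arithmetic mean:          ", am)
print("normalized geometric mean:", gm)
```

In this example the arithmetic mean ranks class 1 first, while the normalized geometric mean ranks it last, because two of the three subnetworks assign it a low probability.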

Key takeaway

This point is easy to misunderstand: dropout is ensemble-like in general, but the clean exact formula belongs only to a restricted architectural setting. Outside that setting, the ensemble interpretation remains conceptually useful, but mathematically approximate.

(Optional deep dive) Exact geometric-mean result for a softmax output layer

The three steps below prove the special-case statement above formally.

(Optional deep dive) Step 1: setup and notation

Assume dropout is applied to a hidden activation vector $h \in \mathbb{R}^{d}$, and that the output layer is a softmax fed by the affine map

$$z(m) = W (m \odot h) + b.$$

For class $k$, the masked subnetwork predicts

$$P(y = k \mid x; m) = \frac{\exp\big(z_k(m)\big)}{\sum_{k'} \exp\big(z_{k'}(m)\big)}.$$

The deterministic test-time network can now be compared with the normalized geometric mean of these predictive distributions over the mask distribution $P(m)$.

(Optional deep dive) Step 2: the geometric mean becomes an average in log-space

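Define

$$P_{\text{ens}}(y = k \mid x) = \frac{1}{Z} \prod_{m} P(y = k \mid x; m)^{P(m)} = \frac{1}{Z} \exp\Big( \mathbb{E}_{m} \big[ \log P(y = k \mid x; m) \big] \Big),$$

where $Z$ normalizes over the classes.

Taking logarithms yields

$$\log P_{\text{ens}}(y = k \mid x) = \mathbb{E}_{m} \big[ \log P(y = k \mid x; m) \big] - \log Z.$$

Substituting the softmax formula, with $z_k(m)$ the class-$k$ logit of the subnetwork selected by $m$, gives

$$\log P_{\text{ens}}(y = k \mid x) = \mathbb{E}_{m} \Big[ z_k(m) - \log \sum_{k'} \exp\big(z_{k'}(m)\big) \Big] - \log Z.$$

Now the key observation: the second term inside the brackets depends on $x$ and on the mask, but not on the class index $k$. After renormalization over $k$, it is absorbed into a common additive constant in log-space. Therefore,

$$\log P_{\text{ens}}(y = k \mid x) = \mathbb{E}_{m} \big[ z_k(m) \big] + C(x),$$

where $C(x)$ does not depend on $k$, and hence

$$P_{\text{ens}}(y = k \mid x) = \frac{\exp\big(\mathbb{E}_{m}[z_k(m)]\big)}{\sum_{k'} \exp\big(\mathbb{E}_{m}[z_{k'}(m)]\big)}.$$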

(Optional deep dive) Step 3: affine logits turn the mask average into deterministic scaling

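Because the logits are affine in the masked activations,

$$z_k(m) = w_k^\top (m \odot h) + b_k,$$

where $w_k^\top$ is the $k$-th row of $W$.

Using linearity of expectation,

$$\mathbb{E}_{m} \big[ z_k(m) \big] = w_k^\top \big( \mathbb{E}_{m}[m] \odot h \big) + b_k.$$

Define the keep-probability vector

$$\bar m = \mathbb{E}_{m}[m] = (p_1, \dots, p_d)^\top.$$

Then

$$\mathbb{E}_{m} \big[ z_k(m) \big] = w_k^\top (\bar m \odot h) + b_k,$$

so after normalization over $k$,

$$P_{\text{ens}}(y = k \mid x) = \frac{\exp\big(w_k^\top (\bar m \odot h) + b_k\big)}{\sum_{k'} \exp\big(w_{k'}^\top (\bar m \odot h) + b_{k'}\big)}.$$

Now define the deterministic test-time logits by replacing the random mask with its mean $\bar m$:

$$\bar z_k = w_k^\top (\bar m \odot h) + b_k.$$

The corresponding softmax prediction is

$$P_{\text{test}}(y = k \mid x) = \frac{\exp(\bar z_k)}{\sum_{k'} \exp(\bar z_{k'})}.$$

But by the definition of $\bar m$,

$$\bar z_k = \mathbb{E}_{m} \big[ z_k(m) \big],$$

so the numerator and denominator of $P_{\text{test}}$ coincide exactly with those of $P_{\text{ens}}$. Therefore,

$$P_{\text{test}}(y = k \mid x) = P_{\text{ens}}(y = k \mid x).$$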

Conclusion. When dropout acts on a representation that connects directly through an affine map to a softmax layer, the deterministic scaled network computes exactly the normalized geometric mean of the predictive distributions of the exponentially many thinned subnetworks.
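The identity can also be checked numerically on a small layer by enumerating every mask. The sketch below assumes a common keep probability for all units and uses randomly generated, hypothetical values for the activations, weights, and biases; the function names are illustrative.

```python
import itertools
import numpy as np

def log_softmax(z):
    """Numerically stable log of the softmax."""
    shifted = z - z.max()
    return shifted - np.log(np.sum(np.exp(shifted)))

def softmax(z):
    return np.exp(log_softmax(z))

def geometric_mean_ensemble(h, W, b, keep_prob):
    """Normalized geometric mean of softmax(W (m * h) + b), weighted by the mask probability."""
    n = h.shape[0]
    expected_log_prob = np.zeros(W.shape[0])
    for bits in itertools.product([0, 1], repeat=n):
        m = np.array(bits, dtype=np.float64)
        kept = int(m.sum())
        prob = keep_prob ** kept * (1.0 - keep_prob) ** (n - kept)
        expected_log_prob += prob * log_softmax(W @ (m * h) + b)
    # Exponentiate and renormalize over the classes.
    return softmax(expected_log_prob)

def mean_network(h, W, b, keep_prob):
    """Deterministic test-time network: the mask is replaced by its mean."""
    return softmax(W @ (keep_prob * h) + b)

rng = np.random.default_rng(0)
h = rng.normal(size=6)                    # 6 droppable units, hence 64 masks
W = rng.normal(size=(3, 6))               # 3 output classes
b = rng.normal(size=3)

print("geometric-mean ensemble:", geometric_mean_ensemble(h, W, b, keep_prob=0.7))
print("mean network:           ", mean_network(h, W, b, keep_prob=0.7))
```

The two printed distributions match up to floating-point error, even though the first requires a pass through all 64 subnetworks and the second a single forward pass.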

This exact identity fails once further nonlinear transformations are inserted after the dropped units, which is why the ensemble interpretation becomes only approximate in deeper nonlinear architectures.

This is why the claim “dropout is just averaging many networks” is directionally correct but mathematically incomplete.

It is more precise to say:

Precise ensemble interpretation

Dropout trains a weight-sharing family of thinned networks and, at test time, replaces the intractable full model average with a deterministic approximation obtained by scaling activations or weights appropriately.

4.3. Why the geometric-mean viewpoint is interesting

The geometric mean interpretation is not a cosmetic detail. It reveals that the deterministic network is not simply “voting” like a naive arithmetic average.

If many subnetworks assign very low probability to a class, the geometric mean suppresses that class strongly.

So the final predictor favors outputs that are consistently supported across many subnetworks, not merely rescued by a few highly confident ones.

This helps explain why dropout often yields predictions that are less tied to brittle, overly specialized internal feature combinations.

4.4. Dropout vs. bagging

It is useful to compare dropout with classical ensemble methods such as bagging.

- Bagging trains many independent models, often on bootstrap-resampled datasets, and averages their predictions.

- Dropout trains many implicitly defined subnetworks inside one shared model and averages them only approximately.

- Bagging spends memory and computation on model independence.

- Dropout gives up independence, but gains enormous efficiency through parameter sharing.

So dropout should be viewed as an ensemble-inspired regularizer, not as a literal replacement for a fully trained ensemble.

5. A second viewpoint: preventing co-adaptation

The original motivation behind dropout was also expressed in terms of preventing complex co-adaptations among feature detectors.

Without dropout, a hidden unit may become useful only because several specific partner units are also present and tuned in a highly specialized way.

This can produce brittle internal representations: the network performs well on the training set, but relies on delicate feature interactions that do not generalize.

With dropout, a unit cannot safely rely on the presence of particular companions, because those companions may be absent at the next step.

Therefore, the unit is pressured to learn features that remain useful across many random contexts.

Core regularizing effect

This is one of the core regularizing effects of dropout: it discourages a representation in which correctness depends on a narrow, fragile coalition of hidden units.

This viewpoint also clarifies the conceptual difference from L2 regularization.

- L2 regularization directly discourages large weights.

- Dropout directly discourages brittle dependence on specific hidden pathways.

Both can improve generalization, but they do so through different mechanisms.

6. Practical impact

Dropout has been historically influential. The JMLR paper by Srivastava et al. listed under the primary sources reported strong improvements across a wide range of tasks, including:

- handwritten digit recognition,

- speech recognition,

- image classification,

- and other high-capacity neural-network settings where overfitting was severe.

Even when the absolute numerical gain on a benchmark appears modest, that gain can be extremely meaningful when it is achieved by a regularizer that is simple, architecture-agnostic, and broadly applicable.

When dropout is especially natural

Dropout is especially natural when a model has enough capacity to memorize the training set and one wants a simple way to inject stochastic robustness directly into the hidden representation.

Practical takeaway

Dropout is most compelling when model capacity is high, overfitting is a real risk, and one wants a regularizer that acts directly on the learned representation rather than only through an explicit penalty on the weights.

For these reasons, dropout became one of the canonical regularization techniques in deep learning.

7. Primary sources

- Geoffrey E. Hinton, Nitish Srivastava, Alex Krizhevsky, Ilya Sutskever, and Ruslan R. Salakhutdinov, Improving neural networks by preventing co-adaptation of feature detectors, arXiv:1207.0580, 2012

- Nitish Srivastava, Geoffrey Hinton, Alex Krizhevsky, Ilya Sutskever, and Ruslan Salakhutdinov, Dropout: A Simple Way to Prevent Neural Networks from Overfitting, Journal of Machine Learning Research 15, 2014

- Ian Goodfellow, Yoshua Bengio, and Aaron Courville, Deep Learning, MIT Press, 2016