1. Intro

Momentum is one of the most important extensions of plain gradient descent.

Its central purpose is simple:

- preserve part of the previous update direction;

- reduce wasteful oscillations in steep directions;

- accelerate progress when gradients remain consistently aligned across iterations.

Historically, the method is closely associated with Polyak’s heavy-ball method.

The underlying idea is that parameter updates should not be chosen only from the gradient observed at the current point, but should also retain a controlled amount of inertia from the recent past.

Important

Plain gradient descent is memoryless: each step depends only on the current gradient.

Momentum is not memoryless: each step depends on both the current gradient and the previous update history.

This single modification changes the optimization dynamics profoundly.

Scope of the theory

Momentum is often discussed together with Nesterov acceleration, but the two methods should not be conflated.

- classical momentum introduces inertia;

- Nesterov momentum introduces inertia plus a look-ahead correction.

Strong theoretical results do exist for classical momentum, especially on quadratics and in certain strongly convex regimes. However, unlike Nesterov’s classical convex-rate statement, the theory of classical momentum is more delicate and more problem-dependent. Its practical importance in deep learning is therefore best understood first through geometry and dynamics.

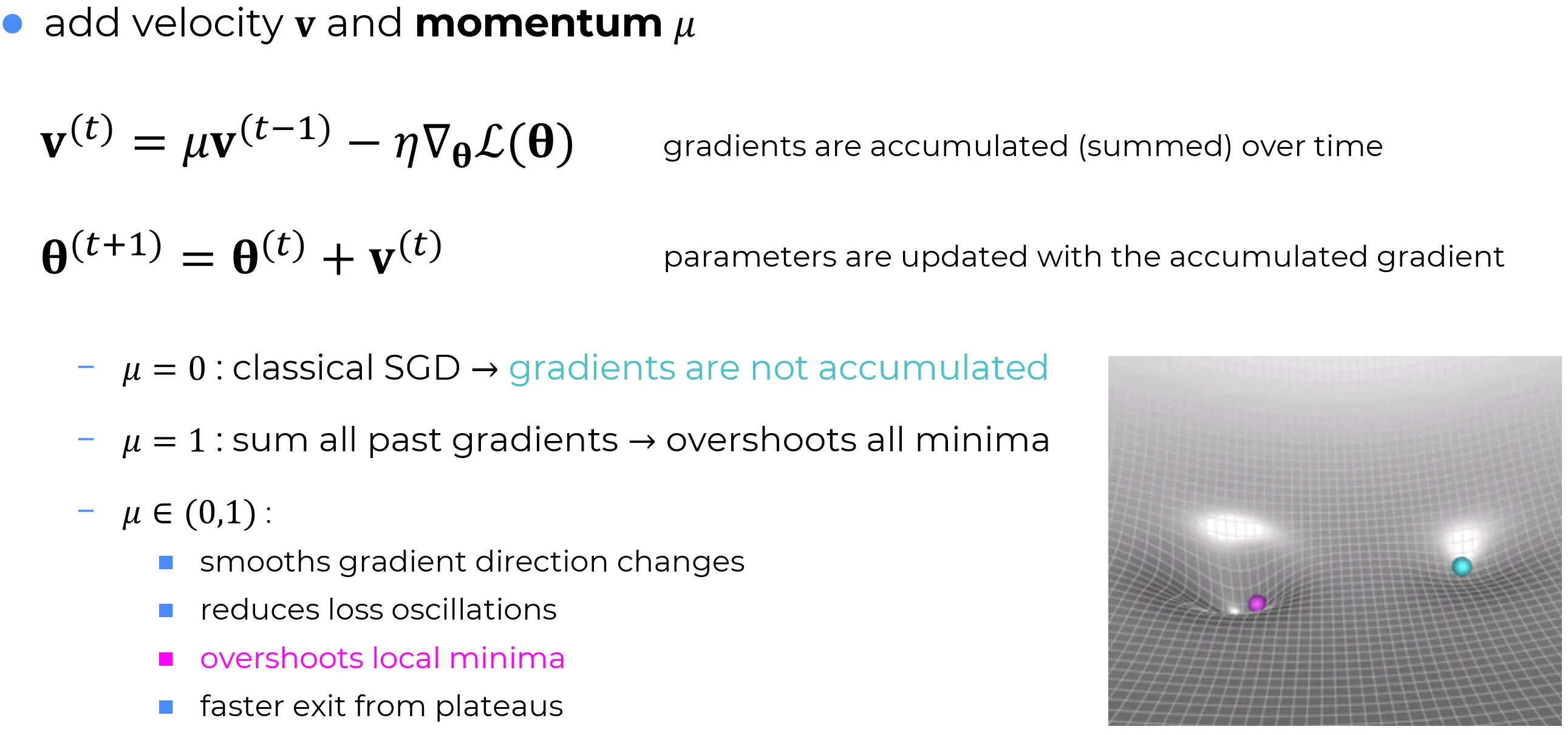

2. Momentum equations

Notation:

- : parameters at iteration ;

- : velocity / update vector;

- : learning rate;

- : momentum coefficient;

- : gradient at the current parameters.

The classical momentum update is

Thus, the new update is composed of:

- an inertial carry-over term ;

- a fresh gradient correction term .

It is useful to compare this directly with plain gradient descent:

| Method | Update rule | Memory of previous steps? | Gradient evaluation point |

|---|---|---|---|

| Gradient descent | No | current point | |

| Momentum | | Yes | current point |

Why the sign looks different from GD

The parameter update is written as

This does not mean that the method moves upward. The descent direction is already built into through the term

3. What inertia really means

Momentum is easiest to understand by unrolling the velocity recursion.

Starting from

repeated substitution gives

With the standard initialization ,

So the velocity is an exponentially weighted accumulation of recent gradients.

This immediately reveals the role of :

- if is small, the optimizer reacts mostly to the current gradient;

- if is large, the optimizer remembers a longer history of past directions.

Momentum is a directional low-pass filter

Recent gradients are not treated equally: the newest ones matter most, but older ones still contribute with weights . This tends to smooth high-frequency directional noise while preserving persistent directional trends.

This is why momentum is often helpful in stochastic training: mini-batch gradients can fluctuate strongly from step to step, while the velocity keeps a more stable aggregate direction.

4. Equivalent parameter-only form

Because

it follows that

Substituting this into the momentum update gives

This form is extremely informative.

It shows that momentum is plain gradient descent plus an additional displacement in the direction of the most recent motion.

Why the name "momentum" is reasonable

The term

carries part of the previous motion into the next step. This is why the method resembles an inertial process: the optimizer does not instantly forget where it was moving.

The analogy with mechanics is useful, but it should not be taken literally.

Momentum is not a faithful physical simulation of a rolling object.

It is an optimization rule deliberately designed to exploit directional persistence.

5. Geometric interpretation in ravines

General loss-landscape geometry, including saddle points and Hessian sign structure, is discussed in gradient descent.

The present section focuses on the locally convex ravine geometry that is most relevant for understanding why momentum helps.

Deep-learning loss landscapes are often highly anisotropic. In a local valley, curvature can be much steeper in one direction than in another.

In the locally convex valley subspace, the Hessian eigenvalues can differ by orders of magnitude:

This is the hallmark of an anisotropic valley or ravine.

If plain gradient descent is used in such a valley:

- gradients across the steep direction tend to alternate sign from one step to the next;

- gradients along the shallow direction tend to remain more consistently aligned.

This produces the familiar zig-zag behavior: progress across the valley is repeatedly reversed, while progress along the valley floor remains slow.

Momentum changes this picture in two simultaneous ways:

- in oscillatory steep directions, successive gradient contributions partially cancel inside the velocity accumulation;

- in persistent shallow directions, successive gradient contributions reinforce one another.

This is the practical heart of the method.

Important

Momentum does not magically remove curvature. What it does is reshape the trajectory so that repeated corrections in steep directions do less damage, while consistent descent directions accumulate more effectively.

6. One-dimensional quadratic model

The cleanest exact analysis is obtained from the scalar quadratic model

Then

and momentum becomes

Combining these two recursions gives the linear dynamical system

Its characteristic polynomial is

This already reveals several important facts.

Stability reading

Local asymptotic stability of this linear recursion requires both roots of the characteristic polynomial to lie inside the unit disk. The polynomial shows that:

- directly controls how much inertial memory is retained;

- couples learning rate with curvature;

- instability emerges when inertia and curvature-induced correction are too aggressive together.

This is the precise algebraic version of the usual practical warning: high momentum and high learning rate must be tuned jointly, not independently.

What the quadratic model teaches

In the scalar quadratic case:

- reduces exactly to plain gradient descent;

- introduces a second-order inertial mode;

- larger means stronger persistence of past motion;

- large curvature and large learning rate increase the risk of oscillation or instability.

The model therefore explains both the strength and the danger of momentum:

- too little inertia gives slow progress;

- too much inertia can produce ringing, overshoot, or divergence.

This is why momentum must always be interpreted jointly with the learning rate.

7. Multidimensional Hessian interpretation

The one-dimensional quadratic picture extends exactly along Hessian eigendirections.

Consider the local quadratic model

with symmetric Hessian . Then

and the momentum update is

If

and the dynamics are expressed in the eigenbasis of , each eigendirection decouples into a scalar system of the same form as the one-dimensional quadratic model, with

So each curvature direction behaves like its own inertial mode:

- steep direction: large , high risk of oscillation;

- flat direction: small , slow plain-GD progress, where momentum is especially useful.

Key geometric takeaway

Classical momentum uses the same inertial coefficient in every direction. Curvature enters only through the gradient term .

This is exactly why the method is powerful but imperfect: it introduces useful inertia, but it does not directly modulate inherited velocity by local curvature.

This observation is the natural bridge to Nesterov momentum. Nesterov keeps the same inertial idea, but evaluates the gradient at a look-ahead point, producing a more anticipatory correction.

8. Why momentum often helps in stochastic training

Note

In mini-batch stochastic gradient descent training, gradients are noisy. The gradient at iteration is not only a function of the local landscape, but also of sampling noise induced by the current batch.

Benefits of momentum

Momentum helps because the velocity integrates recent gradients over time:

- transient fluctuations are smoothed;

- consistent descent directions are amplified;

- optimization becomes less myopically tied to the current mini-batch.

This is one of the main reasons momentum remains so effective in deep learning even though its classical deterministic theory does not transfer verbatim to nonconvex stochastic training.

What momentum does not guarantee

Momentum can improve training dynamics dramatically, but it does not eliminate:

- sensitivity to the learning rate;

- bad conditioning;

- saddle geometry;

- instability under overly aggressive hyperparameters.

In practice, the pair must be tuned jointly. A large with a learning rate that is already near the stability boundary can be destabilizing.

9. Classical momentum versus Nesterov momentum

Since the next note studies Nesterov momentum in depth, it is useful to make the distinction precise already here.

| Component | Classical momentum | Nesterov momentum |

|---|---|---|

| Velocity update | ||

| Gradient evaluation point | current point | look-ahead point |

| Core behavior | inertial smoothing | inertial smoothing plus anticipatory correction |

Note

Classical momentum reacts to the slope at the current parameters. Nesterov momentum reacts to the slope at the anticipated future position. The present note therefore provides the base dynamics that the Nesterov note will refine.

10. Practical PyTorch recipe

import torch

optimizer = torch.optim.SGD(

model.parameters(),

lr=0.1,

momentum=0.9,

nesterov=False,

weight_decay=1e-4,

)Implementation note

In framework code, the internal momentum state may be stored as a buffer that accumulates gradient history. Depending on notation conventions, this buffer may be written either as a velocity-like quantity or as an unscaled accumulator. The mathematical idea is the same: a memory-bearing state is carried from one iteration to the next.

11. Final summary

Momentum should be remembered through one central idea:

Important

Momentum augments gradient descent with controlled inertia.

This inertia matters because it:

- accumulates persistent descent directions;

- reduces wasteful zig-zag motion in anisotropic valleys;

- smooths stochastic gradient fluctuations;

- often yields substantially faster practical optimization than plain gradient descent.

At the same time, momentum is not a free lunch. Its benefits come from altered dynamics, and altered dynamics can become unstable if learning rate and inertia are misaligned.

The correct mental model is therefore not “gradient descent with a small trick,” but rather:

- gradient descent: first-order, memoryless descent;

- momentum: first-order descent with an inertial state variable.

That inertial state is the conceptual foundation on top of which Nesterov momentum is built.

12. Primary references

- Boris T. Polyak, Some methods of speeding up the convergence of iteration methods (1964)

- Ilya Sutskever, James Martens, George Dahl, Geoffrey Hinton, On the importance of initialization and momentum in deep learning

- PyTorch documentation,

torch.optim.SGD: https://pytorch.org/docs/stable/generated/torch.optim.SGD.html - Ian Goodfellow, Yoshua Bengio, Aaron Courville, Deep Learning