Why this method is historically important

Nesterov momentum matters for two distinct reasons:

- practical dynamics: improved trajectory control compared with classical momentum;

- theory: accelerated convergence in smooth convex optimization.

For smooth convex objectives:

- gradient descent has rate ;

- Nesterov acceleration reaches .

This rate improvement is the historical core of Nesterov’s contribution.

Convex-function assumptions used below (notation-aligned)

The classical assumptions are:

- Convexity:

- -strong convexity, when needed:

- -smoothness / Lipschitz gradient:

Why for GD and for Nesterov? (compact sketch)

Consider convex and -smooth , with gradient descent:

By smoothness:

By convexity at :

Expanding the distance recursion:

Combining and telescoping gives:

hence .

For Nesterov acceleration, estimate-sequence / potential arguments yield:

hence .

Scope of the rate claim

The statement belongs to deterministic smooth convex optimization. Deep-learning training is usually stochastic and nonconvex, so this theorem is not transferred directly.

2. NAG equations

Notation:

- : parameters at iteration ;

- : velocity/update vector;

- : learning rate;

- : momentum coefficient.

What inertia means in momentum methods

Inertia is persistence of update direction across iterations. In

the term carries part of the previous update into the current step.

Unrolling the recurrence:

With , velocity is an exponentially weighted accumulation of recent gradients.

- larger : longer memory and stronger inertial carry-over;

- smaller : shorter memory and more reactive updates.

NAG keeps the same inertial mechanism; the difference is where the gradient is evaluated (look-ahead point instead of current point).

| Component | Classical momentum | Nesterov momentum |

|---|---|---|

| Velocity update | ||

| Parameter update | ||

| Gradient evaluation point | ||

| Intuition | reactive correction at current location | anticipatory correction at look-ahead location |

Equivalent predictor-corrector form

Define

Then NAG can be written as:

which makes the predict-then-correct mechanism explicit.

Concise intuition: classical momentum reacts after displacement, while Nesterov momentum evaluates the slope at the anticipated position and corrects during displacement formation.

Why this can help even in nonconvex stochastic training

Strict convex acceleration guarantees may not apply. Nevertheless, earlier directional correction often improves practical stability:

- less delay between inertial drift and gradient feedback;

- better damping in stiff directions;

- cleaner behavior under aggressive learning-rate schedules.

3. Geometric refinement over classical momentum

A full explanation of Nesterov look-ahead behavior requires moving beyond the gradient and considering local curvature through the Hessian matrix .

General loss-landscape geometry, including saddle points, is discussed in gradient descent.

The ravine geometry in which inertia becomes especially useful is developed in momentum.

The present note isolates what is specific to Nesterov momentum. Once the optimization trajectory is already understood as moving through an anisotropic valley, NAG modifies the dynamics by evaluating the gradient at a look-ahead point rather than at the current parameters.

This means that curvature influences the correction earlier in the step construction. The practical effect is often a shorter cross-valley excursion and a more anticipatory damping of inertial overshoot than in classical momentum.

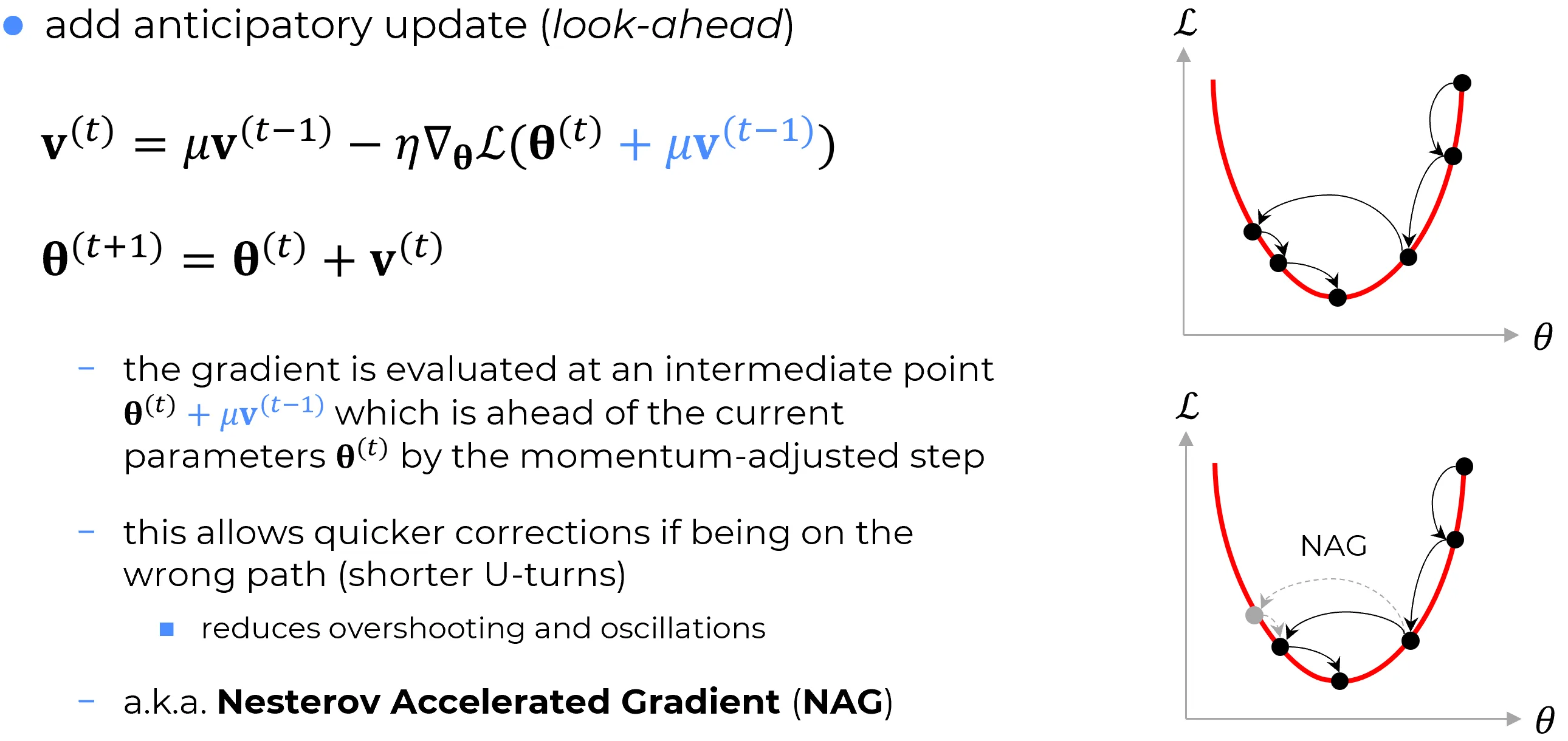

How to read the figure

The upper panel illustrates a longer oscillatory trajectory associated with classical momentum. The lower panel illustrates NAG: the gray look-ahead marker indicates where the corrective gradient is queried before the update is finalized. The visual message is geometric and dynamical, not merely algebraic notation.

4. 1D quadratic case: NAG damping made explicit

Section objective

The goal of this section is to isolate the NAG mechanism in the simplest setting where every step is explicit:

- show exactly where curvature enters the momentum channel;

- explain why steep curvature requires stronger damping;

- prepare the multidimensional interpretation, where each Hessian eigendirection behaves like this same scalar mode with .

Consider the one-dimensional quadratic model

Then , so NAG gives

Classical momentum is

So the structural difference is exact:

- classical momentum carries inertia with factor ;

- NAG carries inertia with factor .

Interpretation

- the inherited-velocity contribution is exactly the term ;

- if is small, then , so the multiplier stays close to and more of the previous velocity is preserved (more inertial memory);

- if is large, then moves away from and inherited velocity is attenuated more strongly (and may even flip sign when ).

Including the parameter update , the linear homogeneous dynamics can be written as

How to read the stability condition

This is a linear time-discrete dynamical system of the form with

Local asymptotic stability means that trajectories converge to zero, which for linear discrete systems is equivalent to all eigenvalues of lying inside the unit disk:

Here is the spectral radius, i.e. . The scalar inequality checks only the coefficient multiplying in the update, so it is an intuition cue, not a full stability test. Practical takeaway: when curvature increases, stable learning-rate range typically shrinks.

5. Extended Hessian analysis

The multidimensional picture is the exact extension of the 1D template above: each Hessian eigendirection behaves like a scalar mode with .

Assume is twice differentiable near , with local Hessian . Taylor expansion around gives:

Why is symmetric, and why eigenvalues are real

For , mixed second derivatives are continuous. By Schwarz-Clairaut:

hence , so is real symmetric. A real symmetric matrix has:

- real eigenvalues;

- an orthonormal eigenbasis.

This is exactly the hypothesis of the spectral theorem used below.

Taylor remainder (what is hidden by )

The first-order expansion of the gradient is

Here . So the approximation is accurate when the look-ahead displacement is locally small and Hessian variation over that displacement is limited.

Substituting into NAG:

Therefore:

The extra curvature-dependent term is:

It modulates inherited inertia before the update is finalized. This is the formal mechanism behind look-ahead damping.

Deep dive: eigen-direction decomposition (and why it is valid)

The decomposition

is not an arbitrary assumption. By the spectral theorem, every real symmetric matrix is orthogonally diagonalizable:

- has orthonormal eigenvectors;

- contains real eigenvalues.

Minimal proof sketch for real eigenvalues: if with , then

and the right-hand side is real because is real symmetric.

Any vector admits expansion on this orthonormal basis:

Applying the NAG attenuation operator:

Hence:

Interpretation of each factor:

- : inherited velocity component in eigendirection ;

- : direction-specific gain set by local curvature.

Components aligned with large positive (steep directions) are attenuated more strongly. This is the linear-algebraic origin of steep-direction damping.

Intuition after the algebra

Each eigendirection behaves like an independent one-dimensional mode. The factor acts as a mode-specific brake:

- steep mode ( large): aggressive braking;

- flat mode ( small): mild braking.

NAG therefore reshapes inertia by geometry, not by a single global scalar.

Deep dive: hardware reality (why is usually infeasible)

Newton’s method would use

For a model with about million parameters (ResNet-50 scale), the dense Hessian size is

In float32, storage alone is about

Direct inversion scales as , which is computationally prohibitive per training step. This is why deep learning relies on first-order optimizers. NAG is an engineering compromise: first-order cost with implicit second-order curvature behavior.

6. Conceptual look-ahead vs PyTorch implementation

The canonical NAG equation explicitly uses

torch.optim.SGD implements Nesterov through a momentum-buffer state computed from current-parameter gradients:

- (plus optional weight decay);

- buffer update

- Nesterov direction: ;

- parameter step: .

What the buffer actually stores

The buffer stores gradient history, not parameter history. With :

Expanding the first steps:

So each new buffer is a weighted sum of recent gradients, with geometric decay by powers of . This weighted accumulation is exactly what “momentum memory” means.

Where PyTorch computes the gradient in Nesterov mode

Canonical NAG writes

i.e. gradient at a shifted point. PyTorch does not run an explicit second forward/backward at that shifted point. It computes one gradient at current parameters,

then builds the Nesterov direction algebraically:

So the look-ahead effect is implemented through direction construction, not through an explicit extra gradient query.

7. Sutskever’s step notation and its relation to PyTorch

Historical note

A very influential deep-learning presentation of momentum is the one used in Sutskever, Martens, Dahl, and Hinton (2013), On the importance of initialization and momentum in deep learning. In that notation, the state variable is the parameter step itself, not an unscaled momentum buffer.

Since many references still use the Sutskever convention, without making that convention explicit, PyTorch formulas can look different even when they describe the same underlying dynamics.

Sutskever-style notation writes the state as the step applied to the parameters:

Interpretation:

- already includes learning-rate scaling;

- the parameter update is simply “subtract the step.”

PyTorch-style notation instead stores an unscaled momentum buffer:

Here is the same object denoted by in Section 6.

The real difference

The two formulas do not introduce two different optimizers. They define the internal state differently:

- Sutskever: state = already-scaled step;

- PyTorch: state = unscaled gradient accumulator.

If learning rate is constant, the mapping is immediate.

Define

Then

and

So under constant learning rate, the Sutskever formula is just the PyTorch formula written in a different state variable.

Why schedules break the exact equivalence

If learning rate changes over time, define . Then

The extra ratio means the momentum memory is rescaled when the schedule changes. This is why the two conventions can have different transient behavior under learning-rate schedules even if they coincide when learning rate is constant.

Nesterov variants follow the same logic.

Summary

The important point is not “which formula is correct,” but “what quantity the state variable is supposed to represent.”

8. Practical PyTorch recipe

import torch

optimizer = torch.optim.SGD(

model.parameters(),

lr=0.1,

momentum=0.9,

nesterov=True,

weight_decay=1e-4,

dampening=0.0,

)Note

In PyTorch,

nesterov=Truerequiresmomentum > 0anddampening = 0.

9. Final summary

Nesterov momentum is most robustly understood through three coordinated layers:

- conceptual: look-ahead gradient correction;

- geometric: curvature-shaped damping through ;

- engineering: efficient first-order implementation used in modern frameworks.

This explains why NAG remains relevant: it preserves first-order scalability while embedding useful curvature-aware behavior.

10. Primary references

- Yurii Nesterov, A method for solving the convex programming problem with convergence rate (1983)

- Ilya Sutskever, James Martens, George Dahl, Geoffrey Hinton, On the importance of initialization and momentum in deep learning

- PyTorch documentation,

torch.optim.SGD: https://pytorch.org/docs/stable/generated/torch.optim.SGD.html - Ian Goodfellow, Yoshua Bengio, Aaron Courville, Deep Learning