From Perceptrons to Long-Term Memory

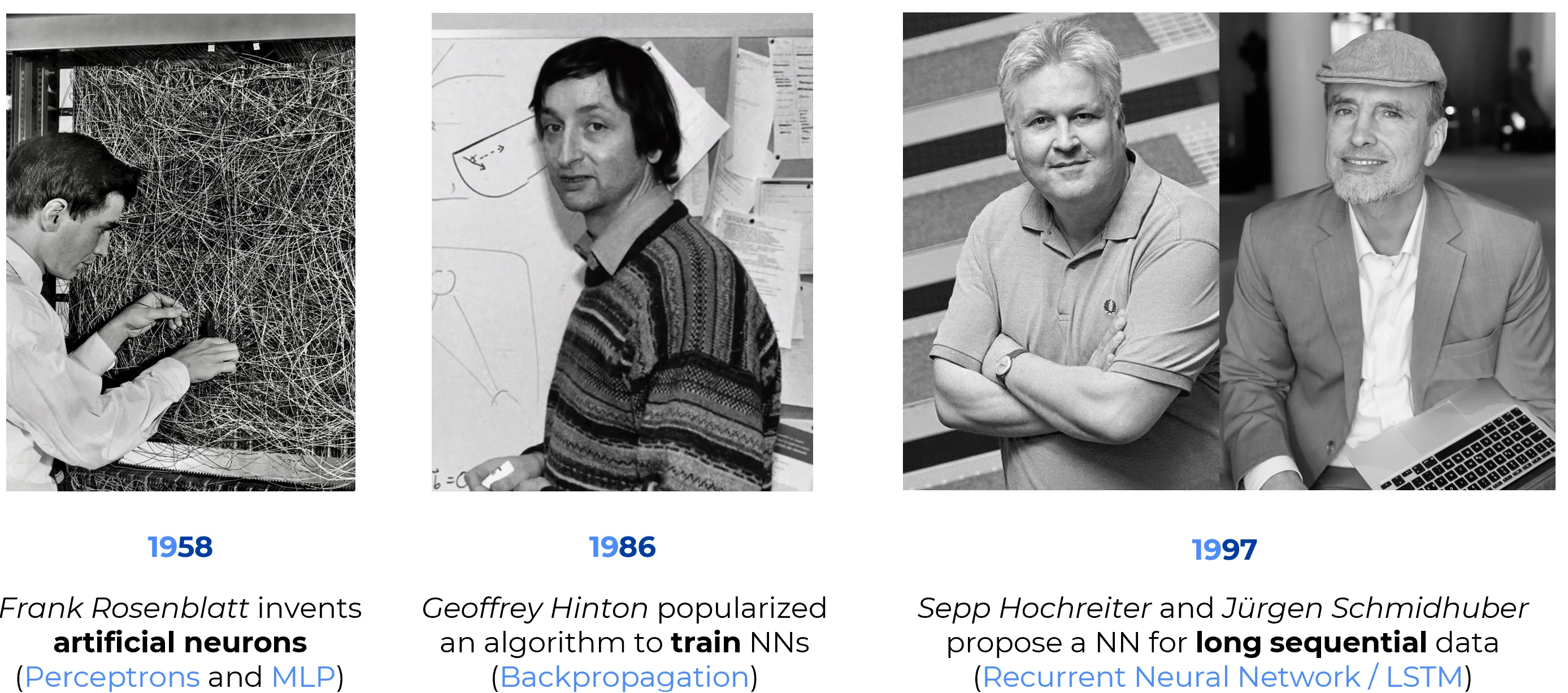

The history of deep learning is not best understood as a smooth, linear progression. It is more accurately described as a sequence of conceptual breakthroughs, each of which addressed a specific mathematical or computational bottleneck. A selective look at these milestones clarifies how the field moved from simple linear classifiers to trainable multi-layer networks and, eventually, to architectures capable of handling long-range temporal dependencies.

Abstract

This is a selective historical trajectory, not a complete history of neural networks. It focuses on three pivotal milestones: the Perceptron, Backpropagation, and LSTM. Other major developments, such as CNNs, attention, and Transformers, are treated elsewhere.

1958 – Frank Rosenblatt and the Perceptron

| Attribute | Description |
|---|---|
| Historical Context | In the late 1950s, within the broader intellectual climate of cybernetics and early pattern recognition, Frank Rosenblatt developed the Perceptron at the Cornell Aeronautical Laboratory. The goal was not only engineering-oriented classification, but also a computational model inspired by biological perception and learning. |
| Core Concept | The Perceptron is a linear threshold unit: it computes a weighted sum of its inputs, adds a bias term, and applies a threshold to produce a binary decision. It is one of the earliest influential models of a trainable artificial neuron. |
| Innovation | Its significance lies in showing that the parameters of a classifier could be updated directly from examples. In modern terms, this was an early and highly influential form of supervised learning for neural systems. Rosenblatt also demonstrated hardware feasibility through the Mark I Perceptron, an electromechanical implementation connected to a visual input device. |
| Known Limits | A single-layer Perceptron can represent only linear decision boundaries, so it can correctly classify only linearly separable data; XOR is the standard counterexample. This limitation became central to later criticism, especially after Minsky and Papert’s book Perceptrons (1969), which analyzed the restricted expressive power of single-layer architectures. Their critique did not single-handedly cause the AI winter, but it significantly reinforced skepticism toward connectionist methods. |
| Impact | The Perceptron established the basic conceptual template of trainable neural classification: weighted inputs, adjustable parameters, and learning from data. It became a foundational reference point for later connectionist models. |
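The threshold unit and its error-driven update rule can be sketched in a few lines. The following is a minimal illustration in plain Python, not a reconstruction of Rosenblatt's hardware or original procedure; the function names, the learning rate, and the epoch limit are assumptions chosen for the example. It trains on AND (linearly separable) and on XOR (not linearly separable) to make the Known Limits row concrete.

```python
def predict(weights, bias, x):
    """Linear threshold unit: weighted sum plus bias, then a hard threshold."""
    activation = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if activation > 0 else 0


def train_perceptron(samples, epochs=25, lr=1.0):
    """Error-driven rule: weights change only on misclassified examples.

    Returns the weights, the bias, and the number of mistakes in the last pass.
    """
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    mistakes = 0
    for _ in range(epochs):
        mistakes = 0
        for x, target in samples:
            error = target - predict(weights, bias, x)  # -1, 0, or +1
            if error != 0:
                mistakes += 1
                weights = [w + lr * error * xi for w, xi in zip(weights, x)]
                bias += lr * error
        if mistakes == 0:  # a full pass without errors: training has converged
            break
    return weights, bias, mistakes


AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

and_w, and_b, and_mistakes = train_perceptron(AND_DATA)
xor_w, xor_b, xor_mistakes = train_perceptron(XOR_DATA)

print("AND solved:", all(predict(and_w, and_b, x) == t for x, t in AND_DATA))
print("XOR solved:", all(predict(xor_w, xor_b, x) == t for x, t in XOR_DATA))
```

On AND the loop stops as soon as a full pass produces no mistakes. On XOR no setting of two weights and a bias separates the classes, so every pass contains at least one error no matter how many epochs are allowed.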

Historical Anecdote: Early Hype

Public expectations for the Perceptron were extraordinarily ambitious. Contemporary press coverage framed it as a machine that might eventually acquire human-like perceptual and cognitive abilities. This rhetoric contributed to a cycle that would later become familiar in AI history: overstatement, disappointment, and backlash. The Perceptron was scientifically important, but early public expectations greatly exceeded its actual mathematical capabilities.

1986 – The Popularization of Backpropagation

| Attribute | Description |
|---|---|
| Problem Solved | Training multi-layer neural networks was difficult because learning signals had to be assigned not only to the output layer, but also to hidden layers whose contribution to the final error was indirect. A practical method was needed to compute these gradients efficiently. |
| Core Idea | Backpropagation applies the chain rule of calculus systematically through a composed differentiable model. In modern language, it computes derivatives through a computational graph by propagating error signals from the output back toward earlier layers. |
| Algorithmic Structure | 1. Forward pass: compute activations and the loss function. 2. Backward pass: compute partial derivatives of the loss with respect to intermediate activations and parameters. 3. Parameter update: use gradient-based optimization to reduce the loss. |
| Practical Result | Backpropagation made it feasible to train multi-layer perceptrons with hidden units that could learn internal feature representations rather than relying only on manually engineered features. This was a decisive step beyond the limitations of the single-layer Perceptron. |
| Methodological Shift | The broader consequence was the emergence of the differentiable paradigm: model design became increasingly tied to end-to-end gradient-based optimization. This paradigm later supported CNNs, sequence models, embeddings, and most of modern deep learning. |
| Impact | The 1986 work of Rumelhart, Hinton, and Williams did not invent the mathematics from scratch, but it played a decisive role in showing that gradient-based learning could train hidden representations effectively, and it thereby helped revive neural network research. |
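The three steps in the Algorithmic Structure row (forward pass, backward pass, parameter update) can be made concrete with a very small network. The sketch below is illustrative only and is not the setup of the 1986 paper: the 2-3-1 layer sizes, sigmoid activations, squared-error loss, learning rate, and all function names are assumptions. It also compares one analytic gradient against a finite-difference estimate, a common sanity check for hand-written backward passes.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(params, x):
    """Forward pass: hidden activations h and the scalar output y."""
    W1, b1, W2, b2 = params
    h = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b) for row, b in zip(W1, b1)]
    y = sigmoid(sum(w * hi for w, hi in zip(W2, h)) + b2)
    return h, y


def loss(params, x, target):
    return 0.5 * (forward(params, x)[1] - target) ** 2


def backward(params, x, target):
    """Backward pass: apply the chain rule from the loss back to each parameter."""
    W1, b1, W2, b2 = params
    h, y = forward(params, x)
    delta_out = (y - target) * y * (1.0 - y)  # dL/d(output pre-activation)
    grad_W2 = [delta_out * hi for hi in h]
    # Hidden units receive the output error routed back through W2.
    delta_hid = [delta_out * w * hi * (1.0 - hi) for w, hi in zip(W2, h)]
    grad_W1 = [[d * xi for xi in x] for d in delta_hid]
    return grad_W1, delta_hid, grad_W2, delta_out  # bias gradients equal the deltas


def sgd_step(params, grads, lr):
    """Parameter update: move each parameter against its gradient."""
    W1, b1, W2, b2 = params
    gW1, gb1, gW2, gb2 = grads
    return (
        [[w - lr * g for w, g in zip(row, grow)] for row, grow in zip(W1, gW1)],
        [b - lr * g for b, g in zip(b1, gb1)],
        [w - lr * g for w, g in zip(W2, gW2)],
        b2 - lr * gb2,
    )


def numeric_grad_w1(params, x, target, i, j, eps=1e-6):
    """Central finite difference for dL/dW1[i][j], used only as a sanity check."""
    W1, b1, W2, b2 = params
    up = [row[:] for row in W1]
    down = [row[:] for row in W1]
    up[i][j] += eps
    down[i][j] -= eps
    diff = loss((up, b1, W2, b2), x, target) - loss((down, b1, W2, b2), x, target)
    return diff / (2 * eps)


rng = random.Random(0)  # fixed seed so the run is repeatable
params = (
    [[rng.uniform(-1, 1) for _ in range(2)] for _ in range(3)],  # W1: 3 hidden x 2 inputs
    [0.0, 0.0, 0.0],                                             # b1
    [rng.uniform(-1, 1) for _ in range(3)],                      # W2
    0.0,                                                         # b2
)
x, target = (1.0, 0.5), 1.0

analytic = backward(params, x, target)[0][0][0]
numeric = numeric_grad_w1(params, x, target, 0, 0)

loss_before = loss(params, x, target)
for _ in range(200):
    params = sgd_step(params, backward(params, x, target), lr=0.5)
loss_after = loss(params, x, target)
```

The hidden-layer error `delta_hid` is the part a single-layer learning rule cannot supply: it is obtained by sending the output error back through `W2`, which is the credit assignment to hidden units described in the Problem Solved row.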

Historical Nuance: Popularization, Not Absolute Origin

The 1986 paper is rightly famous, but it should be described as the popularization and practical demonstration of backpropagation for neural networks, not as the sole origin of the underlying mathematics.

Important precursors include:

- Seppo Linnainmaa (1970), who described reverse-mode automatic differentiation

- Paul Werbos (1974), who proposed applying these ideas to neural networks

The 1986 contribution was pivotal because it demonstrated, in a clear and influential way, that hidden layers could learn useful internal representations through gradient propagation.

1997 – Hochreiter & Schmidhuber and Long Short-Term Memory (LSTM)

| Attribute | Description |
|---|---|
| Challenge | Standard Recurrent Neural Networks (RNNs) struggled to learn long-range temporal dependencies because gradients tended to vanish or explode as they were propagated through many time steps. This made long-term credit assignment extremely difficult. |
| Original 1997 Solution | Long Short-Term Memory (LSTM) introduced a memory cell architecture specifically designed to preserve useful error signals across long temporal intervals. The key idea was to create a path through time along which information and gradients could persist more effectively. |
| Technical Innovation | The original LSTM architecture introduced the cell state, input and output gating, and the Constant Error Carousel (CEC), a linear self-connection with fixed weight 1.0 that keeps error flow through the cell constant over time. Its additive memory dynamics made optimization far more stable than in standard recurrent networks. |
| What It Changed | LSTM made sequence learning substantially more practical in tasks where distant past information mattered, including speech recognition, language modeling, handwriting recognition, and machine translation. |
| Legacy | LSTM became the dominant recurrent architecture for sequence modeling for many years. It directly influenced later gated recurrent architectures such as the GRU, and it helped establish the broader principle that trainable memory mechanisms are central to intelligent sequence processing. |
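The contrast between a standard recurrent unit and the additive memory path can be illustrated numerically. The sketch below is a deliberately reduced scalar model, not the full 1997 architecture: the one-unit RNN `h_t = tanh(w * h_{t-1} + x_t)`, the choice of weights, the 50-step horizon, and the function names are all assumptions. It tracks how much a change in the initial state still influences the state after many steps.

```python
import math


def rnn_gradient(w, inputs, h0=0.0):
    """Return d h_T / d h_0 for the scalar RNN h_t = tanh(w * h_{t-1} + x_t)."""
    h, grad = h0, 1.0
    for x in inputs:
        h = math.tanh(w * h + x)
        grad *= w * (1.0 - h * h)  # one-step Jacobian, multiplied along the path
    return grad


def cec_gradient(writes, c0=0.0):
    """Return d c_T / d c_0 for an additive cell c_t = 1.0 * c_{t-1} + write_t."""
    c, grad = c0, 1.0
    for write in writes:
        c = 1.0 * c + write
        grad *= 1.0  # the self-connection has fixed weight 1.0
    return grad


steps = 50
vanishing = rnn_gradient(0.5, [0.3] * steps)  # each factor is at most 0.5
exploding = rnn_gradient(1.5, [0.0] * steps)  # h stays at 0, so every factor is 1.5
carousel = cec_gradient([0.3] * steps)        # every factor is exactly 1.0

print(f"RNN, w=0.5: {vanishing:.3e}")
print(f"RNN, w=1.5: {exploding:.3e}")
print(f"Additive cell: {carousel}")
```

In the recurrent unit the gradient is a product of per-step factors, so it shrinks or grows geometrically with sequence length. In the additive cell each factor is 1, which is the property the Constant Error Carousel relies on; in a full LSTM the gates then control what is written to and read from that path.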

Historical Precision: The Forget Gate Came Later

A common simplification is to describe the 1997 LSTM as if it already contained the modern trio of input, forget, and output gates. This is not strictly correct.

The original 1997 LSTM paper by Hochreiter and Schmidhuber introduced the memory cell, input gating, output gating, and the Constant Error Carousel. The now-standard forget gate was added later by Gers, Schmidhuber, and Cummins (2000) to allow the network to learn when to reset internal state during continual input streams.
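The difference between the two cell updates can be sketched for a single scalar cell. This is an illustrative simplification, assuming fixed gate pre-activations instead of learned ones and a `tanh` candidate value as in common modern formulations; the function names and constants are hypothetical. Passing `forget_logit=None` mimics the 1997-style update, in which the previous cell state is always carried over with weight 1.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def cell_step(c_prev, candidate, in_logit, forget_logit=None):
    """One cell-state update.

    forget_logit=None -> 1997-style: c_t = c_{t-1} + i_t * g_t
    forget_logit=z    -> with forget gate: c_t = f_t * c_{t-1} + i_t * g_t
    """
    i = sigmoid(in_logit)
    f = 1.0 if forget_logit is None else sigmoid(forget_logit)
    return f * c_prev + i * math.tanh(candidate)


def run_stream(steps, forget_logit=None):
    """Feed the same input at every step of a continual stream."""
    c = 0.0
    for _ in range(steps):
        c = cell_step(c, candidate=1.0, in_logit=0.0, forget_logit=forget_logit)
    return c


c_without_forget = run_stream(1000)                 # keeps accumulating
c_with_forget = run_stream(1000, forget_logit=2.0)  # settles near a fixed value

print(f"without forget gate: {c_without_forget:.1f}")
print(f"with forget gate:    {c_with_forget:.2f}")
```

Without a forget gate the cell state grows roughly linearly on an unsegmented stream, which is the situation the forget gate was introduced to handle: a gate value below 1 lets old content decay, and a learned gate can drive it toward 0 to reset the cell.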

Historical Anecdote: Slow Recognition

The core ideas behind LSTM trace back to Sepp Hochreiter’s 1991 diploma thesis, which analyzed the vanishing gradient problem in recurrent networks. As often happens in AI, the importance of the method was not fully appreciated immediately. Over time, however, LSTMs became the standard recurrent architecture in industrial and academic sequence modeling before the rise of Transformer-based systems.

Logical Progression

- The Perceptron introduced the idea of a trainable artificial neuron and supervised adjustment of parameters from labeled examples.

- Backpropagation made it practical to optimize multi-layer differentiable networks and thereby learn internal representations.

- LSTM extended trainable neural computation into the temporal domain by stabilizing long-range credit assignment in recurrent models.

The Evolution of Bottlenecks

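Each milestone addressed a distinct bottleneck:

- Linear classification: the Perceptron showed that a linear decision rule could be learned from examples.

- Trainable hidden layers: backpropagation made it practical to assign credit to hidden units.

- Long-term temporal credit assignment: LSTM kept error signals usable across many time steps.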

Summary Table

| Year | Milestone | Why It Matters |
|---|---|---|
| 1958 | Perceptron | Established the trainable linear threshold unit and the paradigm of learning from examples. |
| 1986 | Backpropagation | Made multi-layer neural networks practically trainable through gradient-based optimization. |
| 1997 | LSTM | Enabled recurrent models to learn long-range dependencies far more effectively than standard RNNs. |

Key Takeaway

The major advances in neural networks did not arise from vague increases in complexity, but from targeted solutions to concrete bottlenecks.

- The Perceptron addressed learnable classification.

- Backpropagation addressed optimization in layered networks.

- LSTM addressed memory and gradient stability over time.

Together, these milestones provide the conceptual foundation for understanding later architectures, which build on the same recurring themes: parameterized neurons, gradient-based learning, and structured mechanisms for retaining relevant information.

Selected Sources

- Rumelhart, Hinton, Williams, Learning representations by back-propagating errors (Nature, 1986)

- Hochreiter, Schmidhuber, Long Short-Term Memory (Neural Computation, 1997)

- Gers, Schmidhuber, Cummins, Learning to Forget: Continual Prediction with LSTM (Neural Computation, 2000)

- Smithsonian, Electronic Neural Network, Mark I Perceptron

- MIT Press, Perceptrons by Minsky and Papert (1969)

- Cornell Chronicle, Professor’s perceptron paved the way for AI – 60 years too soon (2019)