The Evolution of Attention and the Transformer Revolution

The transition from recurrent sequence models to Transformer-based large language models marks one of the most consequential architectural shifts in modern artificial intelligence. This shift did not happen in a single step. It emerged through a sequence of breakthroughs that removed specific bottlenecks: first the fixed-context bottleneck in encoder-decoder RNNs, then the sequential computation bottleneck of recurrence itself, and finally the challenge of turning powerful language models into systems that non-specialists could actually use.

Section Goal

This section traces three decisive milestones in the rise of modern language modeling:

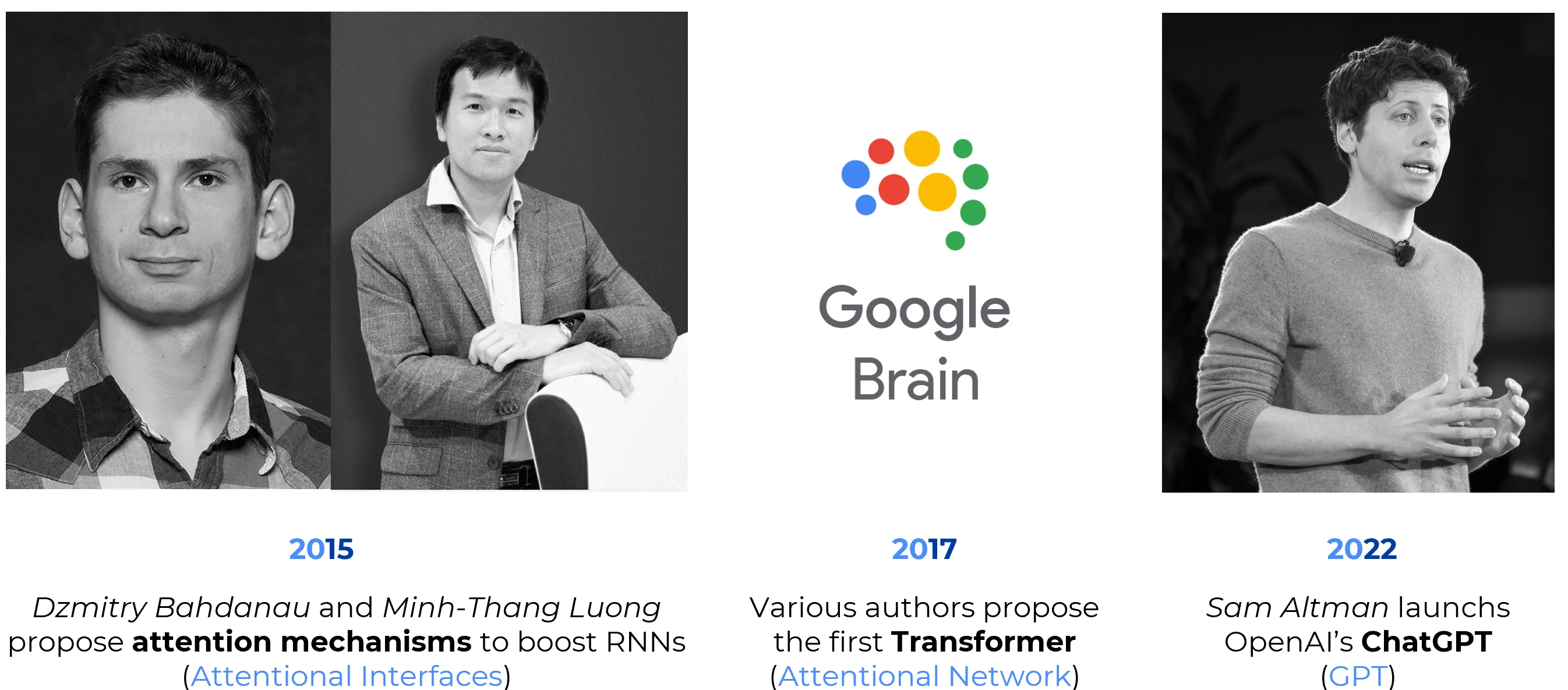

| Year | Researchers / Organization | Milestone | Why It Matters |
|---|---|---|---|
| 2014-2015 | Bahdanau, Cho, Bengio; Luong, Pham, Manning | Attention in Neural Machine Translation | Replaces the fixed-length context vector with dynamic token-level alignment |
| 2017 | Vaswani et al. | The Transformer | Eliminates recurrence and makes sequence modeling far more parallelizable |
| 2022 | OpenAI | ChatGPT | Brings Transformer-based language models into mainstream public use |

Note

This is a selective architectural history. It focuses on the path from attention in sequence-to-sequence models to Transformer-based language systems. It does not attempt to cover the full history of NLP, the entire GPT lineage, or the whole ecosystem of foundation models.

Historical Timeline: From Alignment to Ubiquity

| Year | Milestone | Technical Significance |

|---|---|---|

| 2014-2015 | Attention in RNN-based Seq2Seq models | Bahdanau, Cho, and Bengio introduced a mechanism that allowed the decoder to compute a dynamic context vector at each output step instead of relying on a single fixed-length encoding of the source sentence. Luong, Pham, and Manning later refined and systematized several attention variants for neural machine translation. |

| 2017 | The Transformer | Vaswani et al. introduced an attention-based encoder-decoder architecture that removed recurrence and convolution from the core sequence-transduction pipeline. The model combined self-attention, encoder-decoder attention, feed-forward layers, positional encodings, residual connections, and layer normalization. |

| 2022 | ChatGPT | OpenAI turned large Transformer-based language models into a mass-adoption interface through a conversational product. The novelty was not a new network family, but the combination of pretrained language modeling, instruction tuning, and reinforcement learning from human feedback into a broadly usable system. |

2014-2015 - Attention in Neural Machine Translation

| Aspect | Details |

|---|---|

| Historical Problem | Early sequence-to-sequence RNNs attempted to compress the entire input sequence into a single fixed-length vector before decoding. This created a severe bottleneck, especially for long or information-dense sentences. |

| Core Idea | Attention allows the decoder to compute a different context vector at each output step, assigning higher weight to the most relevant input positions for the token currently being generated. |

| What Changed | Instead of forcing all source information into one vector, the model learned a form of soft alignment between source and target tokens. This made translation more accurate and more interpretable. |

| Scientific Impact | Attention became one of the central ideas in modern deep learning because it showed that selective access to internal representations could outperform uniform compression. |

| Historical Importance | This was the conceptual bridge between recurrent encoder-decoder models and the later Transformer family. |

Why Attention Was a Breakthrough

Attention solved a real architectural bottleneck: the decoder no longer had to rely on a single summary of the entire source sentence. Instead, it could retrieve relevant information dynamically during generation.

In practical terms, this improved translation quality, made long sequences easier to handle, and introduced a new view of neural computation as content-based retrieval rather than passive compression.
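
The dynamic context vector described above can be sketched in a few lines of NumPy. This is a minimal illustration of Bahdanau-style additive scoring, not the authors' implementation: the parameter names `W_s`, `W_h`, and `v`, the dimensions, and the random inputs are all assumptions chosen for readability.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the max for numerical stability before exponentiating.
    x = x - np.max(x, axis=axis, keepdims=True)
    e = np.exp(x)
    return e / np.sum(e, axis=axis, keepdims=True)

def additive_attention(decoder_state, encoder_states, W_s, W_h, v):
    """Return (context, weights) for a single decoder step.

    decoder_state:  (d_dec,)          previous decoder hidden state
    encoder_states: (src_len, d_enc)  one vector per source token
    W_s, W_h, v:    hypothetical learned parameters of the scoring network
    """
    # Score every source position against the current decoder state.
    hidden = np.tanh(encoder_states @ W_h.T + W_s @ decoder_state)  # (src_len, d_att)
    scores = hidden @ v                                             # (src_len,)
    weights = softmax(scores)           # soft alignment over source tokens
    context = weights @ encoder_states  # weighted average of encoder states
    return context, weights

# Illustrative sizes and random values only.
rng = np.random.default_rng(0)
src_len, d_enc, d_dec, d_att = 5, 8, 6, 4
H = rng.normal(size=(src_len, d_enc))
s = rng.normal(size=d_dec)
W_s = rng.normal(size=(d_att, d_dec))
W_h = rng.normal(size=(d_att, d_enc))
v = rng.normal(size=d_att)

context, weights = additive_attention(s, H, W_s, W_h, v)
print("alignment weights:", np.round(weights, 3))
```

Because `weights` is recomputed from the decoder state at every output step, each generated token gets its own context vector rather than sharing one fixed summary.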

Historical Precision

It is common to label this milestone simply as “2015 attention,” but the chronology is slightly more precise:

- Bahdanau, Cho, and Bengio introduced the influential alignment-based mechanism in 2014 (published at ICLR 2015).

- Luong, Pham, and Manning extended and compared attention variants in 2015.

A Conceptual Reframing

Attention can be interpreted as an early form of differentiable memory addressing: the model does not merely store information in a hidden state, but learns how to retrieve the most relevant part of that stored information when needed.

2017 - The Transformer

| Aspect | Details |

|---|---|

| Historical Problem | Even with attention, RNN-based models still processed sequences step by step. This limited parallelism, increased training time, and made long-range dependency modeling harder than necessary. |

| Core Idea | The Transformer replaced recurrent state transitions with attention-based sequence modeling. Every token could directly attend to every other relevant token within the same layer. |

| Architecture | The canonical Transformer introduced in Attention Is All You Need combines multi-head self-attention, encoder-decoder attention, position-wise feed-forward networks, positional encodings, residual pathways, and layer normalization. |

| Why It Scaled | Since tokens can be processed in parallel during training, the architecture aligns much better with modern accelerators such as GPUs and TPUs than standard recurrent models do. |

| Results | The original paper reported state-of-the-art BLEU scores on the WMT 2014 English-to-German and English-to-French translation tasks, at a fraction of the training cost of the best previously published models. |

| Historical Importance | The Transformer became the foundational architecture behind modern LLMs and later spread far beyond language into vision, audio, biology, robotics, and multimodal learning. |

The Real Significance of the Transformer

The Transformer was not merely “an attention model.” Its deeper importance was that it redefined sequence modeling around parallelizable token-to-token interactions, making large-scale training dramatically more practical.

Common Oversimplification

It is inaccurate to say that the Transformer consists only of “self-attention plus feed-forward layers.”

The original architecture also includes:

- encoder-decoder attention

- positional encodings

- residual connections

- layer normalization

What the paper demonstrated is more specific: recurrence and convolution were no longer necessary as the core sequence-processing mechanism.

A Small but Famous Technical Curiosity

By current standards, the original Transformer was remarkably small.

- Transformer Base: about 65 million parameters

- Transformer Big: about 213 million parameters

Yet those models were enough to trigger one of the largest architectural realignments in AI history.

Why Self-Attention Changed the Geometry of Sequence Modeling

In recurrent models, information from distant tokens must propagate through many sequential steps. In self-attention, any two tokens can interact within a single layer, so the path between distant positions has constant length instead of growing with their distance. This makes long-range dependencies easier to model.
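
A minimal single-head sketch of scaled dot-product self-attention makes the direct token-to-token path concrete. The projection matrices `W_q`, `W_k`, `W_v` and the sizes are illustrative assumptions with random values, not trained weights.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - np.max(x, axis=axis, keepdims=True)
    e = np.exp(x)
    return e / np.sum(e, axis=axis, keepdims=True)

def self_attention(X, W_q, W_k, W_v):
    """Single-head scaled dot-product self-attention over X of shape (n, d_model)."""
    Q, K, V = X @ W_q, X @ W_k, X @ W_v
    d_k = Q.shape[-1]
    scores = Q @ K.T / np.sqrt(d_k)  # (n, n): every token scored against every token
    A = softmax(scores, axis=-1)     # each row is a distribution over positions
    return A @ V, A

rng = np.random.default_rng(0)
n, d_model, d_k = 6, 8, 4
X = rng.normal(size=(n, d_model))
W_q, W_k, W_v = (rng.normal(size=(d_model, d_k)) for _ in range(3))

out, A = self_attention(X, W_q, W_k, W_v)
# The first token has a nonzero weight on the last token after one layer,
# whereas an RNN would need n - 1 sequential steps to connect them.
print("weight of token 0 on token 5:", A[0, -1])
```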

After 2017 - The Great Branching of the Transformer Family

The original Transformer was an encoder-decoder architecture designed for sequence transduction. But once the attention-based design proved effective, the field rapidly split it into several powerful lineages.

| Transformer Lineage | Representative Models | Typical Use |

|---|---|---|

| Encoder-only | BERT, RoBERTa | Language understanding, classification, retrieval |

| Decoder-only | GPT family | Autoregressive generation, in-context learning, LLM chat systems |

| Encoder-decoder | T5, BART | Translation, summarization, structured sequence transformation |

This branching is historically important. The Transformer did not simply become one model. It became a general architectural template from which several cultures of language modeling emerged.

A Key Historical Consequence

The period after 2017 should not be understood merely as “bigger Transformers.” It was also the period in which the field discovered that different tasks favor different slices of the original architecture:

- encoder-only models for deep bidirectional understanding

- decoder-only models for generative continuation

- encoder-decoder models for controlled input-output transformation
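
One concrete difference between the encoder-style and decoder-style lineages is the attention mask. The sketch below is a simplified illustration, with uniform scores chosen so that the effect of the mask is easy to read. Encoder-decoder models use both kinds of self-attention and add cross-attention between the two stacks, which is not shown here.

```python
import numpy as np

def attention_weights(scores, mask):
    # Positions where mask is False are excluded by setting their score to -inf.
    masked = np.where(mask, scores, -np.inf)
    masked = masked - masked.max(axis=-1, keepdims=True)
    e = np.exp(masked)
    return e / e.sum(axis=-1, keepdims=True)

n = 4
scores = np.zeros((n, n))  # uniform scores keep the example readable

bidirectional = np.ones((n, n), dtype=bool)    # encoder-style: token i sees all tokens
causal = np.tril(np.ones((n, n), dtype=bool))  # decoder-style: token i sees tokens 0..i

enc_weights = attention_weights(scores, bidirectional)
dec_weights = attention_weights(scores, causal)

print("encoder-style weights:\n", enc_weights)
print("decoder-style weights:\n", np.round(dec_weights, 3))
```

With the causal mask, each row places zero weight on later positions, which is what allows a decoder-only model to be trained on next-token prediction without seeing the answer.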

Why This Matters for Modern AI

Many public discussions flatten everything into “ChatGPT-style models.” In reality, the Transformer revolution created an entire family tree, not a single species.

2018-2021 - The Silent Revolution: Scaling Laws and Structural Stability

Between the introduction of the Transformer and the release of ChatGPT, the field learned that “scaling up” was not just a matter of adding more GPUs. It depended on empirical scaling laws and on architectural choices that keep optimization stable at depth.

| Aspect | Details |

|---|---|

| The Scaling Laws (2020) | Researchers at OpenAI (Kaplan et al., 2020), followed by DeepMind (Hoffmann et al., 2022), found that the cross-entropy loss of a Transformer language model follows an approximate power law in compute ($C$), dataset size ($D$), and parameter count ($N$). |

| The Formula | When the other two factors are not the bottleneck, loss falls as $L(X) \propto X^{-\alpha_X}$ for $X \in \{C, D, N\}$, where $\alpha_X$ is an empirically fitted scaling exponent. This meant that model performance was no longer a guessing game, but an approximately predictable engineering target. |

| From Post-Norm to Pre-Norm | The original 2017 Transformer used “Post-Norm” (LayerNorm after the residual add): $x_{l+1} = \mathrm{LayerNorm}(x_l + F(x_l))$. This tended to produce unstable gradients in very deep stacks. Switching to Pre-Norm, $x_{l+1} = x_l + F(\mathrm{LayerNorm}(x_l))$, allowed gradients to flow more directly through the residual path, making much deeper models practical to train without divergence. |

| In-Context Learning (GPT-3) | In 2020, GPT-3 (Brown et al., 175B parameters) showed that a sufficiently large model can pick up a new task from instructions or a few examples placed in the prompt, with no gradient updates. This ability emerged without explicit training for those specific tasks. |
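
To see why a power law makes performance predictable, consider a loss of the assumed form $L(N) = (N_c / N)^{\alpha}$. Such a curve is a straight line in log-log space, so a fit on small models can be extrapolated to larger ones. The constants below are hypothetical values chosen for illustration and are not taken from any paper.

```python
import numpy as np

# Hypothetical constants for illustration only; not fitted values from any paper.
alpha_true = 0.08
N_c = 1e13

def loss(n_params):
    # Assumed power-law form: L(N) = (N_c / N) ** alpha
    return (N_c / n_params) ** alpha_true

# "Measure" the loss of four small models, then fit a line in log-log space:
# log L = alpha * log N_c - alpha * log N
n = np.array([1e6, 1e7, 1e8, 1e9])
L = loss(n)
slope, intercept = np.polyfit(np.log(n), np.log(L), deg=1)
alpha_fit = -slope

# Extrapolate to a model ten times larger than any in the fit.
predicted_10b = np.exp(intercept + slope * np.log(1e10))
print("fitted exponent:", alpha_fit)
print("predicted vs. actual loss at 1e10 params:", predicted_10b, loss(1e10))
```

Real measurements are noisy and the power law holds only over a limited range, so in practice the extrapolation carries uncertainty that this synthetic example does not show.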

Why the Pre-Norm Shift Was Critical

If the original Transformer was a car, the shift to Pre-Norm was the invention of a stable cooling system. In a Post-Norm architecture, the residual sum is renormalized at every layer, so the identity path is slightly altered each time and gradients can vanish or explode as depth increases. In Pre-Norm, the “identity highway” ($x_{l+1} = x_l + F(\mathrm{LayerNorm}(x_l))$) remains cleaner throughout the network, which is one of the main reasons very deep models with 100+ billion parameters can be trained without the run diverging early.
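
The difference between the two orderings is easiest to see in code. The sketch below is a simplified illustration: `sublayer` is a hypothetical stand-in for attention or a feed-forward block, and `layer_norm` omits the learnable gain and bias. With a sublayer that contributes nothing, the Pre-Norm block reduces to an exact identity, while the Post-Norm block still rescales its input.

```python
import numpy as np

def layer_norm(x, eps=1e-5):
    # Simplified LayerNorm without learnable gain and bias.
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def sublayer(x, W):
    # Hypothetical stand-in for attention or a feed-forward block.
    return np.maximum(x @ W, 0.0)

def post_norm_block(x, W):
    # Original 2017 ordering: normalize after the residual addition.
    return layer_norm(x + sublayer(x, W))

def pre_norm_block(x, W):
    # Pre-Norm ordering: normalize only the sublayer input,
    # leaving the residual (identity) path untouched.
    return x + sublayer(layer_norm(x), W)

rng = np.random.default_rng(0)
x = rng.normal(loc=1.0, scale=3.0, size=(4, 8))
W_zero = np.zeros((8, 8))  # a sublayer that contributes nothing

pre_out = pre_norm_block(x, W_zero)
post_out = post_norm_block(x, W_zero)
print("pre-norm keeps the input unchanged: ", np.array_equal(pre_out, x))
print("post-norm keeps the input unchanged:", np.allclose(post_out, x))
```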

Technical Insight

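Many openly documented modern LLMs (for example, the Llama family) use a variation of Pre-Norm, often with RMSNorm instead of standard LayerNorm, to keep training stable at the scale of trillions of tokens. The architectures of closed models such as GPT-4 and Claude have not been published in detail.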
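
For reference, RMSNorm drops the mean-centering step of LayerNorm and rescales each vector by its root mean square. The sketch below assumes a per-feature gain vector `gain` and an `eps` value chosen for illustration.

```python
import numpy as np

def rms_norm(x, gain, eps=1e-6):
    # Rescale by the root mean square of the features; no mean subtraction.
    rms = np.sqrt(np.mean(x ** 2, axis=-1, keepdims=True) + eps)
    return x / rms * gain

rng = np.random.default_rng(0)
x = rng.normal(loc=2.0, scale=3.0, size=(4, 16))
y = rms_norm(x, gain=np.ones(16))

# After normalization every row has a root mean square close to 1,
# but, unlike LayerNorm, its mean is generally not zero.
print("row RMS: ", np.round(np.sqrt(np.mean(y ** 2, axis=-1)), 4))
print("row mean:", np.round(y.mean(axis=-1), 4))
```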

2022 - ChatGPT and Mass Adoption

| Aspect | Details |

|---|---|

| Historical Problem | By the early 2020s, large pretrained language models were already powerful, but they remained difficult for non-specialists to use effectively. There was still a gap between benchmark performance and widespread public utility. |

| Product Shift | ChatGPT transformed the interaction model by exposing a large language model through a conversational interface. This made prompting, iteration, and task delegation accessible to the general public. |

| Technical Stack | The significance of ChatGPT lies not in the invention of a new architecture, but in the combination of Transformer-based pretraining, instruction-following behavior, and reinforcement learning from human feedback (RLHF). |

| Adoption Impact | ChatGPT was among the fastest-adopted consumer applications on record, reaching 1 million users in about five days and an estimated 100 million users in roughly two months, according to figures cited by OpenAI. |

| Historical Importance | ChatGPT marked the moment when the Transformer ceased to be only an academic and industrial architecture and became a general-purpose public interface for knowledge work, writing, coding, tutoring, and everyday problem solving. |

Why 2022 Matters

ChatGPT did not invent the Transformer, and it was not the first capable large language model. Its importance was different: it converted a technical capability into a globally legible product form.

Architectural vs. Product Milestone

The years 2014-2015 and 2017 represent architectural breakthroughs. The year 2022 represents a deployment and adoption breakthrough.

This distinction matters. Not every turning point in AI history is a new model class; some are new ways of packaging and aligning an existing model family for human use.

A Deeper Interpretation

ChatGPT changed more than product adoption. It changed what the public thought a computer interface could be. For decades, software exposed functionality through menus, forms, and commands. ChatGPT suggested that a large part of software could instead be mediated by natural language interaction.

Additional Technical Considerations

Positional Encoding: The Price of Removing Recurrence

A recurrent model carries order implicitly through time: token 7 comes after token 6 because the computation itself is sequential. Once recurrence is removed, order must be reintroduced explicitly. This is why the original Transformer added positional encodings to token embeddings.

Curiosity: Positional Encoding as an Architectural Confession

Positional encoding is a subtle but profound detail. It reveals that self-attention alone is content-aware but order-agnostic.

In other words, the Transformer needed to be told where tokens are, because unlike an RNN, it has no built-in notion of temporal order.
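
The order-agnostic behavior can be checked directly. In the sketch below (random weights, no positional information, all names illustrative), shuffling the input tokens simply shuffles the outputs in the same way, which means self-attention alone cannot tell one ordering from another.

```python
import numpy as np

def self_attention(X, W_q, W_k, W_v):
    Q, K, V = X @ W_q, X @ W_k, X @ W_v
    scores = Q @ K.T / np.sqrt(Q.shape[-1])
    scores = scores - scores.max(axis=-1, keepdims=True)
    A = np.exp(scores)
    A = A / A.sum(axis=-1, keepdims=True)
    return A @ V

rng = np.random.default_rng(0)
n, d = 5, 8
X = rng.normal(size=(n, d))  # token embeddings with no positional signal
W_q, W_k, W_v = (rng.normal(size=(d, d)) for _ in range(3))

perm = np.array([4, 2, 0, 3, 1])  # an arbitrary reordering of the tokens
out = self_attention(X, W_q, W_k, W_v)
out_shuffled = self_attention(X[perm], W_q, W_k, W_v)

# Each token receives the same output vector regardless of where it sits.
order_blind = np.allclose(out[perm], out_shuffled)
print("output depends only on content, not position:", order_blind)
```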

The original paper used sinusoidal positional encodings, partly because they might allow extrapolation to sequence lengths longer than those seen during training. Later models explored learned positional embeddings, relative position schemes, rotary embeddings, and other alternatives.
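
The sinusoidal scheme from the original paper sets $PE(pos, 2i) = \sin(pos / 10000^{2i/d_{model}})$ and $PE(pos, 2i+1) = \cos(pos / 10000^{2i/d_{model}})$. A minimal NumPy sketch follows; the function name and the sizes are illustrative, and `d_model` is assumed to be even.

```python
import numpy as np

def sinusoidal_positional_encoding(max_len, d_model):
    """Return a (max_len, d_model) matrix of sinusoidal encodings (d_model even)."""
    positions = np.arange(max_len)[:, None]   # (max_len, 1)
    dims = np.arange(0, d_model, 2)[None, :]  # even dimension indices 2i
    angles = positions / np.power(10000.0, dims / d_model)
    pe = np.zeros((max_len, d_model))
    pe[:, 0::2] = np.sin(angles)  # even dimensions
    pe[:, 1::2] = np.cos(angles)  # odd dimensions
    return pe

pe = sinusoidal_positional_encoding(max_len=50, d_model=16)

# The encodings are added to the token embeddings before the first layer.
rng = np.random.default_rng(0)
token_embeddings = rng.normal(size=(50, 16))
inputs = token_embeddings + pe
```

Because the encoding is a fixed function of the position index, it can be evaluated for any position, including positions beyond those seen during training.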

Multi-Head Attention: One Sequence, Many Relational Views

The Transformer does not compute a single attention map. It computes several in parallel through multi-head attention. Each head projects the input into a different subspace, allowing the model to capture different kinds of relationships at once.

This is one of the reasons the architecture is so expressive. Some heads may focus on local syntactic relations, others on long-range dependencies, others on delimiter structure, and others on broader semantic alignment.

Intuition

Multi-head attention can be thought of as giving the model several parallel relational lenses over the same sequence rather than forcing all dependencies into one shared notion of similarity.
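
A compact sketch of multi-head self-attention shows how the "several lenses" are computed in one pass. The weight matrices, the output projection `W_o`, and the sizes are illustrative assumptions with random values, and `d_model` is assumed to be divisible by `num_heads`.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - np.max(x, axis=axis, keepdims=True)
    e = np.exp(x)
    return e / np.sum(e, axis=axis, keepdims=True)

def multi_head_self_attention(X, W_q, W_k, W_v, W_o, num_heads):
    n, d_model = X.shape
    d_head = d_model // num_heads

    def split(M):
        # (n, d_model) -> (num_heads, n, d_head): one subspace per head
        return M.reshape(n, num_heads, d_head).transpose(1, 0, 2)

    Q, K, V = split(X @ W_q), split(X @ W_k), split(X @ W_v)
    scores = Q @ K.transpose(0, 2, 1) / np.sqrt(d_head)  # (heads, n, n)
    A = softmax(scores, axis=-1)                         # one attention map per head
    heads = A @ V                                        # (heads, n, d_head)
    concat = heads.transpose(1, 0, 2).reshape(n, d_model)
    return concat @ W_o, A

rng = np.random.default_rng(0)
n, d_model, num_heads = 6, 16, 4
X = rng.normal(size=(n, d_model))
W_q, W_k, W_v, W_o = (rng.normal(size=(d_model, d_model)) for _ in range(4))

out, A = multi_head_self_attention(X, W_q, W_k, W_v, W_o, num_heads)
print("output shape:", out.shape)
print("attention maps shape (heads, n, n):", A.shape)
```

Each of the four maps in `A` is a separate distribution over positions, so different heads are free to weight the same sequence differently.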

The Hidden Cost of Attention

The success of the Transformer did not eliminate tradeoffs. Standard self-attention has quadratic complexity in sequence length, because every token attends to every other token. This becomes expensive for long documents, long-context reasoning, code repositories, video, and multimodal inputs.

The Price of Full Connectivity

The same mechanism that makes self-attention powerful also makes it expensive:

- more tokens mean more pairwise interactions

- more pairwise interactions mean more memory and compute

Much of post-2017 research can be read as an attempt to preserve the benefits of attention while reducing its computational cost.
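
A back-of-the-envelope calculation shows how quickly the pairwise cost grows. The helper below is a hypothetical illustration that counts the memory for one full attention score matrix (one head, one layer), assuming 2 bytes per value and assuming the matrix is materialized in full.

```python
def attention_matrix_bytes(seq_len, bytes_per_value=2):
    # One n x n score matrix for a single head in a single layer.
    return seq_len * seq_len * bytes_per_value

for n in (1_024, 8_192, 131_072):
    gib = attention_matrix_bytes(n) / 2**30
    print(f"{n:>7} tokens -> {gib:.3f} GiB per head per layer")
```

Doubling the sequence length quadruples the size of this matrix, which is why long-context settings motivate methods that avoid storing it in full.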

Inference Optimization: The KV Cache

Self-attention processes all $n$ tokens of a sequence in parallel during training, at $O(n^2)$ cost. At inference time, however, tokens are generated one by one, and decoding would be impossibly slow if the entire sequence were recomputed for every new token.

- The Mechanism: To avoid redundant calculations, we store the Keys (K) and Values (V) of all previous tokens in memory.

- The Benefit: For each new token, we compute only its own Query (Q), Key, and Value, append the new Key and Value to the cache, and take the dot product of Q with the cached Keys to weight the cached Values. This reduces the per-token attention cost from $O(n^2)$ to $O(n)$.

- The Tradeoff: This creates the “KV Cache Bottleneck”: models don’t just need compute (FLOPs), they need massive amounts of VRAM to store these caches, especially for long-context windows (e.g., 128k+ tokens).
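
The mechanism, benefit, and tradeoff above can be sketched for a single attention head. This is a simplified illustration with random weights and hypothetical names. It checks that cached decoding produces the same outputs as recomputing the whole prefix at every step, and then estimates cache size for a made-up model configuration.

```python
import numpy as np

def softmax(x):
    e = np.exp(x - x.max())
    return e / e.sum()

def attend(q, K, V):
    # q: (d,), K and V: (t, d). Attention output for one query vector.
    weights = softmax(K @ q / np.sqrt(q.shape[-1]))
    return weights @ V

rng = np.random.default_rng(0)
d, steps = 8, 6
W_q, W_k, W_v = (rng.normal(size=(d, d)) for _ in range(3))
tokens = rng.normal(size=(steps, d))  # stand-in for token embeddings

# Cached decoding: project each new token once and append its key and value.
K_cache = np.empty((0, d))
V_cache = np.empty((0, d))
cached_outputs = []
for x in tokens:
    K_cache = np.vstack([K_cache, x @ W_k])
    V_cache = np.vstack([V_cache, x @ W_v])
    cached_outputs.append(attend(x @ W_q, K_cache, V_cache))

# Naive decoding: re-project the entire prefix at every step.
naive_outputs = []
for t in range(1, steps + 1):
    prefix = tokens[:t]
    naive_outputs.append(attend(prefix[-1] @ W_q, prefix @ W_k, prefix @ W_v))

same = np.allclose(np.array(cached_outputs), np.array(naive_outputs))
print("cached decoding matches full recomputation:", same)

def kv_cache_bytes(seq_len, num_layers, num_kv_heads, head_dim, bytes_per_value=2):
    # The leading factor 2 accounts for storing both keys and values.
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * bytes_per_value

# Hypothetical configuration, not any specific published model.
size = kv_cache_bytes(seq_len=131_072, num_layers=32, num_kv_heads=32, head_dim=128)
print("KV cache for one sequence:", size / 2**30, "GiB")
```

The cache trades compute for memory: the per-step work shrinks, but the stored keys and values grow linearly with context length for every layer and head.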

This pressure led to a large family of efficiency-oriented ideas: sparse attention, linearized attention variants, chunking strategies, retrieval-augmented architectures, and systems work such as FlashAttention, which improves exact attention by optimizing memory movement rather than changing the mathematical operator itself.
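
The claim that exact attention can be made cheaper without changing the operator rests on a numerical trick: softmax can be accumulated block by block with a running maximum and a running denominator. The sketch below illustrates only that idea for a single query. It is not FlashAttention itself, which is a GPU kernel organized around memory movement, and the function names and block size are assumptions.

```python
import numpy as np

def attention_full(q, K, V):
    # Reference: materialize all scores at once.
    s = K @ q / np.sqrt(q.shape[-1])
    w = np.exp(s - s.max())
    return (w / w.sum()) @ V

def attention_blockwise(q, K, V, block=4):
    # Process keys and values in blocks, never holding all scores at once.
    m = -np.inf                 # running max of the scores seen so far
    denom = 0.0                 # running softmax denominator
    acc = np.zeros(V.shape[1])  # running weighted sum of values
    for start in range(0, K.shape[0], block):
        s = K[start:start + block] @ q / np.sqrt(q.shape[-1])
        m_new = max(m, s.max())
        scale = np.exp(m - m_new)  # rescale earlier partial results to the new max
        p = np.exp(s - m_new)
        denom = denom * scale + p.sum()
        acc = acc * scale + p @ V[start:start + block]
        m = m_new
    return acc / denom

rng = np.random.default_rng(0)
n, d = 10, 8
q = rng.normal(size=d)
K = rng.normal(size=(n, d))
V = rng.normal(size=(n, d))

full = attention_full(q, K, V)
blockwise = attention_blockwise(q, K, V, block=4)
print("blockwise result matches full attention:", np.allclose(full, blockwise))
```

The two results agree up to floating-point rounding, which is the sense in which such methods compute exact rather than approximate attention.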

Why These Milestones Define Modern AI

- Attention replaced static compression with dynamic retrieval. Sequence models no longer had to squeeze an entire input into one bottleneck vector.

- The Transformer replaced sequential recurrence with hardware-compatible parallel computation. This made scaling on modern accelerators dramatically more effective.

- ChatGPT turned Transformer-based language modeling into a mass interface. It did not change the core architecture, but it changed the social, economic, and cultural status of the technology.

Architectural Progression

Fixed context vector → Dynamic attention over encoder states → Fully attention-based sequence modeling → Mass adoption through conversational deployment

Historical Notes and Peculiar Curiosities

The Fixed-Context Bottleneck

The key insight of the Bahdanau model was not simply that “attention helps.” It was that forcing an encoder-decoder system to represent an entire sentence with a single vector is itself an architectural bottleneck.

The Transformer and Hardware

One reason the Transformer became dominant is that its design matches the strengths of modern compute infrastructure. Matrix-heavy parallel computation is a much better fit for GPUs and TPUs than strictly sequential recurrence.

The Noam Schedule

The original Transformer paper also popularized a distinctive learning-rate schedule with warmup followed by inverse-square-root decay. This became widely known as the Noam schedule, and it is one of those small optimization details that quietly shaped an entire generation of training practice.
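
The schedule from the paper is $lrate = d_{model}^{-0.5} \cdot \min(step^{-0.5},\ step \cdot warmup\_steps^{-1.5})$. A minimal sketch, using the paper's base settings of `d_model=512` and `warmup_steps=4000` as defaults (the function name is illustrative):

```python
def noam_learning_rate(step, d_model=512, warmup_steps=4000):
    # Linear warmup for the first warmup_steps, then inverse-square-root decay.
    # step is assumed to start at 1.
    return d_model ** -0.5 * min(step ** -0.5, step * warmup_steps ** -1.5)

for step in (1, 1000, 4000, 16000, 100000):
    print(f"step {step:>6}: lr = {noam_learning_rate(step):.6f}")
```

The two arguments of `min` cross at `step == warmup_steps`, so the learning rate rises linearly to a peak of roughly 7e-4 with these defaults and then decays in proportion to the inverse square root of the step.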

A More Careful Take on "Reasoning"

It is tempting to say that systems such as ChatGPT can reason, code, tutor, and assist at a human-like level. A more rigorous formulation is that they became broadly useful across many cognitively demanding tasks, even though the nature and limits of their reasoning remain active research questions.

A Quiet Historical Irony

The original Transformer paper was written for machine translation, not for chatbots or general-purpose assistants. One of the most consequential architectures in the history of AI emerged from a problem that now looks narrower than the ecosystem it eventually created.

Logical Progression

- Attention gave sequence models selective access to relevant context.

- Transformers made sequence modeling parallel, scalable, and more effective at long-range dependency handling.

- ChatGPT made large language models accessible, interactive, and socially central.

Final Take-away

The period from 2014 to 2022 reveals a recurring pattern in AI progress:

- a bottleneck is identified,

- an architectural idea removes it,

- and a later system turns that capability into a widely usable technology.

In this trajectory:

- Attention solved the fixed-context bottleneck inside sequence models by learning soft alignments.

- The Transformer solved the scalability problem of recurrent computation.

- ChatGPT solved the accessibility problem for large language models.

The broader lesson is not merely that “hardware-friendly algorithms win,” though that is partly true. The stronger lesson is that modern AI advances when architectural ideas, optimization methods, and compute infrastructure reinforce one another.

Selected Primary Sources

- Bahdanau, Cho, Bengio, Neural Machine Translation by Jointly Learning to Align and Translate (2014/ICLR 2015)

- Luong, Pham, Manning, Effective Approaches to Attention-based Neural Machine Translation (EMNLP 2015)

- Vaswani et al., Attention Is All You Need (2017)

- Devlin et al., BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding (2018)

- Brown et al., Language Models are Few-Shot Learners (2020)

- Ouyang et al., Training language models to follow instructions with human feedback (2022)

- Dao et al., FlashAttention: Fast and Memory-Efficient Exact Attention with IO-Awareness (2022)

- OpenAI, OpenAI’s new economic analysis (2025, citing ChatGPT adoption figures)