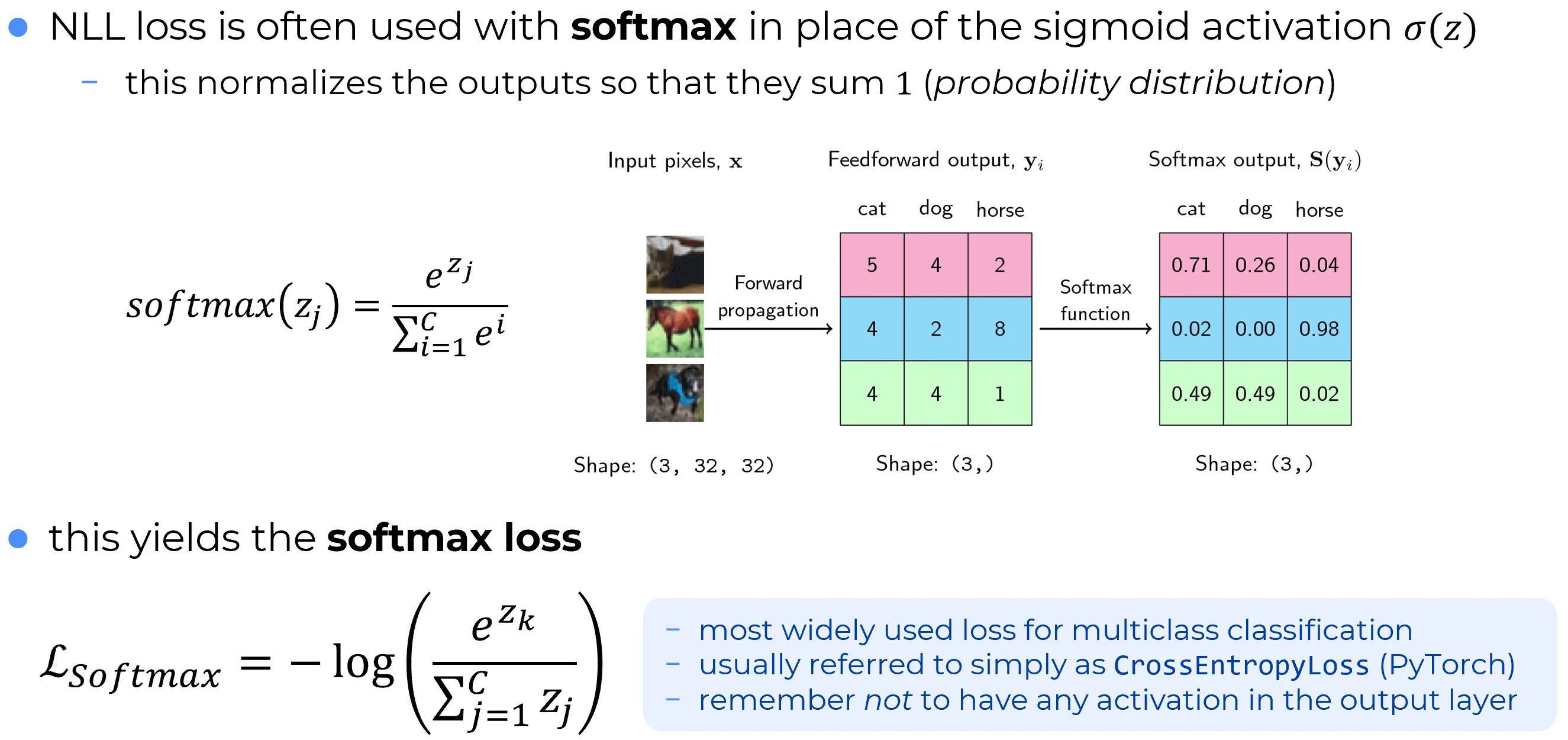

An alternative way to address the learning slowdown problem at the output layer is to replace independent sigmoid outputs with a softmax output layer.

The pre-activation (logit) $z_j^L$ of the $j$-th output neuron is still computed in the usual way:

$$z_j^L = \sum_k w_{jk}^L a_k^{L-1} + b_j^L.$$

What changes is the output activation. In a softmax layer, the activation $a_j^L$ of neuron $j$ is defined by

$$a_j^L = \frac{e^{z_j^L}}{\sum_k e^{z_k^L}}.$$

The denominator contains a sum over all output neurons. Consequently, each output depends not only on its own logit $z_j^L$, but also on all the others.
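The defining equation translates directly into code. The following is a minimal pure-Python sketch. The function name `softmax` is our own. Subtracting `max(z)` before exponentiating is a standard stabilization step added here; it does not change the result because the common factor cancels between numerator and denominator.

```python
import math

def softmax(z):
    """Return a_j = exp(z_j) / sum_k exp(z_k) for a list of logits z."""
    # Subtracting max(z) leaves the result unchanged (exp(-max(z)) cancels
    # between numerator and denominator) but keeps every exponent <= 0,
    # so math.exp cannot overflow.
    m = max(z)
    exps = [math.exp(zj - m) for zj in z]
    total = sum(exps)  # shared denominator: couples every output to every logit
    return [e / total for e in exps]

# Changing only the first logit changes every activation, not just the first.
print(softmax([1.0, 2.0, 3.0]))
print(softmax([2.0, 2.0, 3.0]))
```

Raising only the first logit in the second call changes all three activations. This is the coupling through the shared denominator.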

Consider a network with four output neurons, with $z_1^L$, $z_2^L$, $z_3^L$ held at fixed values and $z_4^L$ varying. The four softmax activations then respond to changes in $z_4^L$ as follows.

As $z_4^L$ increases, the corresponding activation $a_4^L$ increases, while the other activations decrease. The same phenomenon occurs for any other class: increasing one logit reallocates probability mass toward that class and away from the others.
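The experiment can be reproduced numerically. This sketch holds three logits at fixed values and sweeps the fourth. The values in `FIXED` and the sweep points are hypothetical choices for illustration, not the values behind the original plot.

```python
import math

def softmax(z):
    """Numerically stable softmax of a list of logits."""
    m = max(z)
    exps = [math.exp(zj - m) for zj in z]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical fixed logits for neurons 1-3 (illustrative only).
FIXED = [1.0, 0.5, -0.5]

def sweep(z4_values):
    """Return (z4, [a1, a2, a3, a4]) pairs as z4 varies with z1..z3 fixed."""
    return [(z4, softmax(FIXED + [z4])) for z4 in z4_values]

for z4, a in sweep([-2.0, 0.0, 2.0, 4.0]):
    print(f"z4={z4:+.1f}  " + "  ".join(f"a{j + 1}={aj:.3f}" for j, aj in enumerate(a)))
```

Each printed row sums to one. Down the rows, `a4` grows while `a1`, `a2` and `a3` shrink.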

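This behavior follows directly from the defining equation, because the activations always sum to one:

$$\sum_j a_j^L = \frac{\sum_j e^{z_j^L}}{\sum_k e^{z_k^L}} = 1.$$

Any increase in one activation must therefore be offset by a decrease in the others.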

Moreover, every activation is strictly positive because exponentials are strictly positive. Therefore, the output of a softmax layer is a vector of positive numbers whose sum is $1$, and it can be interpreted as a probability distribution over the classes.

Probabilistic Interpretation

In multiclass classification with mutually exclusive classes, it is natural to interpret $a_j^L$ as the model's estimate of

$$P(\text{class } j \mid x),$$

where $x$ is the network input.

For example, in digit recognition, $a_j^L$ may be interpreted as the estimated probability that the input image belongs to class $j$.

This interpretation is specific to the softmax output layer. A layer of independent sigmoids does not generally produce outputs that sum to $1$, and therefore does not define a probability distribution over mutually exclusive classes. That setting corresponds instead to multilabel classification with a per-class logistic loss, where each class is treated independently.
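A quick numerical contrast, using arbitrary example logits: applying an independent sigmoid to each logit gives outputs whose sum is unconstrained, while softmax always sums to one. The helper names `sigmoid` and `softmax` are our own.

```python
import math

def sigmoid(x):
    """Logistic function, branching on sign so math.exp never overflows."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def softmax(z):
    """Numerically stable softmax of a list of logits."""
    m = max(z)
    exps = [math.exp(zj - m) for zj in z]
    total = sum(exps)
    return [e / total for e in exps]

z = [2.0, 1.0, 0.5, -1.0]  # arbitrary example logits
sig = [sigmoid(zj) for zj in z]
soft = softmax(z)
print("sum of independent sigmoids:", sum(sig))
print("sum of softmax outputs:     ", sum(soft))
```

Each sigmoid output lies in $(0, 1)$ on its own, so their sum can be anywhere between $0$ and the number of classes. For these logits it is about 2.5.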

Why Softmax Produces a Probability Distribution

- The exponential in the numerator guarantees positivity.

- The shared denominator enforces normalization.

- The resulting output can therefore be interpreted as a categorical probability distribution.

Softmax Loss

When a softmax output layer is combined with a one-hot target vector $y$, the standard loss is the negative log-likelihood

$$C = -\sum_j y_j \ln a_j^L.$$

If the correct class is $c$, then $y_c = 1$ and all other components of $y$ vanish, so the loss becomes

$$C = -\ln a_c^L.$$

This quantity is often called the softmax loss. Equivalently,

$$C = -z_c^L + \ln \sum_k e^{z_k^L}.$$

This second form makes it clear that the loss compares the correct-class logit against the log-normalizer induced by all classes. In modern libraries, it is usually implemented directly from the logits for numerical stability.
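The two forms of the loss can be compared directly. In this sketch the helper names are hypothetical. `nll_from_probs` forms the probability first and then takes its logarithm. `nll_from_logits` uses the log-sum-exp form with the maximum factored out, which is one common way to obtain the numerical stability mentioned above. It is not a claim about how any specific library implements the loss.

```python
import math

def log_sum_exp(z):
    """Compute ln(sum_k exp(z_k)) stably by factoring out max(z)."""
    m = max(z)
    return m + math.log(sum(math.exp(zk - m) for zk in z))

def nll_from_probs(z, c):
    """C = -ln a_c, computed by first forming the softmax probability a_c."""
    m = max(z)
    exps = [math.exp(zk - m) for zk in z]
    return -math.log(exps[c] / sum(exps))

def nll_from_logits(z, c):
    """C = -z_c + ln sum_k exp(z_k), computed directly from the logits."""
    return -z[c] + log_sum_exp(z)

z = [2.0, -1.0, 0.5]
print(nll_from_probs(z, 0), nll_from_logits(z, 0))  # the two forms agree

# Extreme logit gap: only the logit form is safe here (see note below).
print(nll_from_logits([0.0, 800.0], 0))
```

For ordinary inputs both forms agree. With logits `[0.0, 800.0]` and correct class 0, the probability $a_c$ underflows to `0.0`, so `nll_from_probs` would raise `ValueError` from `math.log`. In contrast, `nll_from_logits` returns `800.0`.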

Backpropagation with Softmax + Negative Log-Likelihood

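Let

$$\delta_j^L \equiv \frac{\partial C}{\partial z_j^L}$$

denote the error at the $j$-th output neuron. Then

$$\delta_j^L = a_j^L - y_j.$$

This result follows from the chain rule together with the derivative of the softmax:

$$\frac{\partial a_i^L}{\partial z_j^L} = a_i^L\left(\mathbb{1}[i = j] - a_j^L\right),$$

where $\mathbb{1}[i = j]$ equals $1$ if $i = j$ and $0$ otherwise. Indeed,

$$\frac{\partial C}{\partial z_j^L} = \sum_i \frac{\partial C}{\partial a_i^L}\,\frac{\partial a_i^L}{\partial z_j^L} = -\sum_i \frac{y_i}{a_i^L}\, a_i^L\left(\mathbb{1}[i = j] - a_j^L\right),$$

and therefore

$$\frac{\partial C}{\partial z_j^L} = -y_j + a_j^L \sum_i y_i.$$

Since $\sum_i y_i = 1$ for a one-hot target, it follows that

$$\delta_j^L = a_j^L - y_j.$$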

The expression $\delta_j^L = a_j^L - y_j$ is the key reason why softmax paired with negative log-likelihood avoids the main training pathology associated with saturated output activations. The derivative of the loss with respect to the logits reduces to a simple prediction-minus-target term, with no additional small multiplicative factor analogous to the sigmoid derivative at the output. Once this term is obtained, the rest of backpropagation proceeds exactly as usual. For a fuller discussion of the softmax Jacobian and its structural properties, see Softmax properties.
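The result $\delta_j^L = a_j^L - y_j$ can be checked against central finite differences. This sketch uses hypothetical logits and a one-hot target. It also evaluates a confidently wrong prediction to show that the gradient on the correct logit stays close to $-1$ instead of vanishing.

```python
import math

def softmax(z):
    """Numerically stable softmax of a list of logits."""
    m = max(z)
    exps = [math.exp(zj - m) for zj in z]
    total = sum(exps)
    return [e / total for e in exps]

def loss(z, y):
    """Negative log-likelihood C = -sum_j y_j ln a_j for a one-hot target y."""
    a = softmax(z)
    # Skip zero-target terms so 0 * ln(a) is never evaluated.
    return -sum(yj * math.log(aj) for yj, aj in zip(y, a) if yj)

def analytic_grad(z, y):
    """delta_j = a_j - y_j, the prediction-minus-target gradient."""
    return [aj - yj for aj, yj in zip(softmax(z), y)]

def numeric_grad(z, y, h=1e-6):
    """Central finite differences of the loss with respect to each logit."""
    g = []
    for j in range(len(z)):
        zp = list(z)
        zm = list(z)
        zp[j] += h
        zm[j] -= h
        g.append((loss(zp, y) - loss(zm, y)) / (2 * h))
    return g

z = [1.5, -0.3, 0.8, 2.1]
y = [0, 0, 1, 0]  # correct class is index 2
print(analytic_grad(z, y))
print(numeric_grad(z, y))

# Confidently wrong prediction: the gradient on the correct logit stays near -1.
z_wrong = [8.0, 0.0, 0.0]
y_wrong = [0, 1, 0]
print(analytic_grad(z_wrong, y_wrong))
```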

Logits Versus Probabilities at Evaluation Time

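Softmax maps logits from $\mathbb{R}$ into probabilities in $(0, 1)$. This is appropriate when a normalized probabilistic interpretation is required, but it also compresses score differences, especially for large logits, where the probabilities saturate near $0$ or $1$.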

For this reason, ranking-based evaluation metrics are often computed from the logits rather than from the post-softmax probabilities:

- logits are often preferable for ROC curves, AUC, and precision-recall analysis;

- softmax probabilities are preferable when a normalized class distribution is needed.

In practical implementations, this distinction is important:

- during training, frameworks usually accept logits and apply the softmax or log-softmax internally for numerical stability;

- during evaluation, the choice between logits and probabilities depends on the metric and on the desired interpretation.

Note

In libraries such as PyTorch, CrossEntropyLoss expects logits, not already-normalized probabilities. Mathematically, this still corresponds to softmax followed by negative log-likelihood; the softmax is simply handled inside the loss function in a numerically stable way.
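The sketch below shows why passing probabilities where logits are expected is a mistake. It uses a hypothetical pure-Python stand-in, `cross_entropy_from_logits`, which computes the per-example quantity $-z_c + \ln \sum_k e^{z_k}$. This is not PyTorch code. Feeding it softmax outputs applies softmax twice.

```python
import math

def cross_entropy_from_logits(z, c):
    """-z_c + ln sum_k exp(z_k) for logits z and correct class c.
    Hypothetical stand-in written for illustration; not PyTorch code."""
    m = max(z)
    return -z[c] + m + math.log(sum(math.exp(zk - m) for zk in z))

def softmax(z):
    """Numerically stable softmax of a list of logits."""
    m = max(z)
    exps = [math.exp(zk - m) for zk in z]
    total = sum(exps)
    return [e / total for e in exps]

logits = [4.0, 0.0, -2.0]
probs = softmax(logits)

correct = cross_entropy_from_logits(logits, 0)   # intended usage: raw logits
mistaken = cross_entropy_from_logits(probs, 0)   # probabilities passed by mistake
print(correct, mistaken)
```

Every softmax output lies in $(0, 1)$, so the mistaken loss stays bounded away from zero. For three classes it stays above roughly 0.55, even for a perfectly confident correct prediction. The correctly used loss is about 0.02 here.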

Compressed Score Dynamics: Practical Consequences

Softmax converts arbitrary logits into normalized probabilities, but this normalization also compresses the score scale. As a consequence, different classes may appear more tightly clustered after softmax than they were at the logit level.

This distinction matters in practice:

- during training, softmax plus negative log-likelihood is appropriate because it defines a probabilistic loss;

- during evaluation, logits are often preferable for ranking-based analyses because they preserve a wider score dynamic range.

In ROC or precision-recall analysis, using post-softmax probabilities can sometimes produce a score distribution with less numerical spread than the corresponding logits. In such cases, the resulting curve may look less resolved than the one obtained from raw logits. This is not a universal rule, but it is a common practical reason to retain logits for evaluation while still using softmax-based losses during training.
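One concrete mechanism behind this is floating-point saturation, sketched here as an illustration. Softmax probabilities for large logit gaps round to exactly 1.0, so distinct logits become tied scores. The example uses a two-class case with hypothetical logit margins. The Mann-Whitney AUC function `auc` is written for illustration and is not a library function.

```python
import math

def positive_class_prob(margin):
    """Softmax probability of class 0 for two logits [margin, 0.0], computed stably."""
    z = [margin, 0.0]
    m = max(z)
    exps = [math.exp(zk - m) for zk in z]
    return exps[0] / sum(exps)

def auc(pos_scores, neg_scores):
    """Mann-Whitney estimate of ROC AUC: fraction of (pos, neg) pairs ranked
    correctly, with ties counted as one half."""
    total = 0.0
    for p in pos_scores:
        for n in neg_scores:
            total += 1.0 if p > n else 0.5 if p == n else 0.0
    return total / (len(pos_scores) * len(neg_scores))

# Hypothetical logit margins for positive and negative examples.
pos_margins = [50.0, 42.0]
neg_margins = [40.0, 10.0]

pos_probs = [positive_class_prob(margin) for margin in pos_margins]
neg_probs = [positive_class_prob(margin) for margin in neg_margins]

print("probabilities:", pos_probs, neg_probs)
print("AUC from logits:       ", auc(pos_margins, neg_margins))
print("AUC from probabilities:", auc(pos_probs, neg_probs))
```

The logits rank every positive above every negative, giving an AUC of 1.0. However, three of the four probabilities round to 1.0 in double precision, and the tied pairs pull the AUC down to 0.75. In lower precision such as float32, this saturation sets in at smaller logit gaps.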