1. Intro

Definition

Cosine Annealing is a learning-rate scheduling policy in which the learning rate is decreased along a cosine-shaped curve. Unlike step decay, which lowers the learning rate abruptly at fixed milestones, Cosine Annealing reduces the learning rate smoothly and continuously.

Intuition behind the name

The terminology is inspired by annealing in metallurgy, where a material is cooled gradually so that it can settle into a lower-energy and more stable configuration. The analogy in optimization is the following:

- training begins with a relatively large learning rate, favoring exploration,

- the learning rate is then reduced progressively,

- the late phase of training emphasizes stabilization and fine-grained convergence.

This schedule is widely used because it provides a simple but effective way to transition from coarse exploration to controlled exploitation without introducing discontinuities in the optimization dynamics.

Primary source

The restart-based cosine schedule is associated with: Loshchilov, Ilya, and Frank Hutter. SGDR: Stochastic Gradient Descent with Warm Restarts. ICLR, 2017.

2. Mechanism: cosine decay

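Consider a single cosine-decay cycle. The learning rate starts at a maximum value $\eta_{\max}$ and decreases smoothly toward a minimum value $\eta_{\min}$.

The standard closed-form schedule is

$$
\eta_t = \eta_{\min} + \frac{1}{2}\left(\eta_{\max} - \eta_{\min}\right)\left(1 + \cos\left(\frac{T_{cur}}{T_{\max}}\pi\right)\right).
$$

Here:

- $\eta_{\max}$ is the maximum learning rate at the beginning of the cycle,

- $\eta_{\min}$ is the minimum learning rate reached at the end of the cycle,

- $T_{cur}$ is the current position inside the cycle,

- $T_{\max}$ is the total length of the cosine decay cycle.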

Meaning of $T_{cur}$ and $T_{\max}$

Mathematically, $T_{cur}$ and $T_{\max}$ should be understood in scheduler time units, that is, as counts of scheduler steps. They are often described informally as “epochs”, but that is only one possible usage pattern. If `scheduler.step()` is called once per epoch, then they count epochs. If `scheduler.step()` is called once per iteration, then they count iterations.

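This schedule is a half-cosine:

- at the beginning of the cycle, $T_{cur} = 0$, so $\cos(0) = 1$ and $\eta_t = \eta_{\max}$;

- at the end of the cycle, $T_{cur} = T_{\max}$, so $\cos(\pi) = -1$ and $\eta_t = \eta_{\min}$.

Therefore,

$$
\eta_{\min} \le \eta_t \le \eta_{\max} \quad \text{for } 0 \le T_{cur} \le T_{\max}.
$$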
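The closed-form schedule translates directly into a few lines of Python. The sketch below is a minimal illustration under our own naming (the helper `cosine_lr` and its arguments are hypothetical, not a library API); it evaluates the formula at the start, quarter points, middle and end of a 100-step cycle.

```python
import math


def cosine_lr(t_cur: float, t_max: float, eta_max: float, eta_min: float = 0.0) -> float:
    """Closed-form half-cosine schedule for a single cycle (hypothetical helper)."""
    if not 0 <= t_cur <= t_max:
        raise ValueError("t_cur must lie in [0, t_max] for a single cycle")
    # eta_min + 1/2 (eta_max - eta_min) (1 + cos(pi * T_cur / T_max))
    return eta_min + 0.5 * (eta_max - eta_min) * (1.0 + math.cos(math.pi * t_cur / t_max))


if __name__ == "__main__":
    # Start, quarter points, middle and end of a 100-step cycle.
    for t in (0, 25, 50, 75, 100):
        print(t, round(cosine_lr(t, 100, eta_max=0.1, eta_min=0.01), 5))
```

At the midpoint $\cos(\pi/2) = 0$, so the learning rate equals the average $(\eta_{\max} + \eta_{\min})/2$, here 0.055.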

Info

The shape of the decay is nonlinear:

- slow decrease at the beginning,

- faster decrease in the middle,

- slow decrease again near the end.

This is one of the defining practical features of cosine annealing.

Why the cosine shape is interesting

A linear decay reduces the learning rate at a constant rate. Cosine annealing instead reduces it gently at the beginning and at the end. This often gives a more natural transition between:

- a high-learning-rate exploratory regime,

- a low-learning-rate exploitation regime.

Why the decrease is slow-fast-slow

The shape can also be understood analytically. Differentiating the closed-form schedule with respect to scheduler time gives

$$
\frac{d\eta_t}{dT_{cur}} = -\frac{\pi}{2T_{\max}}\left(\eta_{\max} - \eta_{\min}\right)\sin\left(\frac{T_{cur}}{T_{\max}}\pi\right).
$$

Since the sine term is:

- close to $0$ near the beginning of the cycle,

- largest in magnitude near the middle,

- close to $0$ again near the end,

the decay is correspondingly:

- slow at the beginning,

- fastest in the middle,

- slow again near the end.
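The slow-fast-slow pattern can be checked numerically. The sketch below (helper names are hypothetical) implements the analytic derivative, compares it with a finite difference, and computes the per-step decrease of the schedule over a 100-step cycle; the largest decrease should appear around the middle of the cycle, while the first and last steps change the learning rate only slightly.

```python
import math


def cosine_lr(t_cur, t_max, eta_max, eta_min=0.0):
    # Closed-form half-cosine schedule.
    return eta_min + 0.5 * (eta_max - eta_min) * (1.0 + math.cos(math.pi * t_cur / t_max))


def cosine_lr_slope(t_cur, t_max, eta_max, eta_min=0.0):
    # Analytic derivative d(eta_t) / d(T_cur) of the closed-form schedule.
    return -(math.pi / (2.0 * t_max)) * (eta_max - eta_min) * math.sin(math.pi * t_cur / t_max)


def per_step_drops(t_max, eta_max, eta_min=0.0):
    # Discrete decrease eta_t - eta_{t+1} for every integer step of the cycle.
    return [
        cosine_lr(t, t_max, eta_max, eta_min) - cosine_lr(t + 1, t_max, eta_max, eta_min)
        for t in range(t_max)
    ]


if __name__ == "__main__":
    drops = per_step_drops(100, 0.1, 0.01)
    print("first drop :", drops[0])
    print("middle drop:", drops[50])
    print("last drop  :", drops[-1])
```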

3. Why smooth decay can be useful

3.1 Smooth transition from exploration to exploitation

A major attraction of Cosine Annealing is its smoothness. Abrupt learning-rate drops can produce sudden changes in optimization behavior. A cosine schedule avoids this by modifying the step size continuously.

Compared with piecewise schedules such as step decay, this often yields:

- less abrupt changes in training dynamics,

- a longer high-learning-rate phase,

- a gentler late-stage exploitation phase.

| Policy | Typical characteristic | Practical implication |
|---|---|---|
| Step Decay | The learning rate drops abruptly at predetermined milestones. | Optimization dynamics can change suddenly, and the transition into the low-learning-rate regime may occur earlier or more aggressively than desired. |
| Cosine Annealing | The learning rate decreases smoothly over the entire cycle. | The transition from exploration to exploitation is more gradual, often leading to more stable optimization behavior. |
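To make the contrast concrete, the sketch below compares a hypothetical step-decay schedule (learning rate multiplied by 0.1 at epochs 50 and 75, a common but arbitrary choice) with a cosine schedule over the same 100 epochs, and reports the largest drop between two consecutive epochs for each. The milestones, factor and helper names are illustrative assumptions.

```python
import math


def step_decay_lr(epoch, base_lr=0.1, milestones=(50, 75), gamma=0.1):
    # Piecewise-constant schedule: multiply by gamma once per milestone reached.
    passed = sum(1 for m in milestones if epoch >= m)
    return base_lr * gamma ** passed


def cosine_lr(epoch, t_max=100, eta_max=0.1, eta_min=0.0):
    # Single half-cosine cycle over t_max epochs.
    return eta_min + 0.5 * (eta_max - eta_min) * (1.0 + math.cos(math.pi * epoch / t_max))


def largest_drop(schedule, num_epochs=100):
    # Largest decrease of the learning rate between two consecutive epochs.
    lrs = [schedule(e) for e in range(num_epochs + 1)]
    return max(a - b for a, b in zip(lrs, lrs[1:]))


if __name__ == "__main__":
    print("step decay  :", largest_drop(step_decay_lr))
    print("cosine decay:", largest_drop(cosine_lr))
```

The step schedule concentrates most of its decrease into a single epoch, whereas the cosine schedule spreads it over the whole run.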

Careful interpretation

This does not mean that cosine annealing is universally superior. It means that the schedule provides a different trade-off:

- step-based schedules impose explicit regime changes,

- cosine annealing creates a continuous transition between regimes.

3.2 Stochastic-noise viewpoint

Cosine Annealing can also be interpreted from the viewpoint of stochastic optimization dynamics.

In mini-batch methods such as SGD, the learning rate influences the scale of the effective stochasticity induced by batch sampling:

- larger learning rates amplify stochastic exploration,

- smaller learning rates reduce that stochasticity and promote stabilization.

Under this lens:

- the early part of the cosine schedule supports broader exploration,

- the later part progressively reduces the effective noise level,

- the optimizer is gradually guided toward a more stable local region.
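A toy simulation can illustrate this heuristic. The sketch below runs many independent SGD chains on the one-dimensional quadratic $f(x) = x^2/2$ with artificial Gaussian gradient noise as a stand-in for mini-batch noise (the noise model, the problem and all constants are assumptions chosen for illustration), and compares the spread of the final iterates under a constant learning rate and under a cosine-annealed one.

```python
import math
import random
import statistics


def run_chains(lr_at, num_steps=200, num_chains=500, noise_std=1.0, seed=0):
    """Run independent noisy-SGD chains on f(x) = x^2 / 2 and return the final iterates."""
    rng = random.Random(seed)
    finals = []
    for _ in range(num_chains):
        x = 1.0
        for t in range(num_steps):
            grad = x + noise_std * rng.gauss(0.0, 1.0)  # true gradient x plus toy noise
            x -= lr_at(t) * grad
        finals.append(x)
    return finals


def constant_lr(t):
    return 0.1


def cosine_lr(t, t_max=200, eta_max=0.1, eta_min=0.001):
    return eta_min + 0.5 * (eta_max - eta_min) * (1.0 + math.cos(math.pi * t / t_max))


if __name__ == "__main__":
    spread_const = statistics.pstdev(run_chains(constant_lr))
    spread_cos = statistics.pstdev(run_chains(cosine_lr))
    print(f"std of final x, constant lr: {spread_const:.3f}")
    print(f"std of final x, cosine lr  : {spread_cos:.3f}")
```

In real networks the gradient noise is neither Gaussian nor isotropic, so this only illustrates the direction of the effect, not its size.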

Implicit regularization viewpoint

Cosine Annealing may therefore be viewed not only as a learning-rate decay mechanism, but also as a way of modulating the stochastic dynamics of training. This is often connected heuristically to the idea that higher-noise phases encourage exploration of broader basins, while lower-noise phases favor fine convergence.

3.3 Connection with flat minima

This interpretation is often discussed together with the flat-minima hypothesis: solutions lying in wider, lower-curvature regions of the loss landscape are often associated with better generalization.

Note

This connection should be understood as an interpretive heuristic, not as a universally established theorem. Cosine Annealing does not guarantee convergence to a flat minimum. It simply shapes the optimization trajectory in a way that is often regarded as compatible with that hypothesis.

4. Limitation of a single cosine cycle

Despite its advantages, a single cosine-decay cycle still performs only one exploration-to-exploitation trajectory.

Limitation of a single cycle

A single cosine decay may still end in a region that is not the most desirable one reachable within the available compute budget. In nonconvex optimization, the final point may correspond to:

- a mediocre local basin,

- a plateau-like region,

- or a region whose generalization properties are inferior to other reachable solutions.

This motivates the restart-based extension.

5. Cosine annealing with restarts

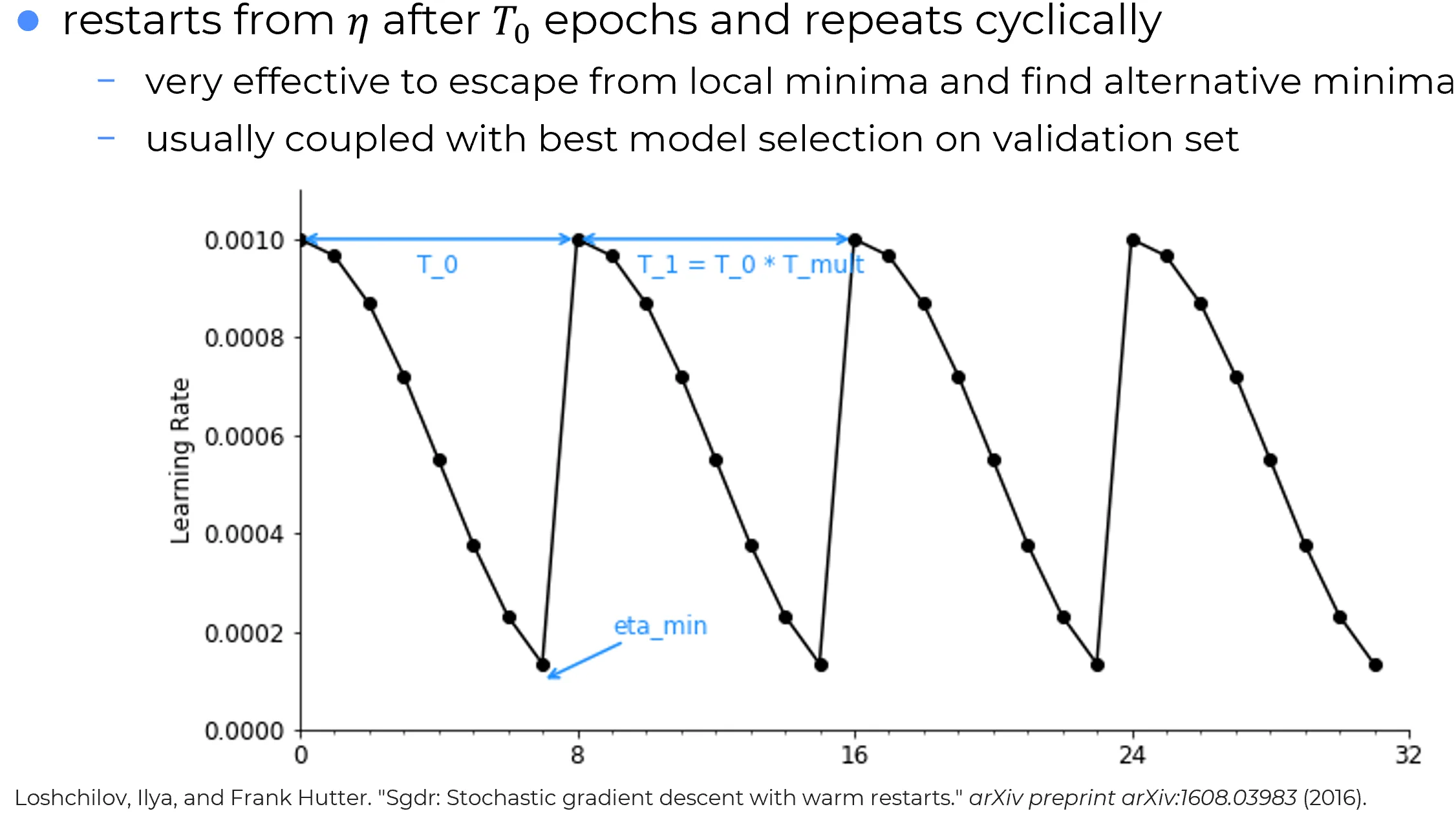

The restart-based variant is commonly known as SGDR: Stochastic Gradient Descent with Warm Restarts.

Underlying idea

The central idea is simple: after one cosine cycle ends, the learning rate is reset to a large value and a new cosine cycle begins.

Why they are called warm restarts

The word warm indicates that the optimizer is not reinitialized from scratch. Typically:

- the model parameters are kept,

- the optimizer state is kept,

- only the learning-rate schedule is reset to a high value.

Thus, the training process does not start over from a cold initialization; it resumes from an already trained state but with renewed exploratory step sizes.

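Within the current cycle, the schedule is

$$
\eta_t = \eta_{\min} + \frac{1}{2}\left(\eta_{\max} - \eta_{\min}\right)\left(1 + \cos\left(\frac{T_{cur}}{T_i}\pi\right)\right),
$$

where:

- $T_i$ is the length of the current cycle,

- $T_{cur}$ is the time elapsed since the last restart.

Thus:

- when $T_{cur} = 0$, the cycle starts at $\eta_t = \eta_{\max}$,

- when $T_{cur} = T_i$, the cycle reaches $\eta_t = \eta_{\min}$.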
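For equal-length cycles, the restart rule amounts to wrapping $T_{cur}$ back to zero every $T_i$ steps. The sketch below (hypothetical helper `sgdr_lr`, constant cycle length) computes the schedule over three cycles of 10 steps and detects restarts as the steps where the learning rate goes up.

```python
import math


def sgdr_lr(step, period, eta_max, eta_min=0.0):
    """Cosine annealing with warm restarts, all cycles of equal length `period`.

    T_cur counts steps since the last restart and wraps to 0 every `period` steps,
    which is what makes the learning rate jump back to eta_max. With integer steps,
    eta_min is approached but never evaluated exactly: the step at which T_cur would
    equal `period` is already the first step of the next cycle.
    """
    t_cur = step % period
    return eta_min + 0.5 * (eta_max - eta_min) * (1.0 + math.cos(math.pi * t_cur / period))


if __name__ == "__main__":
    lrs = [sgdr_lr(s, period=10, eta_max=0.1, eta_min=0.01) for s in range(30)]
    # Restarts are the steps where the learning rate increases.
    restarts = [s for s in range(1, 30) if lrs[s] > lrs[s - 1]]
    print("restart steps:", restarts)
```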

Effect of restarts

A restart reintroduces a high-learning-rate exploratory phase. This allows the optimizer to leave the neighborhood reached at the end of the previous cycle and to explore a different trajectory through the loss landscape.

5.1 Variable-length cycles

The restart schedule can keep all cycles equally long, or make later cycles longer.

If the cycle lengths are

$$
T_0,\ T_1,\ T_2,\ \ldots
$$

then one common design is:

- first cycles shorter, to encourage repeated exploration,

- later cycles longer, to allow more extensive exploitation once promising regions have been found.

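This is the role of the `T_mult` parameter in PyTorch: after each restart, the cycle length is multiplied by `T_mult`, so that $T_i = T_0 \, T_{\text{mult}}^{\,i}$.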

Info

Increasing cycle lengths reflect a natural intuition:

- early in training, frequent restarts encourage exploration,

- later in training, longer cycles devote more compute to exploitation of already promising regions.
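With geometrically growing cycles, the restart points are the cumulative sums of the cycle lengths. The following sketch, with hypothetical helper names, lists the cycle lengths and restart points for `T_0` and `T_mult`, and maps a global scheduler step to its cycle index, its $T_{cur}$ and the current $T_i$.

```python
def cycle_lengths(t_0, t_mult, num_cycles):
    # T_i = T_0 * T_mult**i for the i-th cycle (0-based).
    return [t_0 * t_mult ** i for i in range(num_cycles)]


def restart_steps(t_0, t_mult, num_cycles):
    # Cumulative sums of the cycle lengths: the steps at which each cycle ends.
    steps, total = [], 0
    for length in cycle_lengths(t_0, t_mult, num_cycles):
        total += length
        steps.append(total)
    return steps


def locate(step, t_0, t_mult):
    """Return (cycle index i, T_cur, T_i) for a global scheduler step."""
    if t_0 <= 0 or t_mult < 1:
        raise ValueError("expected t_0 > 0 and t_mult >= 1")
    i, start, length = 0, 0, t_0
    while step >= start + length:
        start += length
        length *= t_mult
        i += 1
    return i, step - start, length


if __name__ == "__main__":
    print(cycle_lengths(20, 2, 3))   # [20, 40, 80]
    print(restart_steps(20, 2, 3))   # [20, 60, 140]
    print(locate(75, 20, 2))         # (2, 15, 80)
```

For `T_0 = 20` and `T_mult = 2`, a 140-step run therefore covers exactly three cycles.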

6. Practical benefits of restarts

Practical benefits

Cosine Annealing with Restarts combines:

- the smoothness of cosine decay,

- the exploratory benefit of repeated high-learning-rate phases,

- the possibility of revisiting different parts of parameter space over multiple cycles.

Empirically, this can be useful because:

- the optimizer is not restricted to a single decay trajectory,

- restarts can help escape the neighborhood reached at the end of a previous cycle,

- several candidate solutions may be produced over the course of training.

6.1 Model selection across cycles

A practical consequence of restart-based training is that each cycle may produce a distinct candidate model.

Best-model selection with restarts

Since each cycle ends with a low-learning-rate exploitation phase, one practical strategy is:

- Save the model near the end of each cycle.

- Evaluate the saved checkpoints on a validation set.

- Select the checkpoint with the best validation performance.

This can be useful because different cycles may converge to different regions of the loss landscape.
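The checkpointing strategy above can be sketched as follows. All names are hypothetical: `model_state` stands in for `model.state_dict()`, the per-epoch update stands in for real training, and the `evaluate` callable stands in for a validation pass. The helper `cycle_end_epochs` assumes the scheduler is stepped once per epoch with the given `T_0` and `T_mult`.

```python
import copy


def cycle_end_epochs(t_0, t_mult, num_epochs):
    """0-based epochs that are the last epoch of a completed cosine cycle."""
    ends, boundary, length = [], t_0, t_0
    while boundary <= num_epochs:
        ends.append(boundary - 1)  # last epoch before the restart
        length *= t_mult
        boundary += length
    return ends


def select_best_checkpoint(checkpoints, evaluate):
    """Return (epoch, state, score) of the checkpoint with the lowest validation loss.

    `evaluate` must use a validation set, never the test set.
    """
    scores = {epoch: evaluate(state) for epoch, state in checkpoints.items()}
    best_epoch = min(scores, key=scores.get)
    return best_epoch, checkpoints[best_epoch], scores[best_epoch]


if __name__ == "__main__":
    num_epochs, t_0, t_mult = 140, 20, 2
    save_at = set(cycle_end_epochs(t_0, t_mult, num_epochs))
    model_state = {"w": 0.0}              # stand-in for model.state_dict()
    checkpoints = {}
    for epoch in range(num_epochs):
        model_state["w"] += 0.05          # stand-in for one epoch of training
        if epoch in save_at:
            checkpoints[epoch] = copy.deepcopy(model_state)
    # Toy validation loss, for illustration only.
    best_epoch, _, _ = select_best_checkpoint(checkpoints, lambda s: (s["w"] - 3.0) ** 2)
    print(sorted(checkpoints), best_epoch)
```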

Methodological caution

Model selection should be performed on a validation set, not on the test set. The test set should be reserved for final evaluation only.

6.2 Broader exploration of the loss landscape

Broader exploration

Compared with a single monotone decay schedule, restart-based cosine annealing explores the training dynamics through multiple cycles rather than one single trajectory. This does not guarantee a globally better solution, but it may increase the chance of finding a better-performing region within the available compute budget.

6.3 Computational cost

Practical limitation

Cosine Annealing with Restarts can be computationally expensive. If one cycle already requires many epochs or iterations to produce a well-refined solution, multiple cycles may become prohibitively costly. In practice, this often requires:

- careful budget allocation,

- checkpointing,

- and sometimes early stopping or validation-based truncation.
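Budget allocation with growing cycles is simple arithmetic: with $T_{\text{mult}} > 1$, the first $n$ cycles take $T_0 (T_{\text{mult}}^{\,n} - 1)/(T_{\text{mult}} - 1)$ steps in total. The sketch below (hypothetical helpers) computes how many complete cycles fit into a budget and how many steps would be left over.

```python
def complete_cycles(budget, t_0, t_mult):
    """Number of full cosine cycles that fit in `budget` steps, plus the leftover steps."""
    if t_0 <= 0 or t_mult < 1:
        raise ValueError("expected t_0 > 0 and t_mult >= 1")
    used, length, n = 0, t_0, 0
    while used + length <= budget:
        used += length
        length *= t_mult
        n += 1
    return n, budget - used


def budget_for_cycles(num_cycles, t_0, t_mult):
    # Total steps for num_cycles full cycles: a geometric series in t_mult.
    if t_mult == 1:
        return num_cycles * t_0
    return t_0 * (t_mult ** num_cycles - 1) // (t_mult - 1)


if __name__ == "__main__":
    print(complete_cycles(150, 20, 2))
    print(budget_for_cycles(3, 20, 2), budget_for_cycles(4, 20, 2))
```

With `T_0 = 20` and `T_mult = 2`, a 150-epoch budget completes three cycles and leaves 10 epochs at the start of a fourth, where the learning rate has just been reset to a high value; budgets of 140 or 300 epochs end exactly at the end of a cycle.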

7. Terminological precision: SGDR and PyTorch

This point deserves special care, because the terminology is often used loosely.

The original SGDR paper introduced cosine annealing together with warm restarts.

PyTorch, however, exposes two different classes:

- `CosineAnnealingLR`

- `CosineAnnealingWarmRestarts`

These correspond to two different operational choices:

- a single monotone cosine decay without restarts,

- a cyclic cosine schedule with warm restarts.

Precise naming

The most accurate way to describe the PyTorch schedulers is:

- `CosineAnnealingWarmRestarts` is the restart-based, SGDR-style scheduler;

- `CosineAnnealingLR` is the no-restart cosine scheduler commonly used in practice.

8. PyTorch implementation details

In PyTorch, both schedulers live in torch.optim.lr_scheduler.

Call order

As stated in the PyTorch documentation, `scheduler.step()` should be called after `optimizer.step()`.

8.1 Important unit-of-time detail

A common source of confusion is whether the scheduler should be stepped per epoch or per batch.

The correct answer is:

- if `step()` is called once per epoch, then scheduler time is measured in epochs;

- if `step()` is called once per iteration, then scheduler time is measured in iterations.

Therefore, the schedule does not become “too fast” merely because it is stepped per batch. It becomes too fast only if:

- `step()` is called more frequently,

- but $T_{\max}$, $T_0$, or related parameters are still chosen as if they counted epochs.

Note

The scheduler is correct in either regime. What matters is that the time scale of `step()` and the time scale encoded in $T_{\max}$ or $T_0$ are consistent with each other.
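The arithmetic behind a mismatch is easy to check. The sketch below assumes 100 epochs of 500 mini-batches each with per-batch stepping (illustrative numbers), and compares a consistent `T_max` counted in iterations with a mismatched one chosen as if it counted epochs; `cosine_lr` is a hypothetical helper implementing the closed form.

```python
import math


def cosine_lr(t_cur, t_max, eta_max=0.1, eta_min=0.0):
    # Closed-form cosine schedule; t_cur and t_max are both in scheduler steps.
    return eta_min + 0.5 * (eta_max - eta_min) * (1.0 + math.cos(math.pi * t_cur / t_max))


epochs, batches_per_epoch = 100, 500
total_steps = epochs * batches_per_epoch   # scheduler.step() once per batch

t_max_consistent = total_steps             # T_max counted in iterations: 50_000
t_max_mismatched = epochs                  # T_max chosen as if it counted epochs

# Fraction of the training run covered by one cosine cycle.
print(t_max_consistent / total_steps)      # 1.0
print(t_max_mismatched / total_steps)      # 0.002

# Learning rate after the first 100 batches (one fifth of the first epoch).
print(cosine_lr(100, t_max_consistent), cosine_lr(100, t_max_mismatched))
```

With the mismatched setting, the entire cosine cycle is compressed into the first 100 batches.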

Common implementation mistakes

The most frequent practical mistakes are:

- calling `scheduler.step()` before `optimizer.step()`,

- stepping the scheduler once per batch while choosing $T_{\max}$ or $T_0$ as if they counted epochs,

- expecting `CosineAnnealingLR` to perform restarts automatically,

- using restart cycles to select a model on the test set instead of the validation set.
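`CosineAnnealingLR` does not restart. The sketch below steps it past `T_max` on a dummy model; in the PyTorch versions we are familiar with, the learning rate reaches `eta_min` at step `T_max` and then climbs back toward the initial value along the continuation of the cosine, rather than jumping back at once. Treat the exact post-`T_max` behavior as version-dependent and check it against your installed release.

```python
import torch
from torch.optim import SGD
from torch.optim.lr_scheduler import CosineAnnealingLR

model = torch.nn.Linear(4, 1)                    # dummy model
optimizer = SGD(model.parameters(), lr=0.1)      # eta_max = 0.1
scheduler = CosineAnnealingLR(optimizer, T_max=10, eta_min=0.01)

lrs = [optimizer.param_groups[0]["lr"]]
for _ in range(20):        # deliberately step twice as far as T_max
    optimizer.step()       # no gradients here, so the parameters are left unchanged
    scheduler.step()
    lrs.append(optimizer.param_groups[0]["lr"])

print([round(lr, 4) for lr in lrs])
```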

9. PyTorch: CosineAnnealingLR

9.1 What it implements

CosineAnnealingLR implements the standard cosine annealing schedule without restarts.

In PyTorch, the maximum learning rate is implicitly given by the optimizer’s initial learning rate, i.e. by the corresponding base_lr.

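The PyTorch documentation states that the learning rate is updated recursively as

$$
\eta_{t+1} = \eta_{\min} + \left(\eta_t - \eta_{\min}\right)\frac{1 + \cos\left(\frac{(T_{cur}+1)\pi}{T_{\max}}\right)}{1 + \cos\left(\frac{T_{cur}\pi}{T_{\max}}\right)}.
$$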

This is the recursive implementation used internally.

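PyTorch also states that this recursively implements the closed-form schedule proposed in SGDR:

$$
\eta_t = \eta_{\min} + \frac{1}{2}\left(\eta_{\max} - \eta_{\min}\right)\left(1 + \cos\left(\frac{T_{cur}\pi}{T_{\max}}\right)\right),
$$

where:

- $\eta_t$ is the learning rate at step $t$,

- $T_{cur}$ is the number of scheduler steps since the start of the schedule,

- $T_{\max}$ is the maximum number of scheduler steps in the cycle.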

Closed-form vs. recursive PyTorch update

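Two formulas appear in the PyTorch documentation because they play different roles:

- the closed-form expression, which gives $\eta_t$ directly as a function of $T_{cur}$, is the conceptual target schedule;

- the recursive expression, which computes $\eta_{t+1}$ from the current value $\eta_t$, is the stateful update rule used by the implementation.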

The practical interpretation is:

- the closed form tells what schedule is being approximated,

- the recursive formula tells how PyTorch updates the current learning rate from one step to the next.

Thus, the two formulas are not competing definitions. They describe the same scheduler at two different levels:

- one as an ideal schedule,

- the other as an implementation rule.
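The two formulas agree inside a single cycle: starting from $\eta_0 = \eta_{\max}$, the ratio factors telescope, so the recursion reproduces the closed form. The sketch below checks this numerically in plain Python for $T_{\max} = 100$; the helpers are our own and are not PyTorch's internal code.

```python
import math


def closed_form(t, t_max, eta_max, eta_min):
    return eta_min + 0.5 * (eta_max - eta_min) * (1.0 + math.cos(math.pi * t / t_max))


def recursive_schedule(t_max, eta_max, eta_min):
    """Apply the ratio-form recursive update for t = 0 .. t_max - 1.

    Valid inside a single cycle only: continuing past T_max would divide by
    1 + cos(pi) = 0 at the next step, so that case needs separate handling.
    """
    lrs = [eta_max]
    for t in range(t_max):
        ratio = (1.0 + math.cos(math.pi * (t + 1) / t_max)) / (1.0 + math.cos(math.pi * t / t_max))
        lrs.append(eta_min + (lrs[-1] - eta_min) * ratio)
    return lrs


if __name__ == "__main__":
    rec = recursive_schedule(100, 0.1, 0.01)
    gap = max(abs(r - closed_form(t, 100, 0.1, 0.01)) for t, r in enumerate(rec))
    print("max |recursive - closed form| =", gap)
```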

PyTorch-specific interpretation

Although the SGDR paper includes restarts, `CosineAnnealingLR` performs cosine annealing without restarts. Therefore,

$$
T_{cur} = t,
$$

and it increases monotonically with each call to `step()`.

9.2 Meaning of T_max in PyTorch

According to the PyTorch documentation:

`T_max` (int): maximum number of iterations.

This is the most precise practical interpretation.

If the scheduler is stepped once per epoch, then T_max is effectively the number of epochs.

If it is stepped once per batch, then T_max should be set in iterations.

9.3 Epoch-based example

```python
import torch
import torch.optim as optim
from torch.optim.lr_scheduler import CosineAnnealingLR
import matplotlib.pyplot as plt

# Dummy model and optimizer
model = torch.nn.Linear(10, 2)
optimizer = optim.SGD(model.parameters(), lr=0.1)  # eta_max = 0.1

# Here step() is called once per epoch, so T_max is measured in epochs
scheduler = CosineAnnealingLR(optimizer, T_max=100, eta_min=0.01)

lrs = []
for epoch in range(100):
    # train(...)
    # validate(...)
    optimizer.step()  # placeholder for the real training step
    lrs.append(optimizer.param_groups[0]["lr"])  # learning rate used in this epoch
    scheduler.step()  # once per epoch, after optimizer.step()

plt.figure(figsize=(10, 6))
plt.plot(lrs, marker="o")
plt.title("CosineAnnealingLR: Single Decay Cycle")
plt.xlabel("Epoch")
plt.ylabel("Learning Rate")
plt.grid(True)
plt.show()
```

9.4 Iteration-based interpretation

If step() is called once per mini-batch, then T_max must be expressed in iterations, not epochs.

For example, if:

- training lasts 100 epochs,

- each epoch has 500 mini-batches,

then a full cosine cycle over the entire training run would require

$$
T_{\max} = 100 \times 500 = 50{,}000 \text{ iterations}.
$$
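A per-iteration version of the epoch-based example might look as follows. The toy dataset, model, loss and batch size are assumptions chosen only so the snippet runs on its own; the key line is `T_max=num_epochs * len(loader)`, which counts scheduler steps in iterations because `scheduler.step()` is called once per batch.

```python
import torch
import torch.optim as optim
from torch.optim.lr_scheduler import CosineAnnealingLR
from torch.utils.data import DataLoader, TensorDataset

torch.manual_seed(0)

# Toy data (assumption): 40 samples, batch size 8, so 5 batches per epoch.
dataset = TensorDataset(torch.randn(40, 10), torch.randint(0, 2, (40,)))
loader = DataLoader(dataset, batch_size=8, shuffle=True)
model = torch.nn.Linear(10, 2)
criterion = torch.nn.CrossEntropyLoss()
optimizer = optim.SGD(model.parameters(), lr=0.1)  # eta_max = 0.1

num_epochs = 3
# step() is called once per batch, so T_max counts iterations.
scheduler = CosineAnnealingLR(optimizer, T_max=num_epochs * len(loader), eta_min=0.01)

lrs = []
for epoch in range(num_epochs):
    for inputs, labels in loader:
        optimizer.zero_grad()
        loss = criterion(model(inputs), labels)
        loss.backward()
        optimizer.step()
        lrs.append(optimizer.param_groups[0]["lr"])  # lr used for this update
        scheduler.step()                              # once per iteration

print(len(lrs), lrs[0], optimizer.param_groups[0]["lr"])
```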

10. PyTorch: CosineAnnealingWarmRestarts

10.1 What it implements

CosineAnnealingWarmRestarts implements the restart-based cosine schedule associated with SGDR.

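In PyTorch, $\eta_{\max}$ is again determined by the optimizer’s initial learning rate (`base_lr` for each parameter group).

PyTorch defines the schedule within the current cycle as

$$
\eta_t = \eta_{\min} + \frac{1}{2}\left(\eta_{\max} - \eta_{\min}\right)\left(1 + \cos\left(\frac{T_{cur}}{T_i}\pi\right)\right),
$$

where:

- $\eta_{\max}$ is the initial learning rate,

- $T_{cur}$ is the number of scheduler steps since the last restart,

- $T_i$ is the number of scheduler steps between two restarts.

At the restart boundaries:

- when $T_{cur} = T_i$, the schedule reaches $\eta_t = \eta_{\min}$;

- when the next cycle starts and $T_{cur} = 0$, the learning rate is reset to $\eta_t = \eta_{\max}$.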

10.2 Main PyTorch parameters

Key parameters

As specified in the PyTorch documentation:

- `T_0` (int): number of iterations until the first restart;

- `T_mult` (int, optional): factor by which the cycle length grows after each restart (default: 1);

- `eta_min` (float, optional): minimum learning rate (default: 0).

For example:

- `T_0 = 20`, `T_mult = 1` means all cycles last 20 scheduler steps;

- `T_0 = 20`, `T_mult = 2` means cycle lengths are 20, 40, 80, …

10.3 Epoch-based example

```python
import torch
import torch.optim as optim
from torch.optim.lr_scheduler import CosineAnnealingWarmRestarts
import matplotlib.pyplot as plt

# Dummy model and optimizer
model = torch.nn.Linear(10, 2)
optimizer = optim.SGD(model.parameters(), lr=0.1)  # eta_max = 0.1

# Here step() is called once per epoch, so T_0 is measured in epochs
scheduler = CosineAnnealingWarmRestarts(
    optimizer,
    T_0=20,
    T_mult=2,
    eta_min=0.01,
)

lrs = []
# 140 epochs = 20 + 40 + 80, i.e. exactly three cycles
for epoch in range(140):
    # train(...)
    # validate(...)
    optimizer.step()  # placeholder for the real training step
    lrs.append(optimizer.param_groups[0]["lr"])  # learning rate used in this epoch
    scheduler.step()  # once per epoch, after optimizer.step()

plt.figure(figsize=(10, 6))
plt.plot(lrs, marker="o")
plt.title("CosineAnnealingWarmRestarts: Cyclic Decay")
plt.xlabel("Epoch")
plt.ylabel("Learning Rate")
plt.grid(True)
plt.show()
```

10.4 Batch-level stepping is explicitly supported

A crucial detail from the PyTorch documentation is that CosineAnnealingWarmRestarts.step() can be called after every batch update.

PyTorch even provides the following style of usage:

```python
# optimizer, net, criterion, dataloader, T_0 and T_mult are assumed to be defined elsewhere
scheduler = CosineAnnealingWarmRestarts(optimizer, T_0, T_mult)
iters = len(dataloader)
for epoch in range(20):
    for i, sample in enumerate(dataloader):
        inputs, labels = sample["inputs"], sample["labels"]
        optimizer.zero_grad()
        outputs = net(inputs)
        loss = criterion(outputs, labels)
        loss.backward()
        optimizer.step()
        # Fractional epoch: i / iters of the way through the current epoch
        scheduler.step(epoch + i / iters)
```

This means that for `CosineAnnealingWarmRestarts`:

- scheduler time can be tracked at sub-epoch resolution,

- fractional epochs can be used,

- the cosine schedule can be made much smoother in iteration space.

Info

This is one of the most important practical distinctions between the abstract schedule and the implementation: the same mathematical scheduler can be indexed either by epochs or by iterations, as long as the interpretation of $T_0$, $T_i$, and $T_{cur}$ is kept consistent.
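The fractional-epoch indexing can be checked against the closed form. In the sketch below, the dummy model, the 4-batch "epoch" and the parameter values are arbitrary assumptions, and `optimizer.step()` stands in for a real training step. After each `scheduler.step(epoch + i / iters)` call, the learning rate is compared with the formula evaluated at $T_{cur} = (\text{epoch} + i/\text{iters}) \bmod T_0$, which is how we understand the position within the cycle for `T_mult = 1`.

```python
import math

import torch
from torch.optim import SGD
from torch.optim.lr_scheduler import CosineAnnealingWarmRestarts

model = torch.nn.Linear(4, 1)                    # dummy model
optimizer = SGD(model.parameters(), lr=0.1)      # eta_max = 0.1
scheduler = CosineAnnealingWarmRestarts(optimizer, T_0=2, T_mult=1, eta_min=0.01)

iters = 4  # hypothetical number of batches per epoch
lrs = []
for epoch in range(4):
    for i in range(iters):
        optimizer.step()                         # stand-in for a real training step
        scheduler.step(epoch + i / iters)        # fractional epoch, as in the docs example
        lrs.append(optimizer.param_groups[0]["lr"])


def expected(t, t_0=2, eta_max=0.1, eta_min=0.01):
    # Closed form with T_cur = t mod T_0 (valid for T_mult = 1).
    t_cur = t % t_0
    return eta_min + 0.5 * (eta_max - eta_min) * (1.0 + math.cos(math.pi * t_cur / t_0))


print([round(lr, 4) for lr in lrs])
```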

11. Comparison with step-based decay

The choice between cosine annealing and step-based decay is not merely aesthetic. It reflects a choice about how aggressively the optimization regime should change over time.

When cosine annealing is often preferable to step decay

Cosine annealing is often the cleaner choice when:

- abrupt learning-rate transitions are undesirable,

- a long training horizon is available,

- a gradual exploitation phase is preferred,

- one wants to avoid manual placement of decay milestones.

Step decay may still be preferable when:

- specific milestone epochs are already known from prior experiments,

- a simpler and more manually controllable schedule is preferred,

- exact regime changes are desired rather than a continuous transition.

Note

Neither family dominates universally. In practice, the better schedule depends on the optimizer, model architecture, training budget, and the sensitivity of the task to late-stage learning-rate behavior.

12. Scope of the stochastic-noise intuition

The stochastic-noise interpretation given above is especially natural for SGD-like optimizers, where mini-batch noise plays an explicit conceptual role.

Scope of that intuition

Cosine annealing can also be used with adaptive optimizers such as Adam or AdamW. However, the “learning rate as direct control of SGD noise” interpretation is most transparent in SGD-like settings. With adaptive optimizers, the effective optimization dynamics depend not only on the scheduler but also on the optimizer’s internal rescaling mechanism.

13. Practical guidance

When cosine annealing is a natural choice

Cosine Annealing is often a good option when:

- a smooth monotone decay is preferred over abrupt milestones,

- a long training run is available,

- stable late-stage exploitation is important,

- a schedule with minimal manual milestone design is desired.

When restarts are worth considering

Cosine Annealing with Restarts is worth considering when:

- the compute budget is large enough to support multiple cycles,

- several candidate checkpoints are desired,

- repeated exploratory phases are considered beneficial,

- the task is known to benefit from restart-based schedules.

When caution is needed

These schedules are not automatically optimal. Care is especially needed when:

- the training budget is short,

- the cycle length is poorly calibrated,

- the scheduler time unit is inconsistent with the stepping frequency,

- the restart schedule becomes too expensive relative to the performance gain.

14. Summary

Cosine Annealing is a smooth learning-rate decay policy based on a half-cosine schedule. Its key appeal lies in the fact that it provides a gradual transition from high-learning-rate exploration to low-learning-rate exploitation.

Its restart-based extension, SGDR-style cosine annealing with warm restarts, repeats this process across multiple cycles by periodically resetting the learning rate to a high value.

The most important practical points are:

- `CosineAnnealingLR` implements cosine decay without restarts;

- `CosineAnnealingWarmRestarts` implements the restart-based variant;

- $T_{\max}$, $T_0$, and related quantities count scheduler steps, not intrinsically epochs;

- stepping per epoch and stepping per batch are both valid, provided the scheduler parameters are chosen in the corresponding time unit;

- model selection across cycles should be done on a validation set, not on the test set.

Final takeaway

Cosine Annealing is widely used because it is simple, smooth, and often effective empirically. Warm restarts extend the same idea by reintroducing exploration periodically. Their effectiveness depends not only on the formula, but on choosing the cycle length and stepping granularity consistently with the actual training pipeline.