Solution to the learning slowdown induced by Gaussian initialization of neural-network parameters

Suppose we consider a neuron in the first hidden layer , with input weights (see the two green layers in the previous figure).

Important

Each weight is initialized so that:

The idea is to compress the Gaussian proportionally to the number of inputs :

In other words, the Gaussians are compressed, making it less likely that the neuron saturates. Moreover, the bias is still chosen as a Gaussian random variable with mean and standard deviation .

With these choices, the net input to the neuron, , is again a Gaussian random variable (since Gaussian random variables are closed under linear transformations) with mean , but it is much more concentrated (that is, it has smaller variance) than in the previous case.

Important

A neuron initialized in this way is much less likely to saturate and, consequently, is much less subject to learning slowdown.

Note

The bias is still initialized as . This approach is acceptable, since it does not significantly increase the probability that neurons enter saturation. In reality, the way biases are initialized is not particularly critical, provided that saturation is avoided. Some even initialize all biases to , relying on gradient descent to learn appropriate values afterward. However, since the choice of bias initialization has negligible impact, one initializes

Example

Suppose, as in the previous discussion, that of the inputs are zero and are one. Then it is easy to prove that (see the derivation below).

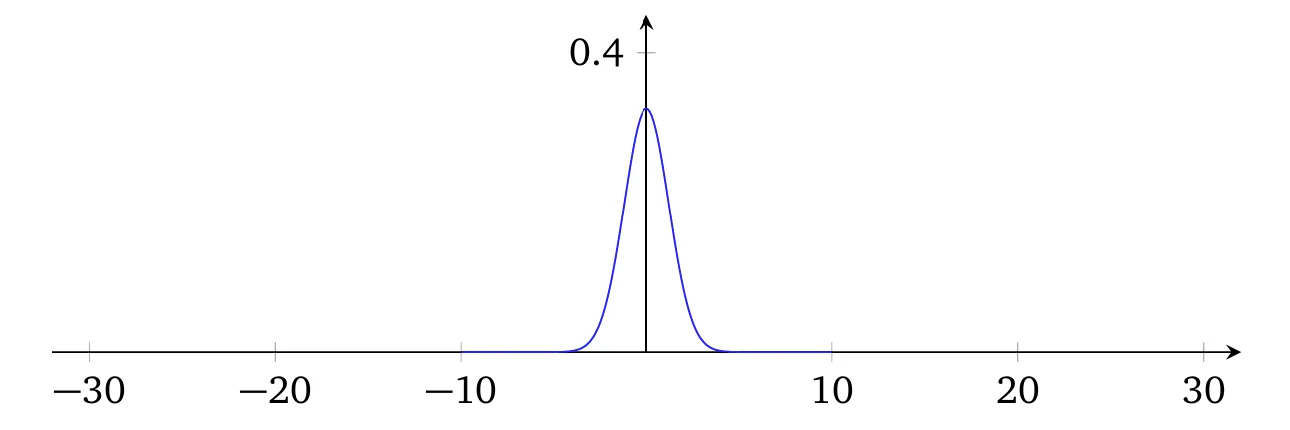

As can be observed, the distribution of the net input of the neuron under consideration is much more peaked than before, to the point that even the plot shown below does not fully convey the situation, since the vertical axis had to be rescaled with respect to the previous plot.

Rigorous proof that

Key assumption: The weights and the bias are i.i.d. random variables.

Contribution of the active weights ():

- For the 500 active inputs, the partial sum is .

- Since the are i.i.d.:

Contribution of the bias :

Total variance of (by independence between weights and bias):

The fundamental property is the additivity of variances under independence, applied both to the weights and to the bias.

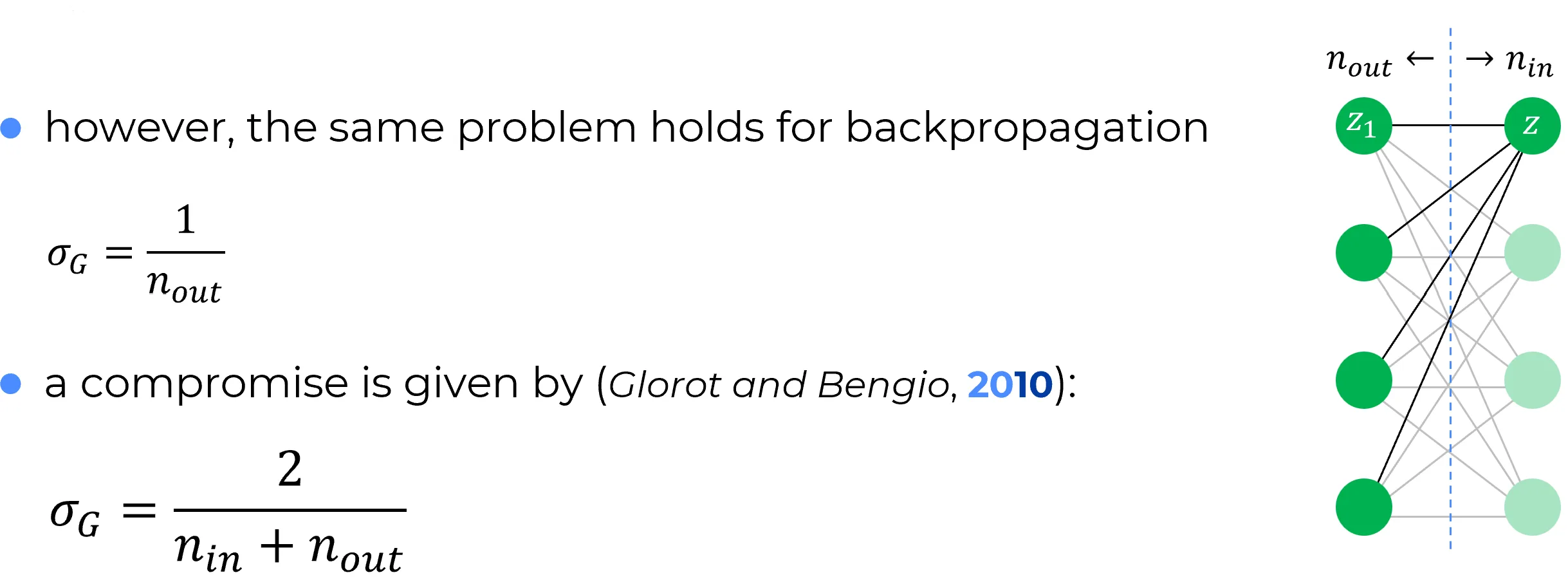

The neural network, during training, is also used from right to left by virtue of back-propagation.

It is therefore legitimate to reinterpret what was the first hidden layer as an “input” layer, and what was the input layer as the first hidden layer (see the following figure).

Following the same idea discussed above (and viewing the network from right to left), each weight associated with the output connections of neuron can be initialized as follows:

where is the number of output connections (see the following note) of neuron in the following figure.

Note

The expression output connections of neuron implies reading the neural network from left to right, in feed-forward terms. Equivalently, one could use the expression incoming connections of neuron , which instead implies reading the network from right to left, in back-propagation terms.

🔄

In the previous discussion, the analysis of the weight-initialization problem in the feedforward phase (from left to right) was separated from the analysis of the same problem in the backpropagation phase (from right to left), considering two layers in both scenarios.

However, the same reasoning could have been applied mutatis mutandis by considering a network with three layers and focusing, for example, on a neuron in the intermediate layer.

Xavier (Glorot-Bengio) initialization

The initialization proposed by Xavier, Glorot, and Bengio states that the weights are drawn from a normal distribution with:

✅ Each neuron in layer initializes only the weights of its incoming connections (that is, those coming from layer ), but the variance used for initialization also takes into account how many outgoing connections (toward layer ) that neuron has.

Notation used:

- : number of neurons in the previous layer → connections entering the neuron

- : number of neurons in the next layer → connections leaving the neuron (from its point of view)

The proposed variance is therefore a compromise that takes into account both the incoming and outgoing connections of a neuron.

Moreover, the biases associated with each neuron are initialized separately:

that is, by sampling from a standard Gaussian, independently of the weights.

He Initialization (Kaiming Initialization)

While Xavier (or Glorot) initialization is effective for symmetric activation functions that are linear around the origin (such as the hyperbolic tangent or the identity function), it proves inadequate for non-symmetric, non-saturating functions, particularly the Rectified Linear Unit (ReLU).

The ReLU function, defined as , sets all negative inputs to zero. This leads to two fundamental statistical consequences that violate Xavier’s assumptions:

- Non-zero mean: The output of a ReLU always has a positive mean.

- Variance halving: Assuming zero-centered inputs, ReLU “turns off” roughly half the neurons, drastically reducing the signal variance as it propagates through the network.

Therefore, using Xavier with ReLU leads to a progressive decay of variance (vanishing signal), making the training of deep networks difficult.

The Solution: He Initialization

Proposed by He et al. (2015), this initialization is specifically designed to maintain the variance of activations stable across layers in networks based on ReLU (or variants like Leaky ReLU).

Weights are drawn from a normal distribution with zero mean and a variance calibrated to compensate for the “halving” effect of ReLU:

Rationale behind the variance

- : As with Xavier, the variance is inversely proportional to the number of inputs to normalize the weighted sum.

- Factor of 2: This is the crucial adjustment for ReLU. Since ReLU zeroes out half the inputs (reducing variance by a factor of ), it is necessary to multiply the weight variance by 2 to restore the original signal level.

Mathematical Justification

The goal is to preserve activation variance from one layer to the next, i.e., ensuring that .

Let us consider a neuron in layer . Its pre-activation input is . We assume:

- The weights and inputs are independent and identically distributed (i.i.d.) random variables.

- The weights have zero mean () and are symmetric.

- The bias is initialized to zero ().

The variance of the pre-activation output is:

Since and are independent and , the variance of the product is the product of the variances:

Here the effect of ReLU comes into play. The input is the output of the previous layer’s ReLU (). If we assume that has a symmetric distribution around zero, ReLU suppresses half the values. Therefore, the second moment (signal power) is halved:

Substituting this relation into the variance equation:

For the signal not to explode or vanish, we want the variance to be preserved, i.e., . Imposing this equality:

Bias Initialization

Unlike the general theoretical case, when using ReLU activations it is standard practice to initialize biases to zero (or to a very small positive constant, e.g. 0.01, to avoid dead neurons, although 0 is the most common default).

Note on Biases

A Gaussian distribution is not used for biases (e.g. ), since it would add unnecessary variance and risk starting ReLU neurons in an “off” state (dead ReLU) if the initial value were strongly negative.